Individual AI agents solve focused problems. Production enterprise systems require coordinated teams of specialized agents working together toward complex objectives. This transition from single-agent architectures to multi-agent orchestration represents one of the most significant challenges in deploying autonomous AI at scale. The choice of orchestration pattern fundamentally determines system performance across four critical dimensions: token consumption efficiency, latency characteristics, scalability limits, and operational complexity. Organizations that master these patterns gain the ability to decompose business processes into coordinated agent workflows that operate with enterprise reliability.

This article examines the architectural patterns, state management strategies, and implementation techniques that enable effective multi-agent coordination. We will explore proven orchestration approaches including supervisor hierarchies, peer-to-peer collaboration, sequential pipelines, and hybrid patterns. The implementations demonstrate production-ready patterns using LangGraph, the leading framework for stateful multi-agent workflows. All code examples progress from foundational concepts through enterprise deployments that handle thousands of concurrent agent executions.

Understanding Multi-Agent Orchestration

Multi-agent orchestration addresses a fundamental limitation of single-agent architectures. When one agent manages everything, prompts become unwieldy as context windows overflow with tool definitions and instructions. Token consumption escalates as the agent must maintain awareness of dozens of capabilities. Tool calling becomes inconsistent when the agent must choose from too many options. Performance degrades as the single agent becomes a bottleneck for all operations.

Orchestrated multi-agent systems solve these problems through specialization and coordination. Instead of one generalist agent, you deploy focused specialist agents, each expert in their specific domain. A database agent handles queries and schema operations. An API integration agent manages external service calls. A document processing agent extracts and analyzes content. A validation agent ensures quality and compliance. These specialists coordinate through well-defined interfaces, passing context and delegating tasks as workflows progress.

The orchestration layer manages four critical responsibilities. Task decomposition takes high-level objectives and breaks them into executable subtasks that individual agents can handle. Agent selection routes each subtask to the appropriate specialist based on capabilities and current load. State management maintains shared context across agent interactions while preventing race conditions. Result aggregation synthesizes outputs from multiple agents into coherent responses.

graph TB

subgraph "Single Agent Architecture"

A[User Request] --> B[General Purpose Agent]

B --> C1[Database Tool]

B --> C2[API Tool]

B --> C3[Document Tool]

B --> C4[Validation Tool]

B --> C5[N Tools...]

C1 & C2 & C3 & C4 & C5 --> D[Response]

end

style B fill:#ffc3c3

graph TB

subgraph "Multi-Agent Architecture"

E[User Request] --> F[Orchestrator]

F --> G1[Database Agent]

F --> G2[API Agent]

F --> G3[Document Agent]

F --> G4[Validation Agent]

G1 --> H[Shared State]

G2 --> H

G3 --> H

G4 --> H

H --> I[Aggregated Response]

end

style F fill:#c3f0ca

style H fill:#fff4c3Core Orchestration Patterns

Production multi-agent systems typically implement one of several proven orchestration patterns. Each pattern optimizes for different coordination requirements and makes specific tradeoffs between control, performance, and complexity. Understanding these patterns enables architects to select appropriate approaches for specific business requirements.

The Supervisor Pattern: Centralized Coordination

The supervisor pattern employs hierarchical architecture where a central orchestrator coordinates all agent interactions. The supervisor receives user requests, decomposes them into subtasks, delegates work to specialized agents, monitors progress, validates outputs, and synthesizes final responses. This pattern excels when reasoning transparency, quality assurance, and traceability matter more than raw speed.

Consider a financial services workflow where regulatory compliance demands complete audit trails. The supervisor agent receives a loan application review request. It decomposes this into identity verification, credit assessment, fraud detection, and regulatory compliance checks. Each specialist agent executes its focused task while the supervisor maintains the complete reasoning chain. All decisions trace back through the supervisor, creating the audit log required for regulatory review.

The supervisor pattern provides clear benefits for enterprise deployments. Centralized control simplifies debugging because all coordination logic resides in one place. Quality gates prevent bad outputs from propagating through the system. Sequential reasoning enables complex multi-step workflows where later steps depend on earlier results. The pattern naturally supports human-in-the-loop approval workflows where the supervisor can pause for human review before proceeding.

However, the pattern introduces specific constraints. The supervisor becomes a potential bottleneck as all communication flows through it. Latency increases because agents cannot directly coordinate with each other. Token consumption rises as the supervisor must maintain context for all agents. The pattern works poorly for real-time systems where millisecond latency matters or for high-scale environments processing thousands of concurrent requests.

Sequential Pipeline: Linear Processing Chains

The sequential pipeline pattern chains agents in predefined linear order. Each agent processes output from the previous agent in the sequence, creating a transformation pipeline. This pattern maps naturally to business processes that follow inherent sequential dependencies. Document processing workflows exemplify this pattern: extraction agent reads raw documents, formatting agent structures the content, classification agent categorizes information, validation agent verifies accuracy, and storage agent persists results.

Sequential pipelines provide predictability and determinism. The execution order is explicit and unchanging. Each agent has clearly defined inputs and outputs. Debugging becomes straightforward because you can examine state at each pipeline stage. The pattern handles backpressure naturally by slowing the entire pipeline when downstream agents cannot keep pace.

The constraints are equally clear. Pipelines cannot model workflows with conditional branching or parallel processing. The slowest agent determines overall throughput. Failures in early stages block all downstream processing. The pattern wastes resources when later stages could begin processing while earlier stages continue with new inputs.

Parallel Orchestration: Concurrent Execution

Parallel orchestration enables multiple agents to process the same input simultaneously with results merged downstream. This pattern optimizes latency by exploiting parallelism. A research aggregation system demonstrates this well. The supervisor broadcasts a research query to multiple specialist agents concurrently. A technical research agent searches academic databases. A market research agent queries business intelligence sources. A news research agent scans current events. An opinion research agent analyzes social media. All agents work simultaneously, and their results merge into a comprehensive research report.

Parallel execution provides compelling benefits. Latency drops dramatically because the slowest agent determines total time rather than the sum of all agent times. Throughput increases as agents utilize available compute resources concurrently. The pattern naturally handles agent failures because other agents continue working while failed agents retry or get replaced.

The challenges center on coordination complexity. Agents must carefully coordinate access to shared resources to prevent conflicts. Result merging requires sophisticated logic to handle contradictory outputs from different agents. Resource consumption spikes as all agents run simultaneously, potentially overwhelming quotas or rate limits. The pattern requires infrastructure capable of supporting high concurrent execution loads.

Adaptive Agent Networks: Decentralized Collaboration

Adaptive agent networks eliminate central coordinators in favor of peer-to-peer collaboration. Agents communicate directly based on current context and system state. This pattern excels when flexibility and self-organization matter more than deterministic behavior. Agents discover each other dynamically, negotiate task allocation, and adapt to changing conditions without central planning.

Consider a customer service environment with unpredictable demand patterns. Multiple customer service agents operate independently. When a complex issue arrives, agents negotiate among themselves to determine who has relevant expertise. They share context directly rather than routing through a supervisor. If one agent becomes overloaded, others automatically pick up slack. The network adapts to changing load without human intervention.

Adaptive networks provide exceptional scalability because they lack central bottlenecks. The system handles agent failures gracefully as remaining agents reorganize automatically. The pattern supports incremental deployment where you add new specialist agents without reconfiguring existing ones.

The complexity trade-offs are significant. Debugging becomes challenging because behavior emerges from agent interactions rather than following predetermined logic. Ensuring consistent outcomes requires sophisticated conflict resolution mechanisms. The pattern may produce different results for identical inputs based on agent availability and current system state. Organizations with strict compliance requirements often cannot accept this non-determinism.

graph TB

subgraph "Supervisor Pattern"

S1[User Request] --> S2[Supervisor Agent]

S2 --> S3[Agent 1]

S2 --> S4[Agent 2]

S2 --> S5[Agent 3]

S3 & S4 & S5 --> S2

S2 --> S6[Response]

end

style S2 fill:#c3f0cagraph TB

subgraph "Sequential Pipeline"

P1[Input] --> P2[Agent 1]

P2 --> P3[Agent 2]

P3 --> P4[Agent 3]

P4 --> P5[Output]

end

graph TB

subgraph "Parallel Orchestration"

PA1[Input] --> PA2{Broadcast}

PA2 --> PA3[Agent 1]

PA2 --> PA4[Agent 2]

PA2 --> PA5[Agent 3]

PA3 & PA4 & PA5 --> PA6[Merge]

PA6 --> PA7[Output]

end

style PA6 fill:#fff4c3graph TB

subgraph "Adaptive Network"

AN1[Input] --> AN2[Agent 1]

AN2 <--> AN3[Agent 2]

AN2 <--> AN4[Agent 3]

AN3 <--> AN4

AN2 & AN3 & AN4 --> AN5[Output]

end

style AN2 fill:#e1f5ff

style AN3 fill:#e1f5ff

style AN4 fill:#e1f5ffImplementing Supervisor Pattern with LangGraph

LangGraph provides the most mature framework for building production multi-agent systems with explicit state management and graph-based orchestration. The library handles the complexities of state persistence, parallel execution, and conditional routing while giving developers precise control over agent behavior. Let us implement a supervisor pattern for a document processing workflow that demonstrates production-ready patterns.

# multi_agent_supervisor.py

from typing import TypedDict, Annotated, Sequence

from langchain_core.messages import BaseMessage, HumanMessage, SystemMessage

from langchain_openai import ChatOpenAI

from langgraph.graph import StateGraph, END

from langgraph.prebuilt import ToolNode

import operator

import functools

# Define the shared state structure

class AgentState(TypedDict):

"""State shared across all agents in the workflow."""

messages: Annotated[Sequence[BaseMessage], operator.add]

next: str # Next agent to execute

final_response: str # Aggregated final response

class DocumentProcessingWorkflow:

"""

Multi-agent document processing using supervisor pattern.

Demonstrates state management, agent coordination, and result aggregation.

"""

def __init__(self, llm_model: str = "gpt-4"):

self.llm = ChatOpenAI(model=llm_model, temperature=0)

self.graph = self._build_graph()

def _build_graph(self) -> StateGraph:

"""Construct the multi-agent workflow graph."""

# Create state graph

workflow = StateGraph(AgentState)

# Add supervisor node

workflow.add_node("supervisor", self._supervisor_agent)

# Add specialist agent nodes

workflow.add_node("extractor", self._extractor_agent)

workflow.add_node("classifier", self._classifier_agent)

workflow.add_node("validator", self._validator_agent)

workflow.add_node("aggregator", self._aggregator_agent)

# Define edges from supervisor to agents

workflow.add_conditional_edges(

"supervisor",

self._route_to_next_agent,

{

"extractor": "extractor",

"classifier": "classifier",

"validator": "validator",

"aggregator": "aggregator",

"FINISH": END

}

)

# All agents return to supervisor for next decision

for agent in ["extractor", "classifier", "validator", "aggregator"]:

workflow.add_edge(agent, "supervisor")

# Set entry point

workflow.set_entry_point("supervisor")

return workflow.compile()

def _supervisor_agent(self, state: AgentState) -> AgentState:

"""

Supervisor coordinates the workflow and decides next action.

"""

messages = state["messages"]

# Determine workflow stage based on message history

agent_history = [msg.name for msg in messages if hasattr(msg, 'name')]

if not agent_history:

# Start workflow with extraction

next_agent = "extractor"

elif "extractor" in agent_history and "classifier" not in agent_history:

# After extraction, classify the document

next_agent = "classifier"

elif "classifier" in agent_history and "validator" not in agent_history:

# After classification, validate the results

next_agent = "validator"

elif "validator" in agent_history and "aggregator" not in agent_history:

# After validation, aggregate results

next_agent = "aggregator"

else:

# All agents completed, finish workflow

next_agent = "FINISH"

# Create supervisor message explaining the decision

supervisor_message = SystemMessage(

content=f"Supervisor: Routing to {next_agent} agent",

name="supervisor"

)

return {

"messages": [supervisor_message],

"next": next_agent

}

def _route_to_next_agent(self, state: AgentState) -> str:

"""Conditional routing based on supervisor decision."""

return state["next"]

def _extractor_agent(self, state: AgentState) -> AgentState:

"""

Extract structured information from documents.

"""

messages = state["messages"]

# Get the original user request

user_message = next((m for m in messages if isinstance(m, HumanMessage)), None)

# Simulate extraction (in production, use actual extraction logic)

extraction_prompt = f"""

You are a document extraction specialist. Extract key information from:

{user_message.content if user_message else 'No content provided'}

Extract:

- Document type

- Key entities (people, organizations, dates)

- Main topics

- Critical facts

"""

response = self.llm.invoke([SystemMessage(content=extraction_prompt)])

extraction_message = HumanMessage(

content=f"Extraction Results: {response.content}",

name="extractor"

)

return {"messages": [extraction_message]}

def _classifier_agent(self, state: AgentState) -> AgentState:

"""

Classify document type and determine processing requirements.

"""

messages = state["messages"]

# Get extraction results

extraction_msg = next(

(m for m in reversed(messages) if hasattr(m, 'name') and m.name == "extractor"),

None

)

classification_prompt = f"""

You are a document classification specialist. Based on the extraction results:

{extraction_msg.content if extraction_msg else 'No extraction data'}

Classify:

- Document category (contract, report, invoice, etc.)

- Sensitivity level (public, internal, confidential)

- Required validation checks

- Compliance requirements

"""

response = self.llm.invoke([SystemMessage(content=classification_prompt)])

classification_message = HumanMessage(

content=f"Classification Results: {response.content}",

name="classifier"

)

return {"messages": [classification_message]}

def _validator_agent(self, state: AgentState) -> AgentState:

"""

Validate extracted information and classifications.

"""

messages = state["messages"]

# Get both extraction and classification results

recent_messages = [

m for m in messages

if hasattr(m, 'name') and m.name in ["extractor", "classifier"]

]

validation_prompt = f"""

You are a validation specialist. Review the following results for:

- Accuracy and completeness

- Consistency between extraction and classification

- Compliance with requirements

- Data quality issues

Results to validate:

{chr(10).join(m.content for m in recent_messages[-2:])}

"""

response = self.llm.invoke([SystemMessage(content=validation_prompt)])

validation_message = HumanMessage(

content=f"Validation Results: {response.content}",

name="validator"

)

return {"messages": [validation_message]}

def _aggregator_agent(self, state: AgentState) -> AgentState:

"""

Aggregate results from all agents into final response.

"""

messages = state["messages"]

# Collect all agent results

agent_results = {

"extraction": next(

(m.content for m in messages if hasattr(m, 'name') and m.name == "extractor"),

"No extraction"

),

"classification": next(

(m.content for m in messages if hasattr(m, 'name') and m.name == "classifier"),

"No classification"

),

"validation": next(

(m.content for m in messages if hasattr(m, 'name') and m.name == "validator"),

"No validation"

)

}

aggregation_prompt = f"""

You are a results aggregation specialist. Synthesize the following

into a coherent final report:

Extraction: {agent_results['extraction']}

Classification: {agent_results['classification']}

Validation: {agent_results['validation']}

Provide a comprehensive summary suitable for business stakeholders.

"""

response = self.llm.invoke([SystemMessage(content=aggregation_prompt)])

aggregator_message = HumanMessage(

content=f"Final Report: {response.content}",

name="aggregator"

)

return {

"messages": [aggregator_message],

"final_response": response.content

}

def process_document(self, document_content: str) -> dict:

"""

Process a document through the multi-agent workflow.

Args:

document_content: The document text to process

Returns:

Dictionary with final response and execution trace

"""

initial_state = {

"messages": [HumanMessage(content=document_content)],

"next": "",

"final_response": ""

}

# Execute the workflow

result = self.graph.invoke(initial_state)

# Extract execution trace for debugging/auditing

execution_trace = [

{

"agent": msg.name,

"output": msg.content[:200] + "..." if len(msg.content) > 200 else msg.content

}

for msg in result["messages"]

if hasattr(msg, 'name')

]

return {

"final_response": result.get("final_response", "No response generated"),

"execution_trace": execution_trace,

"total_steps": len(execution_trace)

}

# Example usage

if __name__ == "__main__":

# Initialize workflow

workflow = DocumentProcessingWorkflow()

# Process a document

document = """

CONFIDENTIAL EMPLOYMENT CONTRACT

This agreement is made between TechCorp Inc. and John Smith

starting January 1, 2026 for the position of Senior Software Engineer

with annual compensation of $180,000.

Responsibilities include leading the AI platform development team

and managing technical architecture decisions.

"""

result = workflow.process_document(document)

print("=== Final Response ===")

print(result["final_response"])

print("\n=== Execution Trace ===")

for step in result["execution_trace"]:

print(f"{step['agent']}: {step['output']}")

print(f"\nTotal workflow steps: {result['total_steps']}")This implementation demonstrates several production patterns. Explicit state management using TypedDict ensures type safety and clear data contracts between agents. The supervisor maintains complete control over workflow execution, enabling audit trails and human-in-the-loop interventions. Conditional routing allows dynamic workflows where later stages depend on earlier results. State persistence through LangGraph checkpointing enables resumable workflows that survive failures.

State Management and Persistence

Production multi-agent systems require robust state management to maintain consistency across concurrent agent executions, enable workflow resumption after failures, support human-in-the-loop approvals, and provide debugging visibility. LangGraph implements state management through three core mechanisms that work together to provide enterprise-grade reliability.

The state schema defines the structure of data flowing through the workflow. Using TypedDict with Annotated types provides compile-time type checking while enabling runtime validation. Reducer functions control how updates merge when multiple agents modify the same state fields concurrently. The default reducer replaces values. The add reducer appends to sequences. Custom reducers implement domain-specific merge logic.

Checkpointing persists state at configurable intervals, creating recovery points for long-running workflows. The checkpoint system stores complete state snapshots including messages, intermediate results, and execution context. When workflows fail or get interrupted, they resume from the most recent checkpoint rather than restarting from the beginning. This capability proves essential for workflows that take minutes or hours to complete.

# stateful_multi_agent.py

from typing import TypedDict, Annotated, List

from langgraph.graph import StateGraph

from langgraph.checkpoint.sqlite import SqliteSaver

import operator

class WorkflowState(TypedDict):

"""

State with custom reducers for different merge strategies.

"""

# Replace on update (default behavior)

current_agent: str

iteration_count: int

# Append on update (using operator.add)

execution_log: Annotated[List[str], operator.add]

agent_results: Annotated[List[dict], operator.add]

# Custom merge logic

confidence_scores: dict

def custom_confidence_reducer(existing: dict, updates: dict) -> dict:

"""

Custom reducer that takes maximum confidence score for each key.

"""

result = existing.copy() if existing else {}

for key, value in (updates or {}).items():

if key not in result or value > result[key]:

result[key] = value

return result

# Create workflow with persistence

class PersistentMultiAgent:

"""Multi-agent system with checkpoint-based state persistence."""

def __init__(self, checkpoint_path: str = "./checkpoints.db"):

# Initialize SQLite checkpointer for state persistence

self.checkpointer = SqliteSaver.from_conn_string(checkpoint_path)

self.graph = self._build_graph()

def _build_graph(self) -> StateGraph:

workflow = StateGraph(WorkflowState)

# Add nodes (agent implementations)

workflow.add_node("agent1", self._agent1)

workflow.add_node("agent2", self._agent2)

# Define workflow

workflow.set_entry_point("agent1")

workflow.add_edge("agent1", "agent2")

workflow.set_finish_point("agent2")

# Compile with checkpointer

return workflow.compile(checkpointer=self.checkpointer)

def _agent1(self, state: WorkflowState) -> WorkflowState:

"""First agent in workflow."""

return {

"current_agent": "agent1",

"iteration_count": state.get("iteration_count", 0) + 1,

"execution_log": [f"Agent1 executed at iteration {state.get('iteration_count', 0)}"],

"agent_results": [{"agent": "agent1", "status": "completed"}],

"confidence_scores": {"agent1_confidence": 0.95}

}

def _agent2(self, state: WorkflowState) -> WorkflowState:

"""Second agent in workflow."""

return {

"current_agent": "agent2",

"execution_log": [f"Agent2 executed after {state.get('current_agent')}"],

"agent_results": [{"agent": "agent2", "status": "completed"}],

"confidence_scores": {"agent2_confidence": 0.87}

}

def execute(self, initial_state: dict, thread_id: str = "default") -> dict:

"""

Execute workflow with state persistence.

Args:

initial_state: Starting state

thread_id: Unique identifier for this execution thread

Returns:

Final state after workflow completion

"""

config = {"configurable": {"thread_id": thread_id}}

# Execute workflow with automatic checkpointing

result = self.graph.invoke(initial_state, config=config)

return result

def resume_from_checkpoint(self, thread_id: str) -> dict:

"""

Resume workflow from last checkpoint.

Args:

thread_id: Thread identifier for the workflow to resume

Returns:

Final state after resuming execution

"""

config = {"configurable": {"thread_id": thread_id}}

# Get latest checkpoint

checkpoints = list(self.graph.get_state_history(config))

if not checkpoints:

raise ValueError(f"No checkpoints found for thread {thread_id}")

# Resume from latest checkpoint

latest_checkpoint = checkpoints[0]

result = self.graph.invoke(latest_checkpoint.values, config=config)

return result

def get_execution_history(self, thread_id: str) -> List[dict]:

"""

Retrieve complete execution history for a workflow.

Args:

thread_id: Thread identifier

Returns:

List of state snapshots at each checkpoint

"""

config = {"configurable": {"thread_id": thread_id}}

history = []

for checkpoint in self.graph.get_state_history(config):

history.append({

"checkpoint_id": checkpoint.config["configurable"]["checkpoint_id"],

"state": checkpoint.values,

"metadata": checkpoint.metadata

})

return history

# Usage example

if __name__ == "__main__":

workflow = PersistentMultiAgent()

# Start new execution

initial_state = {

"current_agent": "start",

"iteration_count": 0,

"execution_log": [],

"agent_results": [],

"confidence_scores": {}

}

result = workflow.execute(initial_state, thread_id="workflow-001")

print("Final State:")

print(f" Iterations: {result['iteration_count']}")

print(f" Execution Log: {result['execution_log']}")

print(f" Confidence Scores: {result['confidence_scores']}")

# Demonstrate checkpoint retrieval

history = workflow.get_execution_history("workflow-001")

print(f"\nCheckpoints saved: {len(history)}")State persistence enables several critical capabilities for production systems. Long-running workflows that take hours or days can checkpoint progress incrementally. If a workflow fails halfway through, it resumes from the last successful checkpoint rather than starting over. Human-in-the-loop workflows pause at designated points, persist state, and resume when humans provide input. Debugging becomes tractable because developers can inspect state at any point in execution history.

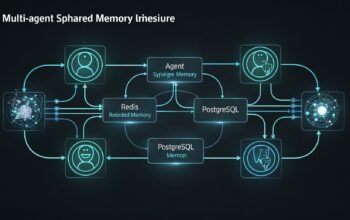

Parallel Agent Execution with Result Merging

Parallel orchestration reduces latency by executing multiple agents concurrently. LangGraph supports parallel execution through scatter-gather patterns where tasks distribute to multiple agents and results consolidate downstream. This pattern works particularly well for research aggregation, multi-perspective analysis, and validation workflows where independent agents can work simultaneously.

# parallel_orchestration.py

from typing import TypedDict, Annotated, List

from langchain_core.messages import HumanMessage, SystemMessage

from langchain_openai import ChatOpenAI

from langgraph.graph import StateGraph, END

import operator

import asyncio

class ResearchState(TypedDict):

"""State for parallel research agents."""

query: str

research_results: Annotated[List[dict], operator.add]

synthesis: str

class ParallelResearchOrchestrator:

"""

Orchestrate multiple research agents running in parallel.

Demonstrates scatter-gather pattern with result aggregation.

"""

def __init__(self):

self.llm = ChatOpenAI(model="gpt-4", temperature=0)

self.graph = self._build_graph()

def _build_graph(self) -> StateGraph:

workflow = StateGraph(ResearchState)

# Add parallel research agents

workflow.add_node("academic_research", self._academic_research)

workflow.add_node("market_research", self._market_research)

workflow.add_node("news_research", self._news_research)

workflow.add_node("synthesis", self._synthesis_agent)

# All research agents start in parallel from entry point

workflow.set_entry_point("academic_research")

workflow.set_entry_point("market_research")

workflow.set_entry_point("news_research")

# All research results flow to synthesis

workflow.add_edge("academic_research", "synthesis")

workflow.add_edge("market_research", "synthesis")

workflow.add_edge("news_research", "synthesis")

# Synthesis is the end

workflow.add_edge("synthesis", END)

return workflow.compile()

async def _research_agent(

self,

state: ResearchState,

agent_type: str,

focus_area: str

) -> ResearchState:

"""

Generic research agent implementation.

Args:

state: Current workflow state

agent_type: Type of research (academic, market, news)

focus_area: Specific focus for this research type

"""

query = state["query"]

research_prompt = f"""

You are a {agent_type} research specialist.

Research the following query with focus on {focus_area}:

Query: {query}

Provide:

- 3-5 key findings

- Supporting evidence

- Confidence level (0-1)

- Sources (simulated)

"""

response = await asyncio.to_thread(

self.llm.invoke,

[SystemMessage(content=research_prompt)]

)

result = {

"agent_type": agent_type,

"findings": response.content,

"timestamp": "2026-02-08T10:00:00Z", # In production, use actual timestamp

"confidence": 0.85 # In production, extract from response

}

return {"research_results": [result]}

async def _academic_research(self, state: ResearchState) -> ResearchState:

"""Research academic literature and papers."""

return await self._research_agent(

state,

"academic",

"peer-reviewed research and theoretical foundations"

)

async def _market_research(self, state: ResearchState) -> ResearchState:

"""Research market trends and business intelligence."""

return await self._research_agent(

state,

"market",

"industry trends and market dynamics"

)

async def _news_research(self, state: ResearchState) -> ResearchState:

"""Research current news and recent developments."""

return await self._research_agent(

state,

"news",

"recent developments and current events"

)

def _synthesis_agent(self, state: ResearchState) -> ResearchState:

"""

Synthesize results from all parallel research agents.

"""

results = state["research_results"]

# Group results by type

findings_by_type = {}

for result in results:

agent_type = result["agent_type"]

findings_by_type[agent_type] = result["findings"]

synthesis_prompt = f"""

You are a research synthesis specialist. Integrate findings from

multiple research perspectives into a coherent analysis.

Academic Research:

{findings_by_type.get('academic', 'No academic research')}

Market Research:

{findings_by_type.get('market', 'No market research')}

News Research:

{findings_by_type.get('news', 'No news research')}

Provide:

- Integrated analysis combining all perspectives

- Key insights from cross-perspective comparison

- Confidence assessment

- Recommended next steps

"""

response = self.llm.invoke([SystemMessage(content=synthesis_prompt)])

return {"synthesis": response.content}

async def conduct_research(self, query: str) -> dict:

"""

Execute parallel research workflow.

Args:

query: Research query

Returns:

Synthesized research results with individual agent outputs

"""

initial_state = {

"query": query,

"research_results": [],

"synthesis": ""

}

# Execute parallel workflow

result = await asyncio.to_thread(

self.graph.invoke,

initial_state

)

return {

"query": query,

"individual_results": result["research_results"],

"synthesized_analysis": result["synthesis"],

"total_agents": len(result["research_results"])

}

# Example usage

async def main():

orchestrator = ParallelResearchOrchestrator()

query = "Impact of agentic AI on enterprise software development practices"

result = await orchestrator.conduct_research(query)

print(f"Research Query: {result['query']}\n")

print(f"Agents Executed: {result['total_agents']}\n")

print("=== Individual Research Results ===")

for res in result["individual_results"]:

print(f"\n{res['agent_type'].upper()} Research:")

print(res['findings'][:300] + "...")

print("\n=== Synthesized Analysis ===")

print(result["synthesized_analysis"])

if __name__ == "__main__":

asyncio.run(main())This parallel implementation demonstrates critical patterns for concurrent agent execution. All research agents execute simultaneously rather than sequentially. The synthesis agent waits until all research agents complete before aggregating results. Async execution ensures the system efficiently utilizes compute resources during network or API calls. Result merging consolidates outputs from multiple agents into coherent analysis.

Conclusion and Next Steps

Multi-agent orchestration transforms collections of individual agents into coordinated systems capable of handling enterprise-scale challenges. The orchestration patterns examined in this article provide proven approaches for different coordination requirements. Supervisor patterns offer centralized control and explicit audit trails. Sequential pipelines handle workflows with natural dependencies. Parallel orchestration minimizes latency through concurrent execution. Adaptive networks provide flexibility through decentralized coordination.

Production implementations require robust state management to maintain consistency, enable recovery, and support debugging. LangGraph provides the infrastructure for explicit state schemas, checkpoint-based persistence, and conditional routing that production systems demand. The code examples demonstrate patterns that scale from development through enterprise deployment.

The next article in this series will examine production deployment strategies for AI agents. We will cover infrastructure requirements for scaling agent workloads, implementing monitoring and observability for autonomous systems, handling failures and implementing retry logic, managing costs and rate limits across multiple agents, and deploying agents across cloud platforms including Azure, AWS, and Kubernetes. These operational patterns separate experimental deployments from production-grade systems that handle millions of agent executions reliably.

References

- AI Agent Orchestration Patterns – Azure Architecture Center

- LangGraph: Agent Orchestration Framework

- LangGraph GitHub Repository

- LangGraph: Multi-Agent Workflows – LangChain Blog

- Choosing the Right Orchestration Pattern for Multi-Agent Systems – Kore.ai

- What is AI Agent Orchestration? – IBM

- Guidance for Multi-Agent Orchestration on AWS

- AI Agent Orchestration Frameworks – n8n Blog

- Unlocking AI Potential with Multi-Agent Orchestration – Deepchecks

- Mastering LangGraph State Management in 2025