The artificial intelligence industry has reached a critical inflection point in 2026. After years of experimental pilots and proof-of-concept projects, enterprises are facing mounting pressure to move AI systems from isolated experiments into production-scale deployments that deliver measurable business value. This shift marks what industry analysts are calling the “Year of Truth for AI,” where the gap between promise and reality must finally narrow.

This article examines the current state of enterprise AI adoption, the challenges preventing production deployment, and the strategic imperatives driving organizations to break free from what has become known as “pilot purgatory.” We will explore real-world case studies, analyze the dramatic shift in build-versus-buy strategies, and provide actionable insights for technical leaders navigating this critical transition.

The Current State of Enterprise AI Adoption

The enterprise AI landscape has transformed dramatically over the past 18 months. Global investment in generative AI solutions more than tripled between 2024 and 2025, reaching approximately $37 billion. This makes enterprise AI one of the fastest-growing software segments in history, yet the translation from investment to production deployment tells a more complex story.

According to comprehensive research from Deloitte and TechRepublic, worker access to AI rose by 50% in 2025, and expectations for scale are climbing rapidly. The number of companies with 40% or more of their AI projects in production is projected to double within six months. However, only approximately 4% of firms today have truly mature, AI-driven capabilities deployed across all business functions.

This disparity highlights the fundamental challenge facing enterprises in 2026. While AI capabilities have advanced at breathtaking speed, with new features shipping roughly every three days at major AI companies, organizational ability to operationalize these capabilities has lagged significantly behind. The result is a widening “opportunity gap” between what AI models can theoretically accomplish and what organizations can actually deploy in production environments.

Understanding Pilot Purgatory

Pilot purgatory refers to the state where organizations become trapped in endless cycles of AI experimentation without graduating projects to production scale. This phenomenon has become pervasive across industries, with enterprises launching numerous AI pilots that demonstrate technical feasibility but fail to achieve enterprise-wide adoption or deliver sustained business impact.

Several factors contribute to this challenge. First, the rapid pace of AI advancement creates constant pressure to restart projects with newer, more capable models rather than completing deployment of existing solutions. Second, organizations often lack the operational infrastructure required to support production AI systems, including MLOps pipelines, robust data engineering capabilities, and comprehensive governance frameworks. Third, the skills gap in AI talent makes it difficult to maintain momentum as projects transition from research to production phases.

The consequences of pilot purgatory extend beyond wasted investment. Organizations stuck in experimental phases face increasing competitive pressure from AI leaders who have successfully operationalized AI capabilities. A recent survey found that more than half of companies using AI experienced at least one negative incident, such as AI systems producing inaccurate or biased results, highlighting the risks of inadequate production readiness.

The Shift from Experimentation to Impact

The enterprise conversation around AI has fundamentally changed. Technology leaders report that the question has evolved from “What can we do with AI?” to “How do we move from experimentation to impact?” This shift reflects growing urgency driven not just by advancing technology but also by competitive dynamics and the accelerating pace of change itself.

Consider the adoption velocity of transformative technologies. The telephone took 50 years to reach 50 million users. The internet required seven years. A leading generative AI tool reached approximately 100 million users in just two months. As of early 2026, that same tool has over 800 million weekly users, representing roughly 10% of the global population. This unprecedented adoption rate creates both opportunity and pressure for enterprises.

Organizations are responding by prioritizing integration of AI into core business operations rather than treating it as an experimental technology. This involves redesigning workflows to incorporate AI capabilities, establishing clear return on investment metrics, and investing in the organizational capabilities needed to operationalize AI at scale. The focus has shifted decisively toward enterprise-wide AI solutions that drive measurable business outcomes.

Real-World Production Deployments and Business Impact

Despite the challenges, leading organizations are successfully deploying AI systems at production scale and achieving significant business results. These case studies provide concrete evidence of what becomes possible when enterprises break through pilot purgatory.

Manufacturing Sector: Production Optimization

A major manufacturer deployed AI agents to optimize production processes, reducing work that previously required six weeks to just one day. This roughly 30x improvement in cycle time (six working weeks is about 30 working days) was achieved through autonomous agents that analyze production data, identify bottlenecks, and recommend optimization strategies. The system integrates with existing manufacturing execution systems and operates continuously to maintain optimal production parameters.

Another manufacturer is using AI agents to support new product development initiatives, leveraging AI to find an optimal balance between competing objectives such as cost and time-to-market. These agents analyze historical product development data, current market conditions, and resource constraints to provide actionable recommendations that reduce development cycles while maintaining quality standards.

Financial Services: Automated Workflow Orchestration

A financial services company built agentic workflows to automatically capture meeting actions from video conferences, draft communications reminding participants of commitments, and track follow-through. This system eliminated manual administrative overhead while improving accountability and project velocity. The implementation required integration with video conferencing platforms, communication tools, and project management systems, demonstrating the complexity of production AI deployments.

A global investment company deployed AI agents end-to-end across its sales process, freeing up more than 90% more time for salespeople to spend with customers. The agents handle lead qualification, research preparation, follow-up communications, and documentation, allowing human salespeople to focus on high-value relationship building and complex negotiations.

Energy Sector: Output Optimization

A large energy producer implemented AI agents to optimize output, achieving an increase in production of up to 5%. For a major energy company, this seemingly modest percentage improvement translates to over one billion dollars in additional annual revenue. The system continuously analyzes operational parameters, weather patterns, equipment performance, and market conditions to recommend real-time adjustments that maximize output while maintaining safety and regulatory compliance.

Public Sector: Addressing Workforce Shortages

Government agencies are deploying AI agents to address critical workforce shortages, partnering AI systems with human workers to complete essential processes. These implementations demonstrate that production AI can address strategic organizational challenges beyond pure efficiency gains. The systems handle routine tasks, data processing, and initial citizen inquiries, allowing human workers to focus on complex cases requiring judgment and empathy.

The Build Versus Buy Decision: A Strategic Inflection

One of the most significant shifts in enterprise AI strategy has been the dramatic movement from building custom AI solutions to purchasing pre-built platforms and tools. This change reflects organizational learning about the true costs and challenges of maintaining production AI systems.

In 2024, enterprise AI solutions were split roughly 50/50 between internally built systems and purchased solutions. The conventional wisdom suggested that large enterprises would develop most AI systems in-house, tailored to their specific data and requirements. However, by 2025, this distribution had shifted dramatically to 76% purchased solutions versus 24% internally built.

This strategic pivot is driven by several factors. Pre-built AI products reach production faster than in-house developed models, allowing organizations to capture value sooner. The complexity of maintaining production AI systems, including model versioning, continuous training, monitoring, and governance, has proven greater than many organizations anticipated. Additionally, the rapid pace of AI advancement makes it difficult for internal teams to keep up with state-of-the-art capabilities while simultaneously handling production operations.

However, the shift toward purchasing solutions does not eliminate the need for internal AI expertise. Organizations still require substantial technical capabilities to integrate purchased AI systems with existing infrastructure, customize them for specific business processes, manage data pipelines, and ensure governance and compliance. The distinction has shifted from building foundational AI models to building the organizational systems and processes that allow AI to deliver business value.

Types of AI Technologies and Their Production Maturity

Different categories of AI technology are at varying stages of production maturity, requiring distinct deployment approaches and organizational capabilities.

Generative AI

Generative AI has moved beyond initial hype into mature, integrated use across nearly all business functions. Organizations are deploying generative AI for customer service chatbots handling common queries, marketing systems generating draft copy and social media content, software development with AI coding assistants, HR processes including resume screening and training content creation, and numerous other applications.

The areas where enterprise leaders believe generative AI will have the greatest impact include content creation and marketing, customer service and support, software development and coding assistance, document processing and analysis, and research and knowledge management. Success in production generative AI deployments requires robust prompt engineering, output validation processes, human oversight mechanisms, and integration with existing business systems.

Agentic AI

Agentic AI represents autonomous systems capable of taking actions, making decisions, and orchestrating complex workflows without continuous human intervention. While still emerging, agentic AI is expected to have the highest impact in customer support operations, with significant potential in supply chain management, research and development, knowledge management, and cybersecurity operations.

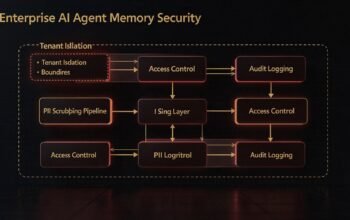

Production deployment of agentic AI requires more sophisticated infrastructure than traditional generative AI. Organizations need robust identity and access management for autonomous agents, comprehensive audit logging to track agent actions, governance frameworks defining acceptable agent behaviors, integration capabilities allowing agents to interact with multiple enterprise systems, and monitoring systems to detect anomalous agent behavior.
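To make two of these requirements concrete, the following is a minimal sketch of a permission check paired with an audit log. It is an illustration under stated assumptions, not a reference to any particular product: the `AgentIdentity` and `AuditLog` classes, the `authorize` function, and the agent and action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record for one autonomous agent."""
    agent_id: str
    allowed_actions: frozenset


@dataclass
class AuditLog:
    """Append-only record of every action an agent attempts."""
    entries: list = field(default_factory=list)

    def record(self, agent_id, action, allowed):
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "allowed": allowed,
        })


def authorize(agent, action, log):
    """Return True if the action is permitted; log the attempt either way."""
    allowed = action in agent.allowed_actions
    log.record(agent.agent_id, action, allowed)
    return allowed


log = AuditLog()
# Hypothetical agent with two permitted actions.
support_agent = AgentIdentity(
    "support-agent-01", frozenset({"read_ticket", "draft_reply"})
)

print(authorize(support_agent, "draft_reply", log))   # a permitted action
print(authorize(support_agent, "issue_refund", log))  # outside the agent's permissions
print(len(log.entries))                               # both attempts were logged
```

Logging denied attempts as well as permitted ones is a deliberate choice in this sketch: repeated denials are one simple signal a monitoring system could use to flag anomalous agent behavior.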

Physical AI

Physical AI applications involve AI systems interacting with the physical world through robotics, autonomous vehicles, drones, and other embodied systems. Common use cases include robotic picking arms in warehouses, autonomous forklifts in manufacturing facilities, drone-based inspection systems, and autonomous delivery vehicles.

Adoption of physical AI is especially advanced in manufacturing, logistics, and defense sectors where the technology is already reshaping operations. Physical AI deployments face unique challenges including safety certification requirements, integration with physical infrastructure, real-time performance requirements, and environmental variability. These systems require robust edge computing capabilities, as cloud connectivity cannot always be guaranteed in operational environments.

Critical Capabilities for Production AI

Successfully transitioning from pilot to production requires organizations to develop several critical technical and organizational capabilities. These capabilities form the foundation upon which production AI systems operate reliably at scale.

MLOps and Continuous Integration/Continuous Deployment

Production AI systems require robust MLOps practices to manage the complete lifecycle of AI models. This includes automated model training pipelines, version control for models and datasets, automated testing and validation, deployment automation, performance monitoring, and model retraining workflows.

Unlike traditional software, AI models can degrade over time as data distributions change, a phenomenon known as model drift. Production systems must continuously monitor for drift and trigger retraining when performance degrades. This requires sophisticated data engineering capabilities to maintain training datasets, automated evaluation frameworks to assess model performance, and orchestration systems to manage the retraining and redeployment process.
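One common way to quantify this kind of drift for a single numeric feature is the population stability index (PSI), which compares how a baseline sample and a live sample are distributed across fixed bins. The sketch below is a simplified illustration rather than a production monitor. The bin edges, the sample values, and the 0.2 threshold (a frequently cited rule of thumb) are assumptions chosen for the example, and the function names are hypothetical.

```python
import math


def bin_fractions(values, edges):
    """Fraction of values in each bin [edges[i], edges[i+1]); last bin is closed."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    total = len(values)
    # A small floor avoids log(0) and division by zero for empty bins.
    return [max(c / total, 1e-6) for c in counts]


def population_stability_index(baseline, live, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(live, edges)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def needs_retraining(baseline, live, edges, threshold=0.2):
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return population_stability_index(baseline, live, edges) > threshold


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]          # spread evenly
live_shifted = [0.8, 0.85, 0.9, 0.95, 0.9, 0.8, 0.7, 0.6]    # mass moved upward

print(needs_retraining(baseline, baseline, edges))      # identical samples: no drift
print(needs_retraining(baseline, live_shifted, edges))  # shifted sample: drift flagged
```

In a real pipeline a check like this would typically run on a schedule per feature and per model output, with the result feeding the orchestration system that manages retraining.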

Data Infrastructure and Engineering

Legacy data architectures cannot power real-time, autonomous AI systems. Organizations need to modernize infrastructure to create what industry analysts call a “living AI backbone,” an organization-wide, real-time system that adapts dynamically to business and regulatory changes.

This modernization involves implementing modular, cloud-native platforms that securely connect, govern, and integrate all data types. Organizations must break down data silos through domain-owned data products while embedding privacy, sovereignty, and security by design. Enterprise standards for data quality, interoperability, and lineage must be consistently enforced across the organization.

More than 80% of enterprises currently lack AI-ready data, defined as data that is trustworthy, governed, contextualized, and aligned to specific use cases. This readiness gap has become both the leading cause of AI project failures and the biggest driver of new spending in data infrastructure and engineering capabilities.

Governance and Risk Management

As AI moves from experimentation to deployment, governance becomes the difference between scaling successfully and stalling out. The dramatic increase in organizations acknowledging AI risks, from 12% of S&P 500 companies in 2023 to 72% in 2025, reflects growing awareness of the critical importance of AI governance.

Enterprises where senior leadership actively shapes AI governance achieve significantly greater business value than those delegating governance to technical teams alone. Effective governance requires clear accountability structures, defined policies for acceptable AI use, bias detection and mitigation processes, transparency mechanisms for AI decision-making, compliance frameworks addressing regulatory requirements, and incident response procedures for AI failures.

Organizations must also implement robust identity and access management for AI agents themselves. As autonomous agents proliferate across enterprise systems, the challenge is no longer just deploying models but managing identity for these new users operating across systems. Enterprises must answer critical questions about every AI agent: Do we know every AI agent that exists? Do we understand what it is accessing? Are we confident in what it is doing when it accesses systems?
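The first two of these questions can be approached by reconciling an agent registry against observed access events. The sketch below assumes a hypothetical registry that maps each known agent to the systems it is approved to reach, plus a hypothetical list of access events; all names and data are illustrative. It does not address the third question, which requires inspecting what an agent actually did.

```python
# Hypothetical registry: agent id -> systems the agent is approved to access.
REGISTERED_AGENTS = {
    "invoice-agent": {"erp", "email"},
    "support-agent": {"ticketing"},
}

# Hypothetical access events as (agent id, system accessed).
ACCESS_EVENTS = [
    ("invoice-agent", "erp"),
    ("support-agent", "crm"),
    ("shadow-agent", "hr"),
]


def review_access(registry, events):
    """Return agents missing from the registry and accesses outside approved scope."""
    unknown_agents = set()
    out_of_scope = []
    for agent_id, system in events:
        if agent_id not in registry:
            unknown_agents.add(agent_id)        # an agent nobody registered
        elif system not in registry[agent_id]:
            out_of_scope.append((agent_id, system))  # known agent, unapproved system
    return unknown_agents, out_of_scope


unknown, violations = review_access(REGISTERED_AGENTS, ACCESS_EVENTS)
print(unknown)     # agents that exist but are not in the registry
print(violations)  # registered agents reaching systems beyond their scope
```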

Change Management and Organizational Readiness

Technical capabilities alone are insufficient for successful production AI deployment. Organizations must invest heavily in change management to ensure teams trust and understand new AI capabilities. This involves comprehensive training programs educating employees on working alongside AI systems, clear communication about how AI will impact roles and responsibilities, feedback mechanisms allowing employees to report issues or suggest improvements, and success metrics demonstrating AI value to stakeholders.

A lack of skilled talent has become one of the biggest barriers to AI adoption. Organizations are responding by developing internal AI literacy programs, hiring specialists in AI operations and governance, partnering with external experts for complex deployments, and creating centers of excellence to share AI best practices across the organization.

The Emerging AI Infrastructure Ecosystem

The maturation of enterprise AI has spawned an entire ecosystem of infrastructure platforms and tools designed to bridge the gap between AI capabilities and production deployment. Understanding this ecosystem is critical for organizations planning their AI strategy.

AI Gateway Platforms

With 40% of enterprise applications now integrated with task-specific AI agents, organizations can no longer afford makeshift solutions for managing multi-provider AI infrastructure. Enterprise AI gateways have emerged as mission-critical infrastructure managing access to multiple LLM providers, preventing vendor lock-in, ensuring high availability through automatic failovers, and providing governance controls required for regulated industries.

Leading AI gateway platforms in 2026 deliver far more than simple API routing. They provide unified interfaces supporting multiple AI providers, dynamic provider configuration and failover capabilities, semantic caching to reduce costs and latency, hierarchical budget management and cost controls, comprehensive security and compliance features, and detailed observability into AI system performance.
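Three of these features (a unified interface, failover, and budget control) can be illustrated with a small sketch. This is a simplified model under stated assumptions and not the API of any real gateway product: the `Gateway` class, the stub providers, and the per-call costs are hypothetical, and the cache shown is an exact-match cache rather than the semantic caching described above.

```python
class ProviderError(Exception):
    """Raised by a provider callable when a request fails."""


class Gateway:
    """Hypothetical gateway: ordered failover, a spend cap, and a simple cache."""

    def __init__(self, providers, budget_cents):
        # providers: list of (name, callable, cost_cents) in priority order
        self.providers = providers
        self.remaining_cents = budget_cents
        self.cache = {}

    def complete(self, prompt):
        # Exact-match cache; a semantic cache would also match similar prompts.
        if prompt in self.cache:
            return self.cache[prompt]
        for name, call, cost_cents in self.providers:
            if cost_cents > self.remaining_cents:
                continue  # skip providers the remaining budget cannot cover
            try:
                text = call(prompt)
            except ProviderError:
                continue  # fail over to the next provider
            self.remaining_cents -= cost_cents
            self.cache[prompt] = (name, text)
            return self.cache[prompt]
        raise RuntimeError("no provider available within budget")


def flaky_primary(prompt):
    """Stub provider that always fails, to exercise failover."""
    raise ProviderError("primary unavailable")


def stable_secondary(prompt):
    """Stub provider that echoes the prompt."""
    return f"echo: {prompt}"


gateway = Gateway(
    [("primary", flaky_primary, 2), ("secondary", stable_secondary, 1)],
    budget_cents=5,
)
print(gateway.complete("hello"))  # served by the secondary after failover
print(gateway.complete("hello"))  # served from the cache, no extra spend
print(gateway.remaining_cents)    # only the one successful call was charged
```

Costs are tracked in integer cents to avoid floating-point rounding in the budget arithmetic, and failed calls are not charged; both are simplifying assumptions of this sketch.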

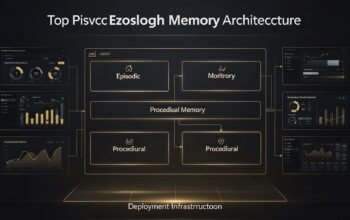

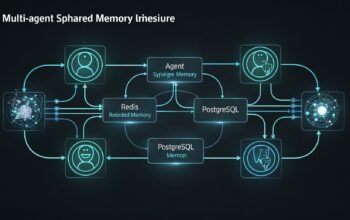

Agent Management Platforms

As organizations deploy increasing numbers of autonomous AI agents, dedicated platforms for building, deploying, and managing these agents have become essential. These platforms provide agent development frameworks and templates, integration capabilities with enterprise systems, orchestration for multi-agent workflows, monitoring and observability for agent behavior, governance controls defining agent permissions, and continuous improvement mechanisms incorporating feedback.

The announcement of OpenAI Frontier on February 5, 2026, represents a significant development in this category. Frontier provides an end-to-end platform for creating AI agents with shared business context, defined permissions, and continuous improvement capabilities. The platform supports agents from multiple vendors using open standards, reflecting the industry’s movement toward interoperable agent ecosystems.

Context Engineering Tools

As enterprises scale beyond simple chatbots to deploy sophisticated multi-agent systems, the engineering focus is shifting from crafting better prompts to architecting better context. Context engineering involves designing systems that enrich AI prompts with relevant internal organizational context, retrieve real-time business data when needed, and integrate with existing enterprise systems to perform actions.

Tools in this category increasingly use protocols like the Model Context Protocol to enable AI systems to access internal data sources, business applications, and operational workflows. This turns AI from static text generators into operational participants that query, validate, update, and orchestrate tasks based on live internal information.
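The core idea of enriching a prompt with relevant internal context can be sketched without any particular protocol. The example below does not use the Model Context Protocol or any real retrieval library. It assumes a hypothetical in-memory knowledge base and a deliberately naive word-overlap score, purely to show the retrieve-then-assemble pattern.

```python
def score(query, document):
    """Count words shared by the query and a document (case-insensitive)."""
    return len(set(query.lower().split()) & set(document.lower().split()))


def build_prompt(query, knowledge_base, top_k=2):
    """Select the most relevant documents and place them ahead of the question."""
    ranked = sorted(knowledge_base, key=lambda doc: score(query, doc), reverse=True)
    # Drop documents with no overlap so irrelevant context is not injected.
    relevant = [doc for doc in ranked[:top_k] if score(query, doc) > 0]
    context = "\n".join(f"- {doc}" for doc in relevant)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical internal knowledge snippets, invented for illustration.
KNOWLEDGE_BASE = [
    "Refund requests are approved within 5 business days.",
    "The warehouse in Leeds ships orders on weekdays.",
    "Enterprise customers have a dedicated support channel.",
]

prompt = build_prompt("How long do refund requests take?", KNOWLEDGE_BASE)
print(prompt)
```

A production system would replace the word-overlap score with a proper retrieval mechanism and would fetch live data from business systems, but the shape of the step stays the same: select context first, then assemble the prompt.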

Strategic Imperatives for 2026

Based on the current state of enterprise AI and the factors driving production deployment, several strategic imperatives emerge for organizations in 2026.

Prioritize Integration Over Innovation

Organizations must resist the temptation to continuously chase the latest AI capabilities and instead focus on deeply integrating existing AI systems into core business processes. This means investing in the data infrastructure, MLOps capabilities, and change management required to operationalize AI at scale rather than launching additional pilots.

Establish Clear ROI Frameworks

Production AI deployments require clear metrics demonstrating business value. Organizations should establish frameworks measuring efficiency gains through time savings or cost reduction, revenue impact from new capabilities or improved customer experience, risk reduction from better decision-making or compliance, and competitive advantage from faster innovation or superior products.

These metrics must be tied to specific business processes and tracked continuously to justify ongoing investment and guide prioritization decisions.
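As a simple illustration, the quantifiable categories above can be combined into a basic return-on-investment figure, computed as (annual benefit − annual cost) / annual cost. The sketch below is one possible framing, not a standard: the `AiInitiative` class and all figures are hypothetical, and competitive advantage is left out because it rarely reduces to a single number.

```python
from dataclasses import dataclass


@dataclass
class AiInitiative:
    """Hypothetical record of one AI deployment's annual costs and benefits."""
    name: str
    annual_cost: float
    efficiency_savings: float  # time savings or cost reduction
    revenue_impact: float      # new capabilities, improved customer experience
    risk_reduction: float      # avoided losses from better decisions or compliance

    def annual_benefit(self):
        return self.efficiency_savings + self.revenue_impact + self.risk_reduction

    def roi(self):
        """Return on investment as a fraction of annual cost."""
        return (self.annual_benefit() - self.annual_cost) / self.annual_cost


# Illustrative figures only.
claims_triage = AiInitiative(
    name="claims-triage",
    annual_cost=200_000,
    efficiency_savings=150_000,
    revenue_impact=100_000,
    risk_reduction=50_000,
)

print(claims_triage.annual_benefit())  # 300000
print(claims_triage.roi())             # 0.5, i.e. a 50% return
```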

Invest in Data Readiness

Given that more than 80% of enterprises lack AI-ready data, organizations must prioritize data infrastructure modernization. This involves implementing automated pipeline orchestration and deployment, establishing in-line governance enforcement using policy-as-code, creating continuous observability and quality checks, implementing automated validation and regression testing, and establishing environment management across development, test, and production stages.
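Policy-as-code means expressing governance rules as executable checks that run inside the pipeline rather than as documents reviewed by hand. The sketch below shows the idea with a promotion gate over dataset metadata. The policies, the metadata fields, and the 5% null-rate limit are all hypothetical choices for illustration and do not reflect any specific governance tool.

```python
# Each policy is a (description, check) pair; a check returns True when satisfied.
POLICIES = [
    ("has an owner", lambda d: bool(d.get("owner"))),
    ("PII columns are masked",
     lambda d: not d.get("contains_pii") or d.get("pii_masked", False)),
    ("null rate under 5%", lambda d: d.get("null_rate", 1.0) < 0.05),
]


def evaluate(dataset):
    """Return the descriptions of every policy the dataset violates."""
    return [name for name, check in POLICIES if not check(dataset)]


def can_promote(dataset):
    """A dataset may move to the next environment only with zero violations."""
    return not evaluate(dataset)


# Hypothetical dataset metadata records.
orders = {"owner": "sales-data", "contains_pii": True,
          "pii_masked": True, "null_rate": 0.01}
clicks = {"owner": "", "contains_pii": True, "null_rate": 0.2}

print(can_promote(orders))  # passes every policy
print(evaluate(clicks))     # lists each violated policy
```

Because the rules live in code, they can be versioned, tested, and enforced identically across development, test, and production stages.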

Build Governance into the Foundation

Organizations should establish governance frameworks before scaling AI deployments rather than retrofitting governance onto existing systems. This includes defining clear accountability for AI systems, establishing policies for acceptable AI use and limitations, implementing technical controls preventing unauthorized actions, creating transparency mechanisms for AI decisions, and developing incident response capabilities for AI failures.

Adopt Multi-Cloud and Multi-Provider Strategies

To maintain flexibility and avoid vendor lock-in, organizations should architect AI systems to operate across multiple cloud providers and AI model providers. This requires using standardized interfaces and protocols, implementing abstraction layers that allow provider switching, establishing failover mechanisms for high availability, and regularly testing the ability to migrate between providers.

Develop Internal AI Expertise

Even organizations primarily purchasing AI solutions need substantial internal expertise. Companies should invest in training programs building AI literacy across the organization, hiring specialists in AI operations, governance, and integration, creating centers of excellence sharing best practices, and establishing partnerships with external experts for complex challenges.

Looking Ahead: The Road from Pilots to Production

The enterprise AI landscape in 2026 is characterized by a fundamental shift from experimentation to operationalization. Organizations are learning that the path from pilot to production requires far more than technical capability. It demands robust infrastructure, comprehensive governance, organizational change management, and strategic discipline to resist the constant allure of new AI capabilities in favor of deeply integrating existing ones.

The case studies presented in this article demonstrate that breaking through pilot purgatory is both possible and immensely valuable. Organizations achieving production deployment are seeing order-of-magnitude improvements in cycle times, significant increases in employee productivity, and meaningful revenue impact measured in hundreds of millions or billions of dollars.

However, these successes require sustained investment in the foundational capabilities that enable production AI. The dramatic shift toward purchasing AI solutions rather than building them internally reflects organizational learning about the true complexity of maintaining production systems. Yet this shift does not eliminate the need for internal expertise. Organizations must still build the data infrastructure, integration capabilities, governance frameworks, and operational processes that allow AI to deliver business value.

As we move deeper into 2026, the competitive gap between AI leaders and laggards will continue to widen. Organizations must make strategic choices about where and how to deploy AI, recognizing that production deployment is fundamentally different from experimentation. The “Year of Truth for AI” will separate organizations that successfully operationalize AI from those that remain trapped in endless pilots.

In the subsequent articles in this series, we will dive deep into the technical infrastructure enabling production AI, explore specific implementation patterns for agentic AI systems, examine governance and risk management frameworks, analyze data readiness requirements, and present detailed case studies with quantified business outcomes. These articles will provide the technical depth and practical guidance needed to navigate the journey from pilots to production successfully.

References

- TechRepublic – AI Adoption Trends in the Enterprise 2026

- Deloitte – The State of AI in the Enterprise 2026 Report

- Constellation Research – Enterprise Technology 2026: 15 AI Trends to Watch

- ALM Corp – OpenAI Frontier Platform: Complete Guide to Enterprise AI Agent Deployment

- Maxim AI – Top 5 Enterprise AI Gateways in 2026

- Solutions Review – AI and Enterprise Technology Predictions from Industry Experts for 2026

- VentureBeat – Four AI Research Trends Enterprise Teams Should Watch in 2026

- OpenAI – Introducing OpenAI Frontier

- IBM Think – The Trends That Will Shape AI and Tech in 2026

- Capgemini – Top Tech Trends 2026