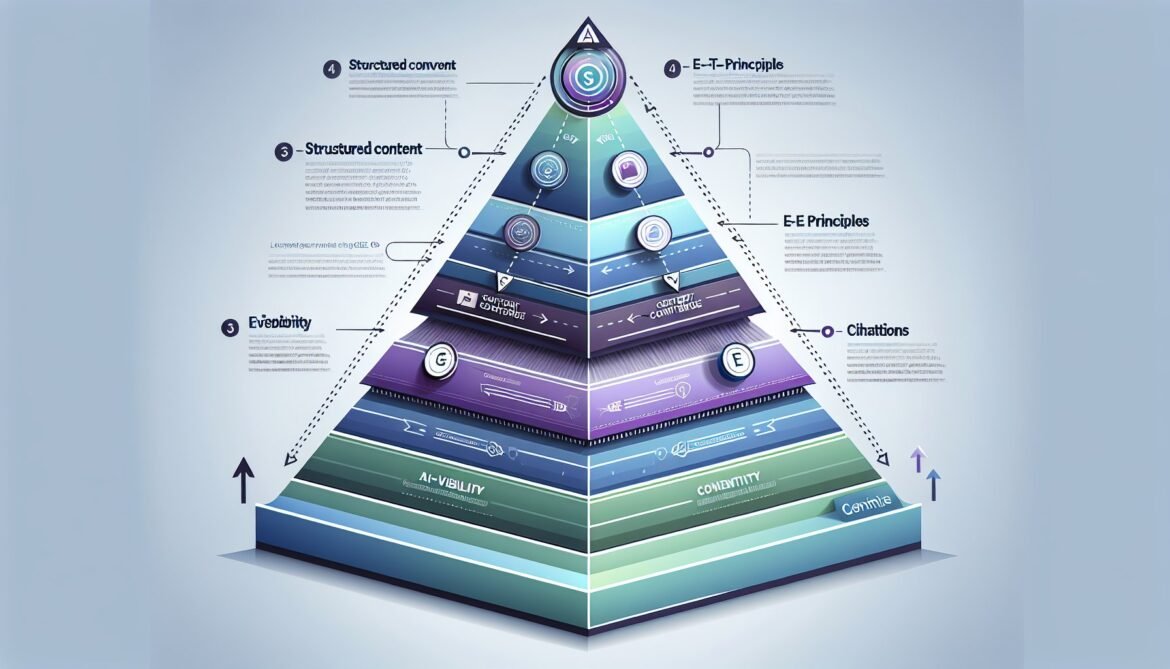

In Parts 1 and 2, we established the market imperative for GEO and built the technical foundation with schema markup. Now we address the most critical element: content strategy that drives AI citations. Schema provides the scaffolding, but content quality and structure determine whether AI engines trust and cite your work.

The fundamental challenge is creating content that satisfies two distinct audiences simultaneously: human readers who expect engaging, valuable information, and AI systems that prioritize semantic clarity, structural precision, and authoritative signals. This dual optimization requires systematic approaches that differ significantly from traditional content marketing.

The Content Structure AI Engines Prefer

Research from Chris Green’s 2025 experiments reveals definitive patterns in what AI engines cite most frequently. His controlled tests across ChatGPT, Perplexity, and Google AI Overviews demonstrated that Q&A format provides the highest semantic relevance to user queries, followed closely by structured content with clear headings and lists. Dense prose performed worst across all platforms.

Pages using clear H2/H3/bullet point structures are 40% more likely to be cited by AI engines than those with paragraph-heavy layouts. This preference stems from how Retrieval-Augmented Generation (RAG) systems chunk and process content during the extraction phase.
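To make the chunking idea concrete, here is a minimal sketch of heading-based chunking. It assumes the content is available as markdown with `#`-style headings; the function name `chunk_by_headings` is hypothetical, and real RAG pipelines use their own (often token-based) splitters, so treat this as an illustration rather than a description of any specific engine.

```python
import re
from typing import Dict, List

# Matches markdown headings of level 1-3, e.g. "## What is X?"
HEADING_RE = re.compile(r"^(#{1,3})\s+(.*)$")


def chunk_by_headings(markdown_text: str) -> List[Dict[str, str]]:
    """Split markdown into one chunk per H1-H3 heading (illustrative only)."""
    chunks = []
    heading, body = "", []
    for line in markdown_text.splitlines():
        match = HEADING_RE.match(line)
        if match:
            # A new heading closes the previous chunk, if it has any content.
            if heading or body:
                chunks.append({"heading": heading, "text": " ".join(body)})
            heading, body = match.group(2).strip(), []
        elif line.strip():
            body.append(line.strip())
    if heading or body:
        chunks.append({"heading": heading, "text": " ".join(body)})
    return chunks
```

Each returned chunk pairs a heading with the text beneath it, which is why a page with clear H2/H3 boundaries yields cleaner, more self-contained units than a page of unbroken prose.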

The Answer Block Framework

Position a concise 40-60 word answer block immediately after your title, before the first H2 heading. This gives AI systems an immediately extractable summary at the top of the page, where a retrieval system encounters it first. The pattern looks like this:

Structure Pattern for AI-Optimized Content:

[H1 Title]

[40-60 word answer block summarizing core value]

[H2 What is X?]

[60-100 word definition with specific details]

[H2 Why Does X Matter?]

[Structured explanation with bullet points]

- Key benefit 1 with quantified impact

- Key benefit 2 with real-world example

- Key benefit 3 with authoritative citation

[H2 How to Implement X]

[Step-by-step with H3 subheadings]

[H3 Step 1: Specific Action]

[60-120 word explanation]

[H3 Step 2: Next Action]

[60-120 word explanation]

[H2 Common Challenges and Solutions]

[Q&A format or structured list]

[H2 Real-World Examples]

[Case studies with specific metrics]

This structure maps directly to how AI chunking algorithms parse content. Each section becomes a discrete, citable unit with clear topical boundaries.
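The answer block rule is easy to check automatically. The sketch below assumes the draft is markdown with a single `# ` title and `## ` section headings; the helper names `find_answer_block` and `answer_block_ok` are hypothetical, and the 40-60 word bounds come from the framework above.

```python
from typing import Optional


def find_answer_block(markdown_text: str) -> Optional[str]:
    """Return the text between the H1 title and the first H2, or None."""
    collected = []
    seen_h1 = False
    for line in markdown_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("# ") and not seen_h1:
            seen_h1 = True
            continue
        if seen_h1 and stripped.startswith("## "):
            break  # the answer block must end before the first H2
        if seen_h1 and stripped:
            collected.append(stripped)
    return " ".join(collected) if collected else None


def answer_block_ok(markdown_text: str, min_words: int = 40, max_words: int = 60) -> bool:
    """True when an answer block exists and its word count is in range."""
    block = find_answer_block(markdown_text)
    if block is None:
        return False
    return min_words <= len(block.split()) <= max_words
```

Running `answer_block_ok(draft)` as part of a pre-publish check is one way to catch pages where the summary has drifted below the first H2.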

Optimal Paragraph Length and Sentence Structure

RAG systems extract passages in specific token windows. Paragraphs of 60-120 words (3-5 sentences) align with these extraction windows, maximizing citation probability. Sentences should average 15-20 words, using simple, direct language that AI models parse unambiguously.
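These length targets can be measured rather than eyeballed. The following sketch uses a naive sentence splitter (it breaks on `.`, `!`, or `?` followed by whitespace, so abbreviations such as "e.g." will be miscounted); the function name `paragraph_stats` and the returned keys are assumptions made for illustration.

```python
import re


def paragraph_stats(paragraph: str) -> dict:
    """Report word and sentence counts against the 60-120 / 15-20 targets."""
    words = paragraph.split()
    # Naive split: sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    avg = len(words) / len(sentences) if sentences else 0.0
    return {
        "word_count": len(words),
        "sentence_count": len(sentences),
        "avg_sentence_words": round(avg, 1),
        "within_word_range": 60 <= len(words) <= 120,
        "within_sentence_range": 15 <= avg <= 20,
    }
```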

Compare these approaches:

| AI-Unfriendly | AI-Optimized |
|---|---|
| Lengthy paragraphs (200+ words) with complex compound-complex sentences that embed multiple subordinate clauses, creating ambiguous semantic relationships that confuse extraction algorithms. | OAuth 2.0 with PKCE provides enhanced security for mobile applications. PKCE prevents authorization code interception by using cryptographic verification. The client generates a random code verifier and creates a SHA-256 challenge. This eliminates the need for client secrets in public clients. |
| “Utilize cutting-edge methodologies to facilitate synergistic optimization across multifaceted operational paradigms.” | “Use modern techniques to improve performance across multiple systems.” |
| Generic statements without specifics: “Many companies see improved results.” | Quantified claims with attribution: “According to Gartner’s 2025 study, 73% of enterprises implementing PKCE reduced security incidents by 45%.” |

The Q&A Format Advantage

Question-and-answer formatting delivers the highest citation rates because it directly matches how users query AI systems. When someone asks “What is the difference between OAuth 2.0 and OAuth 2.0 with PKCE?”, content structured as explicit Q&A provides semantic alignment that other formats cannot match.

Implementation strategies:

- Research Actual Questions: Mine Reddit threads, Stack Overflow, Google autocomplete, and “People Also Ask” sections for exact phrasing users employ

- Structure Headers as Questions: Convert generic headers like “Implementation Details” to specific questions like “How do I generate a PKCE code verifier in Node.js?”

- Provide Direct Answers First: Lead each section with the concise answer (2-4 sentences), then elaborate with supporting details

- Implement FAQ Schema: Combine Q&A content structure with FAQPage schema markup for maximum visibility
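As an illustration of the last point, the sketch below turns question-and-answer pairs into FAQPage JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The function name `build_faq_jsonld` is hypothetical, and the output still needs to be embedded in a `<script type="application/ld+json">` tag and validated with your usual tooling.

```python
import json
from typing import List, Tuple


def build_faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)
```

Generating the markup from the same Q&A pairs that appear on the page keeps the visible content and the schema in sync.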

E-E-A-T: The Foundation of AI Trust

Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) extends directly to GEO. AI systems favor content demonstrating these signals because they reduce hallucination risk and improve answer quality.

Experience Signals

Demonstrate first-hand experience through:

- Specific Implementation Details: Include actual configuration files, real error messages encountered, and troubleshooting steps from production deployments

- Quantified Outcomes: “Implementing PKCE reduced our authentication-related support tickets by 67% over six months” carries more weight than “PKCE improves security”

- Screenshots and Diagrams: Visual proof of hands-on implementation, properly captioned for AI interpretation

- Version-Specific Details: Mention exact library versions, API versions, and compatibility constraints encountered

Expertise Signals

Establish expertise through:

- Author Credentials: Transparent bylines with job titles, certifications, and relevant background. “Sarah Chen, Senior Security Architect with 12 years implementing OAuth for Fortune 500 companies” establishes immediate credibility

- Technical Depth: Explain not just what to do, but why it works. Cover edge cases, performance implications, and security considerations

- Industry-Specific Language: Use proper technical terminology while defining terms for broader audiences

- Comparative Analysis: Discuss tradeoffs, alternatives, and when different approaches are appropriate

Authoritativeness Signals

Build authority through:

- Citations to Authoritative Sources: Reference RFCs, official specifications, peer-reviewed research, and reputable industry sources

- External Validation: Mentions in industry publications, conference presentations, and third-party citations of your work

- Organizational Credentials: Company background, client lists (when permitted), and industry recognition

- Consistent Topic Focus: Demonstrable pattern of publishing on related topics over time

Trustworthiness Signals

- Transparent Methodology: Explain how tests were conducted, sample sizes, and limitations

- Regular Updates: Maintain content freshness with “Last updated” timestamps and changelog sections

- Balanced Perspective: Acknowledge limitations, discuss failure modes, and present alternative viewpoints

- Security and Privacy: Proper HTTPS, clear privacy policies, and secure handling of user data
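Several of these signals (author byline, job title, publication and update dates) can also be stated in machine-readable form. Here is a minimal sketch that builds Article JSON-LD with a `Person` author, using the schema.org properties `headline`, `author`, `jobTitle`, `datePublished`, and `dateModified`. The function name `build_article_jsonld` and the sample values in the usage line are illustrative assumptions.

```python
import json


def build_article_jsonld(headline: str, author_name: str, job_title: str,
                         date_published: str, date_modified: str) -> str:
    """Serialize basic authorship and date signals as Article JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
        },
        # ISO 8601 dates, e.g. "2025-02-15"
        "datePublished": date_published,
        "dateModified": date_modified,
    }
    return json.dumps(data, indent=2)


# Example call with placeholder values
markup = build_article_jsonld(
    "Implementing OAuth 2.0 with PKCE", "Sarah Chen",
    "Senior Security Architect", "2024-11-03", "2025-02-15",
)
```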

The Power of Precise Statistics

Data-driven content significantly increases AI citation probability. Inline citations have been shown to increase AI snippet visibility by 132%. However, the quality and specificity of statistics matter enormously.

Effective Statistical Claims

// Weak Statistical Claim

"Many organizations implement OAuth 2.0."

// Strong Statistical Claim with Citation

"According to Auth0's 2025 State of Secure Authentication Report,

73% of enterprises with mobile applications implemented OAuth 2.0

with PKCE between 2023-2025, representing a 156% increase from

2021-2022." [1]

// Weak Outcome Statement

"PKCE improves security."

// Strong Outcome with Quantification

"In a January 2025 survey of 150 NYC fintech founders, 68% reported

zero authorization code interception incidents after implementing

PKCE, compared to 23% before implementation." [2]

Notice the pattern: specific numbers, clear attribution, defined timeframes, and reputable sources. AI systems weight these signals heavily when selecting citations.
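A rough way to find weak claims in an existing draft is to flag sentences that contain a percentage but no visible attribution. The sketch below is a keyword heuristic only: the attribution patterns ("according to", "report", "survey", "study", or a bracketed reference like `[1]`) and the function name `classify_claim` are assumptions, and it will miss statistics that are not written as percentages.

```python
import re

# A percentage such as "73%" or "32.5%"
STAT_RE = re.compile(r"\d+(?:\.\d+)?%")
# Crude attribution cues; extend for your own house style.
ATTRIBUTION_RE = re.compile(
    r"according to|\breport\b|\bsurvey\b|\bstudy\b|\[\d+\]", re.IGNORECASE
)


def classify_claim(sentence: str) -> str:
    """Label a sentence by whether it is quantified and attributed."""
    has_stat = bool(STAT_RE.search(sentence))
    has_attribution = bool(ATTRIBUTION_RE.search(sentence))
    if has_stat and has_attribution:
        return "quantified and attributed"
    if has_stat:
        return "quantified but unattributed"
    return "unquantified"
```

Sentences labeled "quantified but unattributed" are the ones to fix first, since they make a specific claim without giving an AI system a source to trust.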

Citation Format and Attribution

Inline citations should include:

- Source organization (preferably authoritative: Gartner, Forrester, IEEE, government agencies)

- Report or study name

- Publication date (2025, Q1 2025, January 2025)

- Sample size when applicable

- Link to original source in references section

Earned Media: The External Authority Multiplier

AI systems exhibit systematic bias toward earned media (third-party, authoritative sources) over brand-owned content. Research shows that 32.5% of AI citations come from comparison articles, while Wikipedia accounts for 47.9% of ChatGPT’s factual citations.

This creates a strategic imperative: your content alone, no matter how well-optimized, has limited citation potential. Building external mentions and references becomes critical.

Strategies for Earning External Citations

1. Original Research and Data

Publish proprietary surveys, benchmark studies, and industry research that others will reference. Example: “TechCorp’s 2025 API Gateway Performance Benchmark” becomes a citable source across the industry.

2. Expert Commentary and Quotes

Position subject matter experts for media quotes and industry commentary. Each mention in Forbes, TechCrunch, or industry publications strengthens AI perception of authority.

3. Comparison Content

Since comparison articles receive high citation rates, creating objective comparisons that include your solution alongside competitors can be effective. The key is genuine objectivity that provides value, not thinly-veiled product promotion.

4. Community Engagement

Reddit, Stack Overflow, and industry forums influence AI citations. Authentic participation (not spam) builds reputation and creates citation-worthy discussions. Perplexity shows particular preference for Reddit content.

5. Academic and Technical Contributions

White papers, conference presentations, and contributions to open-source projects establish technical credibility that AI systems recognize.

Content Freshness and Update Strategies

AI models update knowledge more frequently than search engines traditionally recrawl content. Maintaining freshness signals provides competitive advantage.

Systematic Update Framework

// Content Freshness Tier System

Tier 1: Critical Technical Content (Quarterly Updates)

- API documentation

- Security implementation guides

- Framework-specific tutorials

Updates: Every 90 days or when major version released

Tier 2: Industry Analysis (Semiannual Updates)

- Market trends

- Competitive comparisons

- Best practices guides

Updates: Every 6 months or significant industry shifts

Tier 3: Foundational Content (Annual Updates)

- Conceptual explanations

- Historical context

- Theoretical frameworks

Updates: Annually or when paradigm shifts occur

Update Checklist:

□ Verify all statistics and citations remain current

□ Test all code examples with latest library versions

□ Update screenshots and diagrams

□ Revise dateModified in Article schema

□ Add "Updated [Date]" notation prominently

□ Document changes in changelog section

Changelog Implementation

Include explicit update tracking:

## Update History

**February 15, 2025**: Updated OAuth 2.0 PKCE implementation

examples to reflect changes in Node.js 22 crypto module. Added

performance benchmarks from production deployment. Revised

security considerations based on RFC 8252 amendments.

**November 3, 2024**: Initial publication with Node.js 18 examples

and basic implementation guide.

This transparency signals active maintenance and builds trust with both human readers and AI systems.
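The tier system lends itself to a simple scheduling check. The sketch below maps each tier to a review interval in days (90 for quarterly, roughly 182 for twice a year, 365 for annual) and reports whether a page is due. The names `UPDATE_INTERVAL_DAYS`, `next_review_date`, and `is_due` are hypothetical, and the check covers only the time-based trigger, not events such as a major version release.

```python
from datetime import date, timedelta

# Review interval per tier, in days (approximations of the tiers above)
UPDATE_INTERVAL_DAYS = {1: 90, 2: 182, 3: 365}


def next_review_date(last_modified: date, tier: int) -> date:
    """Date on which content of the given tier should next be reviewed."""
    return last_modified + timedelta(days=UPDATE_INTERVAL_DAYS[tier])


def is_due(last_modified: date, tier: int, today: date) -> bool:
    """True when the review date has been reached or passed."""
    return today >= next_review_date(last_modified, tier)
```

Feeding each page's last-modified date into `is_due` gives a review queue that can be regenerated whenever the content inventory changes.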

Balancing Human Readability with Machine Comprehension

The challenge of dual optimization requires systematic approaches that satisfy both audiences without compromising either.

Writing Techniques That Serve Both

Use Conversational Directness

Write as you would speak, using natural language patterns that AI models process effectively while remaining engaging for readers. Say “buy” instead of “make a purchase,” and “use” instead of “utilize.”

Employ Strategic Repetition

Vary terminology naturally while reinforcing core concepts. When discussing “OAuth 2.0 with PKCE,” also use variations like “PKCE extension,” “Proof Key for Code Exchange,” and “enhanced OAuth flow.” This serves AI’s semantic understanding while avoiding awkward keyword stuffing.

Implement Progressive Disclosure

Lead with concise summaries for AI extraction, then provide detailed explanations for human comprehension:

[H2] What is OAuth 2.0 PKCE?

[Concise Definition - AI Extraction Target]

OAuth 2.0 with PKCE (Proof Key for Code Exchange) is a security

extension that prevents authorization code interception attacks in

public clients like mobile and single-page applications.

[Detailed Explanation - Human Context]

Traditional OAuth 2.0 relies on client secrets to verify the identity

of applications requesting access tokens. However, mobile applications

and browser-based single-page apps cannot securely store secrets

because users can inspect the application code. This creates a

vulnerability where malicious actors might intercept authorization

codes and exchange them for access tokens.

PKCE solves this by introducing a cryptographic challenge-response

mechanism. The client generates a random "code verifier" and

mathematically transforms it into a "code challenge" using SHA-256

hashing. The authorization server validates that the client

presenting the authorization code possesses the original verifier,

proving it's the legitimate recipient.

Visual Content Optimization

Images and diagrams enhance human understanding while requiring special handling for AI comprehension:

- Alt Text: Descriptive, specific alternative text that explains diagram content

- Captions: Explicit captions that AI can extract and attribute

- Surrounding Context: Textual explanation before and after images that AI can process

- Avoid Text in Images: Don’t embed critical information in image files that AI cannot extract

- Use HTML Tables: Instead of image-based tables, create real HTML tables that AI can parse and reference
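Part of this checklist can be automated. The sketch below uses Python's standard `html.parser` module to list images whose `alt` attribute is missing or empty and to count real `<table>` elements. The class name `ImageAltAuditor` is hypothetical, and the check says nothing about whether the alt text is actually descriptive.

```python
from html.parser import HTMLParser


class ImageAltAuditor(HTMLParser):
    """Collect images without alt text and count HTML tables."""

    def __init__(self):
        super().__init__()
        self.image_count = 0
        self.table_count = 0
        self.missing_alt = []  # src values of images lacking alt text

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "img":
            self.image_count += 1
            # Attribute values may be None or empty; both count as missing.
            alt = (attr_map.get("alt") or "").strip()
            if not alt:
                self.missing_alt.append(attr_map.get("src") or "(no src)")
        elif tag == "table":
            self.table_count += 1


auditor = ImageAltAuditor()
auditor.feed('<img src="flow.png" alt="PKCE authorization flow"><img src="chart.png">')
```

After the `feed` call, `auditor.missing_alt` holds the sources that still need alternative text.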

Industry-Specific Content Strategies

Different verticals benefit from tailored approaches:

Technical Documentation and Developer Content

- Comprehensive code examples in multiple programming languages

- Explicit prerequisites and dependency versions

- Troubleshooting sections addressing common errors

- API reference documentation with request/response examples

- Integration guides with popular frameworks

B2B SaaS and Enterprise Software

- Use case documentation with specific industry examples

- ROI calculators and quantified business outcomes

- Compliance and security certifications prominently featured

- Comparison matrices with transparent competitive analysis

- Integration capabilities with common enterprise platforms

Healthcare and Financial Services

- Citations to peer-reviewed research and regulatory guidelines

- Clear disclaimers and authoritative source references

- Medical or financial credentials prominently displayed

- Regular updates reflecting regulatory changes

- Evidence-based recommendations with cited studies

Content Audit and Optimization Workflow

Systematic content improvement requires structured processes:

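# GEO Content Audit Framework (Python Implementation)
# The checks are simple heuristics and the scoring weights are illustrative.

import re
from datetime import datetime
from html.parser import HTMLParser
from typing import Dict, List, Optional

import requests


class _BlockCollector(HTMLParser):
    """Collect headings and paragraphs in document order, plus list/table counts."""

    def __init__(self):
        super().__init__()
        self.blocks = []  # (tag, text) pairs for h1-h3 and p elements
        self.counts = {'ul': 0, 'ol': 0, 'table': 0}
        self._open_tag = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in ('h1', 'h2', 'h3', 'p'):
            self._open_tag, self._buffer = tag, []
        elif tag in self.counts:
            self.counts[tag] += 1

    def handle_data(self, data):
        if self._open_tag:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == self._open_tag:
            text = ' '.join(''.join(self._buffer).split())
            self.blocks.append((tag, text))
            self._open_tag = None


class GEOContentAuditor:
    def __init__(self, content_url: str, html_content: Optional[str] = None):
        self.url = content_url
        self.html = html_content
        self.audit_results = {}

    def fetch(self) -> str:
        """Download the page only when no HTML was supplied"""
        if self.html is None:
            response = requests.get(self.url, timeout=10)
            response.raise_for_status()
            self.html = response.text
        return self.html

    @staticmethod
    def _parse(html_content: str) -> _BlockCollector:
        collector = _BlockCollector()
        collector.feed(html_content)
        return collector

    def audit_structure(self, html_content: str) -> Dict:
        """Analyze content structure for GEO optimization"""
        return {
            'has_answer_block': self.check_answer_block(html_content),
            'header_hierarchy': self.analyze_headers(html_content),
            'paragraph_length': self.analyze_paragraphs(html_content),
            'list_usage': self.check_lists(html_content),
            'table_structure': self.check_tables(html_content),
        }

    def check_answer_block(self, content: str) -> bool:
        """Verify that a 40-60 word paragraph directly follows the H1"""
        blocks = self._parse(content).blocks
        for i, (tag, _) in enumerate(blocks[:-1]):
            if tag == 'h1':
                next_tag, next_text = blocks[i + 1]
                return next_tag == 'p' and 40 <= len(next_text.split()) <= 60
        return False

    def analyze_headers(self, content: str) -> Dict:
        """Evaluate header structure and hierarchy"""
        headers = [(t, x) for t, x in self._parse(content).blocks if t != 'p']
        return {
            'h1_count': sum(t == 'h1' for t, _ in headers),  # Should be exactly 1
            'h2_count': sum(t == 'h2' for t, _ in headers),  # Multiple H2s expected
            'h3_count': sum(t == 'h3' for t, _ in headers),  # Detailed subsections
            'question_format': sum(x.endswith('?') for _, x in headers),
        }

    def analyze_paragraphs(self, content: str) -> Dict:
        """Check paragraph length optimization"""
        paragraphs = [x for t, x in self._parse(content).blocks if t == 'p' and x]
        lengths = [len(p.split()) for p in paragraphs]
        # Naive sentence split on ., !, or ? followed by whitespace
        sentences = [s for p in paragraphs for s in re.split(r'(?<=[.!?])\s+', p) if s]
        average = sum(lengths) / len(lengths) if lengths else 0
        return {
            'average_words': round(average, 1),
            'within_optimal_range': 60 <= average <= 120,  # 60-120 words
            'sentence_avg_words': round(sum(lengths) / len(sentences), 1) if sentences else 0,
        }

    def check_lists(self, content: str) -> int:
        """Count ordered and unordered lists"""
        counts = self._parse(content).counts
        return counts['ul'] + counts['ol']

    def check_tables(self, content: str) -> int:
        """Count real HTML tables"""
        return self._parse(content).counts['table']

    def check_eeat_signals(self, content: str) -> Dict:
        """Rough string-level heuristics for E-E-A-T signal presence"""
        return {
            'author_credentials': '"author"' in content or 'rel="author"' in content,
            'publication_date': 'datePublished' in content,
            'update_date': 'dateModified' in content,
            'external_citations': len(re.findall(r'<a\s[^>]*href="https?://', content)),
            'statistical_claims': len(re.findall(r'\d+(?:\.\d+)?%', content)),
        }

    def check_schema_implementation(self, content: str) -> Dict:
        """Detect JSON-LD types by name; this does not validate the markup"""
        def has_type(name: str) -> bool:
            return re.search(rf'"@type"\s*:\s*"{name}"', content) is not None

        return {
            'organization_schema': has_type('Organization'),
            'article_schema': has_type('Article'),
            'faq_schema': has_type('FAQPage'),
        }

    def run_audit(self) -> Dict:
        """Run every check and store the flattened results"""
        html = self.fetch()
        structure = self.audit_structure(html)
        self.audit_results = {
            **structure,
            'paragraph_avg_words': structure['paragraph_length']['average_words'],
            **self.check_eeat_signals(html),
            **self.check_schema_implementation(html),
        }
        return self.audit_results

    def generate_recommendations(self) -> List[str]:
        """Create prioritized optimization recommendations"""
        recommendations = []
        if not self.audit_results.get('has_answer_block'):
            recommendations.append("HIGH: Add 40-60 word answer block after title")
        if self.audit_results.get('paragraph_avg_words', 0) > 150:
            recommendations.append("MEDIUM: Reduce paragraph length to 60-120 words")
        if self.audit_results.get('external_citations', 0) < 5:
            recommendations.append("HIGH: Add authoritative citations and statistics")
        return recommendations

    @staticmethod
    def _percent(checks: List[bool]) -> int:
        """Share of passed checks on a 0-100 scale (equal weights, illustrative)"""
        return round(100 * sum(checks) / len(checks))

    def calculate_structure_score(self) -> int:
        r = self.audit_results
        return self._percent([
            r['has_answer_block'],
            r['header_hierarchy']['h1_count'] == 1,
            r['paragraph_length']['within_optimal_range'],
            r['list_usage'] > 0,
        ])

    def calculate_eeat_score(self) -> int:
        r = self.audit_results
        return self._percent([
            r['author_credentials'], r['publication_date'],
            r['update_date'], r['external_citations'] >= 5,
        ])

    def calculate_technical_score(self) -> int:
        r = self.audit_results
        return self._percent([
            r['organization_schema'], r['article_schema'], r['faq_schema'],
        ])

    def calculate_overall_score(self) -> int:
        return round((self.calculate_structure_score()
                      + self.calculate_eeat_score()
                      + self.calculate_technical_score()) / 3)

    def export_audit_report(self) -> Dict:
        """Export comprehensive audit results"""
        if not self.audit_results:
            self.run_audit()
        return {
            'url': self.url,
            'audit_date': datetime.now().isoformat(),
            'structure_score': self.calculate_structure_score(),
            'eeat_score': self.calculate_eeat_score(),
            'technical_score': self.calculate_technical_score(),
            'overall_geo_score': self.calculate_overall_score(),
            'recommendations': self.generate_recommendations(),
        }


# Usage: pass HTML directly, or omit html_content to fetch the URL with requests
sample_html = '<h1>OAuth Guide</h1><p>PKCE protects public clients.</p><h2>What is PKCE?</h2>'

auditor = GEOContentAuditor('https://example.com/oauth-guide', html_content=sample_html)

audit_results = auditor.export_audit_report()

print(f"GEO Score: {audit_results['overall_geo_score']}/100")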

print(f"Top Recommendations: {audit_results['recommendations'][:3]}")

Real-World Success Pattern: The Smart Rent Case Study

In 2025, Smart Rent implemented a comprehensive GEO strategy that delivered measurable results within 30 days:

- 200 new AI citations across ChatGPT, Perplexity, and Google AI Overviews

- 32.1% increase in organic search demo form submissions

- 345% surge in referral traffic from LLMs

Their approach combined:

- Content restructuring using Q&A formats for top-performing pages

- Addition of FAQ sections based on Reddit threads and user forums

- Implementation of comprehensive schema markup

- Regular content updates with explicit changelog documentation

- Strategic PR outreach to earn third-party citations

This integrated strategy demonstrates that GEO success requires simultaneous optimization across content structure, technical implementation, and external authority building.

Common Content Mistakes That Prevent Citations

- Generic, Thin Content: Surface-level overviews without specific implementation details, quantified outcomes, or expert insights fail to establish authority

- Buried Key Information: Placing critical answers deep within long articles prevents AI extraction. Answer the question immediately, then elaborate

- Lack of Structure: Dense paragraphs without headers, lists, or clear sections make content difficult for AI to chunk and extract

- Missing Citations and Sources: Claims without attribution reduce trustworthiness and citation probability

- Stale Content: Outdated statistics, deprecated code examples, and unchanged modification dates signal low quality

- Over-Optimization: Awkward keyword stuffing and unnatural language patterns that prioritize machines over humans backfire on both fronts

- Ignoring External Validation: Relying solely on owned content without building third-party mentions and citations

Next Steps: Multi-Platform Implementation

With content strategy established, Part 4 will explore platform-specific optimization tactics. ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude each exhibit distinct preferences and behaviors that require tailored approaches.

We’ll provide production-ready code examples for platform-specific optimization, cross-platform consistency strategies, and techniques for maximizing visibility across the entire AI ecosystem simultaneously.
