In the previous posts, we explored the Model Context Protocol fundamentals, examined Azure MCP Server’s capabilities, and built custom MCP servers on Azure. Now we advance into enterprise AI territory: integrating MCP with Azure AI Agent Service to create sophisticated multi-agent systems that can orchestrate complex business workflows.

Azure AI Agent Service represents Microsoft’s fully managed platform for building, deploying, and scaling enterprise-grade AI agents. When combined with the Model Context Protocol, it unlocks powerful capabilities for creating agents that can dynamically discover and use tools, collaborate with other agents, and maintain context across complex interactions.

Understanding Azure AI Agent Service

Azure AI Agent Service is part of the Azure AI Foundry ecosystem, designed to empower developers to build high-quality, extensible AI agents without managing underlying compute and storage infrastructure. The service provides a robust foundation for creating agents that can reason, plan, and execute tasks across your enterprise systems.

Core Capabilities

The service delivers several critical features for enterprise AI deployments:

- Fully Managed Infrastructure: No need to provision or manage compute resources. The platform handles scaling, availability, and performance automatically.

- Built-in Tool Integration: Native support for Bing Search, Azure AI Search, code interpreter, and now Model Context Protocol

- Thread Management: Automatic conversation thread handling with persistent storage for maintaining context across interactions

- Identity Integration: Deep integration with Microsoft Entra ID for enterprise-grade authentication and authorization

- Multi-Agent Orchestration: Support for Agent-to-Agent (A2A) communication enabling specialized agents to collaborate

MCP Support in Azure AI Foundry

In July 2025, Azure AI Foundry Agent Service added preview support for the Model Context Protocol, changing how developers connect agents to enterprise systems. With this integration, you no longer need a custom Azure Function or OpenAPI specification for every backend system you want to connect.

Think of MCP support as a USB-C connector for AI integrations. You build or deploy an MCP server once, and any Foundry agent can automatically discover and use its tools without additional custom code. The service acts as a first-class MCP client, handling tool discovery, invocation, and result processing seamlessly.
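To make "tool discovery" and "invocation" concrete, the sketch below builds the two JSON-RPC 2.0 requests an MCP client sends: `tools/list` to ask a server what it offers and `tools/call` to run one tool. The helper names and the `search_customers` arguments are illustrative assumptions. The Agent Service issues these requests on your behalf, so this only pictures what travels over the wire.

```python
import itertools
import json

# JSON-RPC requests carry an id so responses can be matched to them.
_request_ids = itertools.count(1)


def list_tools_request() -> dict:
    """Build the MCP request that asks a server which tools it exposes."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}


def call_tool_request(name: str, arguments: dict) -> dict:
    """Build the MCP request that invokes one tool with JSON arguments."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


discover = list_tools_request()
# Hypothetical tool name and arguments, for illustration only.
invoke = call_tool_request("search_customers", {"company": "Acme Corp"})

print(json.dumps(discover))
print(json.dumps(invoke, indent=2))
```

Because every MCP server answers the same two methods, an agent needs no server-specific glue code to use a new tool.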

Architecture Overview

graph TB
    subgraph "User Interface"
        A[User Query]
    end
    subgraph "Azure AI Foundry"
        B[Agent Service]
        C[GPT-4o Model]
        D[Thread Manager]
        E[Tool Orchestrator]
    end
    subgraph "MCP Integration Layer"
        F[MCP Client]
        G[Tool Discovery]
        H[Header Manager]
    end
    subgraph "MCP Servers"
        I[CRM MCP Server]
        J[Database MCP Server]
        K[GitHub MCP Server]
        L[Azure MCP Server]
    end
    subgraph "Backend Systems"
        M[CRM Database]
        N[PostgreSQL]
        O[GitHub API]
        P[Azure Services]
    end
    A --> B
    B --> C
    B --> D
    B --> E
    E --> F
    F --> G
    G --> I
    G --> J
    G --> K
    G --> L
    F --> H
    I --> M
    J --> N
    K --> O
    L --> P
    style B fill:#4A90E2
    style C fill:#7ED321
    style F fill:#F5A623
    style E fill:#BD10E0

Creating Your First MCP-Enabled Agent

Let’s build a complete enterprise agent that uses MCP servers for customer relationship management. This example demonstrates the full workflow from agent creation to tool invocation.

Prerequisites

- Azure AI Foundry project created in a supported region

- Azure OpenAI deployment with GPT-4o or GPT-4

- An MCP server deployed and accessible (use the CRM server from Part 3)

- Python 3.8 or later with azure-ai-projects and azure-identity packages
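Before running the examples, a quick preflight check can catch a missing setting early. The sketch below is a minimal one, assuming the environment variable names used later in this post (`PROJECT_CONNECTION_STRING` and the optional `CRM_API_KEY`). The helper names are hypothetical and not part of any Azure SDK.

```python
import os
import sys

# Names taken from the examples in this post; adjust to your own setup.
REQUIRED_ENV_VARS = ("PROJECT_CONNECTION_STRING",)
OPTIONAL_ENV_VARS = ("CRM_API_KEY",)
MINIMUM_PYTHON = (3, 8)


def missing_settings(env, names=REQUIRED_ENV_VARS):
    """Return the variable names that are unset or empty in env."""
    return [name for name in names if not env.get(name)]


def python_is_supported(version_info=sys.version_info, minimum=MINIMUM_PYTHON):
    """True when the interpreter's major.minor version meets the minimum."""
    return tuple(version_info[:2]) >= minimum


print("Python version OK:", python_is_supported())
print("Missing required settings:", missing_settings(os.environ))
print("Missing optional settings:", missing_settings(os.environ, OPTIONAL_ENV_VARS))
```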

Python Implementation

Install the required packages:

pip install azure-ai-projects azure-identity azure-ai-agents python-dotenv --upgrade

Create the agent with MCP tool integration:

import os
import time

from azure.ai.projects import AIProjectClient
from azure.identity import DefaultAzureCredential
from azure.ai.agents.models import (
    McpTool,
    MessageTextContent,
    ListSortOrder,
    RunStatus
)
from dotenv import load_dotenv

# Load environment variables
load_dotenv()

# Initialize the AI Project Client
project_client = AIProjectClient.from_connection_string(
    credential=DefaultAzureCredential(),
    conn_str=os.environ["PROJECT_CONNECTION_STRING"]
)

# Create an agent with MCP tool
agent = project_client.agents.create_agent(
    model="gpt-4o",
    name="CRM Assistant",
    instructions="""You are a helpful CRM assistant that helps users manage customer relationships.
You have access to tools for:
- Searching customers by name, email, or company
- Retrieving customer details
- Creating new customer records
- Updating existing customer information
Always provide clear, professional responses. When creating or updating customers,
confirm the details before proceeding. Use natural language to explain the results.""",
    tools=[
        McpTool(
            server_url="https://your-crm-mcp-server.azurecontainerapps.io",
            server_label="crm",
            allowed_tools=["search_customers", "get_customer", "create_customer", "update_customer"]
        )
    ]
)
print(f"Created agent: {agent.id}")

# Create a conversation thread
thread = project_client.agents.threads.create()
print(f"Created thread: {thread.id}")

# Send a message
message = project_client.agents.messages.create(
    thread_id=thread.id,
    role="user",
    content="Search for customers from Acme Corp"
)
print(f"Created message: {message.id}")

# Create a run with MCP tool resources
run = project_client.agents.runs.create(
    thread_id=thread.id,
    assistant_id=agent.id,
    tool_resources={
        "mcp": {
            "crm": {
                "headers": {
                    "x-api-key": os.environ.get("CRM_API_KEY", "")
                },
                "require_approval": False
            }
        }
    }
)
print(f"Created run: {run.id}")

# Wait for completion
while run.status in [RunStatus.QUEUED, RunStatus.IN_PROGRESS, RunStatus.REQUIRES_ACTION]:
    time.sleep(1)
    run = project_client.agents.runs.retrieve(
        thread_id=thread.id,
        run_id=run.id
    )
    print(f"Run status: {run.status}")

# Retrieve messages
messages = project_client.agents.messages.list(
    thread_id=thread.id,
    order=ListSortOrder.ASCENDING
)

# Display the conversation
for message in messages:
    if isinstance(message.content[0], MessageTextContent):
        role = "User" if message.role == "user" else "Assistant"
        print(f"\n{role}: {message.content[0].text.value}")

# Clean up
project_client.agents.delete_agent(agent.id)
project_client.agents.threads.delete_thread(thread.id)
print("\nCleaned up resources")

Node.js/TypeScript Implementation

For TypeScript developers, here is the equivalent implementation:

import { AIProjectsClient } from "@azure/ai-projects";
import { DefaultAzureCredential } from "@azure/identity";

const projectClient = new AIProjectsClient(
  process.env.PROJECT_CONNECTION_STRING!,
  new DefaultAzureCredential()
);

async function createMcpAgent() {
  // Create agent with MCP tool
  const agent = await projectClient.agents.createAgent({
    model: "gpt-4o",
    name: "CRM Assistant",
    instructions: `You are a helpful CRM assistant that helps users manage customer relationships.
You have access to tools for searching, retrieving, creating, and updating customers.
Always provide clear, professional responses.`,
    tools: [
      {
        type: "mcp",
        server_url: "https://your-crm-mcp-server.azurecontainerapps.io",
        server_label: "crm",
        allowed_tools: [
          "search_customers",
          "get_customer",
          "create_customer",
          "update_customer"
        ]
      }
    ]
  });
  console.log(`Created agent: ${agent.id}`);

  // Create thread
  const thread = await projectClient.agents.threads.create({});
  console.log(`Created thread: ${thread.id}`);

  // Add message
  await projectClient.agents.threads.messages.create(thread.id, {
    role: "user",
    content: "Show me all customers from Tech Solutions"
  });

  // Create run with MCP headers
  const run = await projectClient.agents.threads.runs.create(thread.id, {
    assistant_id: agent.id,
    tool_resources: {
      mcp: {
        crm: {
          headers: {
            "x-api-key": process.env.CRM_API_KEY || ""
          },
          require_approval: false
        }
      }
    }
  });
  console.log(`Created run: ${run.id}`);

  // Poll for completion
  let runStatus = await projectClient.agents.threads.runs.retrieve(
    thread.id,
    run.id
  );
  while (["queued", "in_progress", "requires_action"].includes(runStatus.status)) {
    await new Promise(resolve => setTimeout(resolve, 1000));
    runStatus = await projectClient.agents.threads.runs.retrieve(
      thread.id,
      run.id
    );
    console.log(`Run status: ${runStatus.status}`);
  }

  // Get messages
  const messages = await projectClient.agents.threads.messages.list(thread.id, {
    order: "asc"
  });

  // Display conversation
  for (const message of messages.data) {
    const role = message.role === "user" ? "User" : "Assistant";
    if (message.content[0].type === "text") {
      console.log(`\n${role}: ${message.content[0].text.value}`);
    }
  }

  // Cleanup
  await projectClient.agents.deleteAgent(agent.id);
  await projectClient.agents.threads.deleteThread(thread.id);
  console.log("\nCleaned up resources");
}

createMcpAgent().catch(console.error);

Building a TypeScript MCP Server for Azure AI Agents

The integration also works in the other direction. Let’s build an MCP server that exposes Azure AI agents as tools, so that Claude Desktop or any other MCP client can list, create, and query them.

Project Setup

mkdir azure-agent-mcp
cd azure-agent-mcp
npm init -y
npm install @modelcontextprotocol/sdk zod dotenv @azure/ai-projects @azure/identity
npm install -D typescript @types/node tsx

Implementation

import { Server } from "@modelcontextprotocol/sdk/server/index.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";
import {
  CallToolRequestSchema,
  ListToolsRequestSchema,
} from "@modelcontextprotocol/sdk/types.js";
import { AIProjectClient } from "@azure/ai-projects";
import { DefaultAzureCredential } from "@azure/identity";
import { z } from "zod";

interface AgentQuery {
  agentId: string;
  query: string;
  threadId?: string;
}

class AzureAgentMCPServer {
  private server: Server;
  private projectClient: AIProjectClient;
  private activeThreads: Map<string, string> = new Map();

  constructor() {
    this.server = new Server(
      {
        name: "azure-agent-mcp",
        version: "1.0.0",
      },
      {
        capabilities: {
          tools: {},
        },
      }
    );
    this.projectClient = new AIProjectClient(
      process.env.PROJECT_CONNECTION_STRING!,
      new DefaultAzureCredential()
    );
    this.setupHandlers();
  }

  private setupHandlers() {
    // List available tools
    this.server.setRequestHandler(ListToolsRequestSchema, async () => {
      return {
        tools: [
          {
            name: "list_agents",
            description: "List all available Azure AI agents",
            inputSchema: {
              type: "object",
              properties: {},
            },
          },
          {
            name: "query_agent",
            description: "Query a specific Azure AI agent",
            inputSchema: {
              type: "object",
              properties: {
                agent_id: {
                  type: "string",
                  description: "The ID of the Azure AI agent to query",
                },
                query: {
                  type: "string",
                  description: "The question or request to send to the agent",
                },
                thread_id: {
                  type: "string",
                  description: "Optional thread ID for conversation continuation",
                },
              },
              required: ["agent_id", "query"],
            },
          },
          {
            name: "create_agent",
            description: "Create a new Azure AI agent",
            inputSchema: {
              type: "object",
              properties: {
                name: {
                  type: "string",
                  description: "Name for the agent",
                },
                instructions: {
                  type: "string",
                  description: "System instructions for the agent",
                },
                model: {
                  type: "string",
                  description: "Model to use (default: gpt-4o)",
                },
              },
              required: ["name", "instructions"],
            },
          },
        ],
      };
    });

    // Handle tool execution
    this.server.setRequestHandler(CallToolRequestSchema, async (request) => {
      const { name, arguments: args } = request.params;
      try {
        switch (name) {
          case "list_agents":
            return await this.listAgents();
          case "query_agent": {
            // Map the snake_case tool arguments onto the camelCase AgentQuery fields
            const { agent_id, query, thread_id } = args as any;
            return await this.queryAgent({ agentId: agent_id, query, threadId: thread_id });
          }
          case "create_agent":
            return await this.createAgent(args as any);
          default:
            throw new Error(`Unknown tool: ${name}`);
        }
      } catch (error) {
        return {
          content: [
            {
              type: "text",
              text: `Error: ${error instanceof Error ? error.message : "Unknown error"}`,
            },
          ],
          isError: true,
        };
      }
    });
  }

  private async listAgents() {
    const agents = await this.projectClient.agents.listAgents();
    const agentList = agents.data.map(agent => ({
      id: agent.id,
      name: agent.name,
      model: agent.model,
      description: agent.description || "No description"
    }));
    return {
      content: [
        {
          type: "text",
          text: `Available Azure AI Agents:\n\n${JSON.stringify(agentList, null, 2)}`,
        },
      ],
    };
  }

  private async queryAgent(params: AgentQuery) {
    const { agentId, query, threadId } = params;

    // Get or create thread
    let thread = threadId
      ? await this.projectClient.agents.threads.retrieve(threadId)
      : await this.projectClient.agents.threads.create({});

    // Add message
    await this.projectClient.agents.threads.messages.create(thread.id, {
      role: "user",
      content: query,
    });

    // Create run
    const run = await this.projectClient.agents.threads.runs.create(thread.id, {
      assistant_id: agentId,
    });

    // Poll for completion
    let runStatus = await this.projectClient.agents.threads.runs.retrieve(
      thread.id,
      run.id
    );
    while (["queued", "in_progress"].includes(runStatus.status)) {
      await new Promise(resolve => setTimeout(resolve, 500));
      runStatus = await this.projectClient.agents.threads.runs.retrieve(
        thread.id,
        run.id
      );
    }

    // Get response
    const messages = await this.projectClient.agents.threads.messages.list(
      thread.id,
      { order: "desc", limit: 1 }
    );
    const response =
      messages.data[0].content[0].type === "text"
        ? messages.data[0].content[0].text.value
        : "No text response";
    return {
      content: [
        {
          type: "text",
          text: `## Response from Azure AI Agent\n\n${response}\n\n(thread_id: ${thread.id})`,
        },
      ],
    };
  }

  private async createAgent(params: {
    name: string;
    instructions: string;
    model?: string;
  }) {
    const agent = await this.projectClient.agents.createAgent({
      model: params.model || "gpt-4o",
      name: params.name,
      instructions: params.instructions,
    });
    return {
      content: [
        {
          type: "text",
          text: `Created agent: ${agent.name} (ID: ${agent.id})`,
        },
      ],
    };
  }

  async start() {
    const transport = new StdioServerTransport();
    await this.server.connect(transport);
    // Log to stderr: stdout is reserved for the MCP stdio transport
    console.error("Azure Agent MCP Server running on stdio");
  }
}

// Start the server
const server = new AzureAgentMCPServer();
server.start().catch(console.error);

Multi-Agent Workflows with A2A Protocol

Azure AI Foundry supports Agent-to-Agent (A2A) communication through Semantic Kernel, enabling sophisticated multi-agent workflows where specialized agents collaborate to solve complex problems.

Building a Multi-Agent System

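Let’s create a system where multiple specialized agents work together. In this example the client code chains three agents in sequence, passing each agent's output to the next: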

import os

from azure.ai.projects import AIProjectClient
from azure.identity import DefaultAzureCredential
from azure.ai.agents.models import McpTool

project_client = AIProjectClient.from_connection_string(
    credential=DefaultAzureCredential(),
    conn_str=os.environ["PROJECT_CONNECTION_STRING"]
)

# Create specialized agents

# Research Agent
research_agent = project_client.agents.create_agent(
    model="gpt-4o",
    name="Research Agent",
    instructions="""You are a research specialist. Your job is to gather information,
analyze data, and provide comprehensive research findings. You have access to web
search and can query databases for information.""",
    tools=[
        {"type": "bing_search"},
        McpTool(
            server_url="https://database-mcp-server.azurecontainerapps.io",
            server_label="database"
        )
    ]
)

# Content Agent
content_agent = project_client.agents.create_agent(
    model="gpt-4o",
    name="Content Writer",
    instructions="""You are a professional content writer. You take research findings
and create engaging, well-structured content. You focus on clarity, accuracy,
and compelling narratives."""
)

# Review Agent
review_agent = project_client.agents.create_agent(
    model="gpt-4o",
    name="Quality Reviewer",
    instructions="""You are a quality reviewer. You check content for accuracy,
clarity, grammar, and adherence to guidelines. You provide constructive feedback
and suggest improvements."""
)

def multi_agent_workflow(topic: str):
    """
    Orchestrate a multi-agent workflow for content creation
    """
    print(f"Starting multi-agent workflow for topic: {topic}")

    # Step 1: Research phase
    research_thread = project_client.agents.threads.create()
    project_client.agents.messages.create(
        thread_id=research_thread.id,
        role="user",
        content=f"Research the topic: {topic}. Provide key findings and data points."
    )
    research_run = project_client.agents.runs.create_and_process(
        thread_id=research_thread.id,
        assistant_id=research_agent.id
    )
    research_messages = project_client.agents.messages.list(
        thread_id=research_thread.id,
        order="desc",
        limit=1
    )
    research_findings = research_messages.data[0].content[0].text.value
    print(f"\n--- Research Findings ---\n{research_findings}\n")

    # Step 2: Content creation phase
    content_thread = project_client.agents.threads.create()
    project_client.agents.messages.create(
        thread_id=content_thread.id,
        role="user",
        content=f"""Based on these research findings, write a comprehensive article:
Research Findings:
{research_findings}
Create an engaging article with proper structure, headings, and examples."""
    )
    content_run = project_client.agents.runs.create_and_process(
        thread_id=content_thread.id,
        assistant_id=content_agent.id
    )
    content_messages = project_client.agents.messages.list(
        thread_id=content_thread.id,
        order="desc",
        limit=1
    )
    draft_content = content_messages.data[0].content[0].text.value
    print(f"\n--- Draft Content ---\n{draft_content}\n")

    # Step 3: Review phase
    review_thread = project_client.agents.threads.create()
    project_client.agents.messages.create(
        thread_id=review_thread.id,
        role="user",
        content=f"""Review this article for quality, accuracy, and clarity:
{draft_content}
Provide specific feedback and a revised version if needed."""
    )
    review_run = project_client.agents.runs.create_and_process(
        thread_id=review_thread.id,
        assistant_id=review_agent.id
    )
    review_messages = project_client.agents.messages.list(
        thread_id=review_thread.id,
        order="desc",
        limit=1
    )
    final_content = review_messages.data[0].content[0].text.value
    print(f"\n--- Final Content ---\n{final_content}\n")

    return {
        "research": research_findings,
        "draft": draft_content,
        "final": final_content
    }

# Execute workflow
result = multi_agent_workflow("Impact of AI on healthcare in 2025")

C# Implementation with Semantic Kernel

For .NET developers, the same workflow can be written in C# with Semantic Kernel and the Azure.AI.Projects client:

using Microsoft.SemanticKernel;
using Microsoft.SemanticKernel.Agents;
using Microsoft.SemanticKernel.Connectors.AzureOpenAI;
using Azure.AI.Projects;
using Azure.Identity;

public class MultiAgentSystem
{
    private readonly AIProjectClient _projectClient;
    private readonly Kernel _kernel;

    public MultiAgentSystem(string connectionString)
    {
        _projectClient = new AIProjectClient(
            connectionString,
            new DefaultAzureCredential()
        );
        _kernel = Kernel.CreateBuilder()
            .AddAzureOpenAIChatCompletion(
                deploymentName: "gpt-4o",
                endpoint: Environment.GetEnvironmentVariable("AZURE_OPENAI_ENDPOINT")!,
                apiKey: Environment.GetEnvironmentVariable("AZURE_OPENAI_KEY")!
            )
            .Build();
    }

    // Returns the finished article text
    public async Task<string> ExecuteWorkflowAsync(string topic)
    {
        // Create Research Agent with MCP tools
        var researchAgent = await _projectClient.Agents.CreateAgentAsync(
            model: "gpt-4o",
            name: "Research Agent",
            instructions: "You are a research specialist...",
            tools: new[]
            {
                new McpTool
                {
                    ServerUrl = "https://database-mcp-server.azurecontainerapps.io",
                    ServerLabel = "database"
                }
            }
        );

        // Create Content Agent
        var contentAgent = await _projectClient.Agents.CreateAgentAsync(
            model: "gpt-4o",
            name: "Content Writer",
            instructions: "You are a professional content writer..."
        );

        // Execute research phase
        var researchThread = await _projectClient.Agents.Threads.CreateThreadAsync();
        await _projectClient.Agents.Threads.Messages.CreateMessageAsync(
            researchThread.Id,
            role: "user",
            content: $"Research the topic: {topic}"
        );
        var researchRun = await _projectClient.Agents.Threads.Runs.CreateAndProcessRunAsync(
            researchThread.Id,
            researchAgent.Id
        );
        var researchMessages = await _projectClient.Agents.Threads.Messages.ListMessagesAsync(
            researchThread.Id,
            order: "desc",
            limit: 1
        );
        var researchFindings = researchMessages.Data[0].Content[0].Text.Value;

        // Execute content creation phase
        var contentThread = await _projectClient.Agents.Threads.CreateThreadAsync();
        await _projectClient.Agents.Threads.Messages.CreateMessageAsync(
            contentThread.Id,
            role: "user",
            content: $"Write an article based on: {researchFindings}"
        );
        var contentRun = await _projectClient.Agents.Threads.Runs.CreateAndProcessRunAsync(
            contentThread.Id,
            contentAgent.Id
        );
        var contentMessages = await _projectClient.Agents.Threads.Messages.ListMessagesAsync(
            contentThread.Id,
            order: "desc",
            limit: 1
        );
        return contentMessages.Data[0].Content[0].Text.Value;
    }
}

Enterprise Integration Patterns

When building production multi-agent systems with MCP, follow these enterprise patterns:

Identity and Access Management

Every agent and MCP server interaction should be tied to identity using Microsoft Entra Agent ID:

# Configure agent with Entra ID

agent = project_client.agents.create_agent(

model="gpt-4o",

name="Secure CRM Agent",

instructions="...",

tools=[

McpTool(

server_url="https://crm-mcp-server.azurecontainerapps.io",

server_label="crm"

)

],

metadata={

"entra_agent_id": "agent-12345",

"department": "sales",

"data_classification": "confidential"

}

)

# Pass identity context in headers

run = project_client.agents.runs.create(

thread_id=thread.id,

assistant_id=agent.id,

tool_resources={

"mcp": {

"crm": {

"headers": {

"Authorization": f"Bearer {get_entra_token()}",

"X-User-Principal": user_principal_name

}

}

}

}

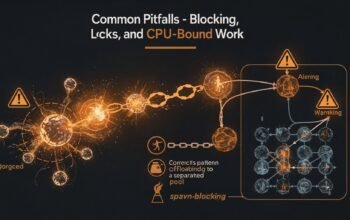

)Tool Approval Workflows

For sensitive operations, implement approval requirements:

run = project_client.agents.runs.create(

thread_id=thread.id,

assistant_id=agent.id,

tool_resources={

"mcp": {

"crm": {

"headers": {"x-api-key": api_key},

"require_approval": True # Require approval for tool calls

}

}

}

)

# Monitor run status for approval required

while True:

run_status = project_client.agents.runs.retrieve(thread.id, run.id)

if run_status.status == "requires_action":

# Get required actions

for action in run_status.required_action.submit_tool_outputs.tool_calls:

print(f"Tool call requires approval: {action.function.name}")

print(f"Arguments: {action.function.arguments}")

# Implement approval logic

if approve_tool_call(action):

# Submit approval

project_client.agents.runs.submit_tool_outputs(

thread_id=thread.id,

run_id=run.id,

tool_outputs=[{

"tool_call_id": action.id,

"output": execute_approved_tool(action)

}]

)

if run_status.status in ["completed", "failed", "cancelled"]:

break

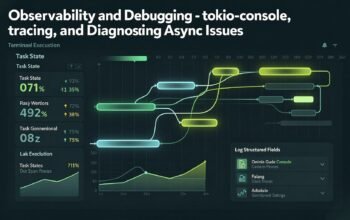

time.sleep(1)Monitoring and Observability

Azure AI Foundry provides comprehensive observability for agent operations. Enable detailed tracking:

import os

from azure.monitor.opentelemetry import configure_azure_monitor
from opentelemetry import trace

# Configure Azure Monitor
configure_azure_monitor(
    connection_string=os.environ["APPLICATIONINSIGHTS_CONNECTION_STRING"]
)
tracer = trace.get_tracer(__name__)

# agent and run_agent() come from your own agent code
def execute_agent_task(query: str):
    with tracer.start_as_current_span("agent_execution") as span:
        span.set_attribute("query", query)
        span.set_attribute("agent_id", agent.id)
        try:
            # Execute agent task
            result = run_agent(query)
            span.set_attribute("success", True)
            span.set_attribute("response_length", len(result))
            return result
        except Exception as e:
            span.set_attribute("success", False)
            span.set_attribute("error", str(e))
            raise

Best Practices for Production

- Use thread storage: Enable bring-your-own thread storage for conversation persistence across sessions

- Implement rate limiting: Protect MCP servers from overuse with Azure API Management

- Version your agents: Maintain multiple versions of agents for A/B testing and rollback

- Monitor token usage: Track and optimize token consumption across agents

- Implement circuit breakers: Gracefully handle MCP server failures

- Use connection pooling: Reuse connections to MCP servers for better performance

- Cache tool schemas: Cache MCP tool discovery results to reduce overhead

- Implement retry logic: Handle transient failures with exponential backoff
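Two of these practices, retry with exponential backoff and circuit breaking, can be sketched with the standard library alone. The code below is a minimal illustration under stated assumptions, not a production implementation: `CircuitBreaker`, `retry_with_backoff`, and `flaky_mcp_call` are hypothetical names, and the thresholds and delays are arbitrary defaults.

```python
import random
import time


class CircuitOpenError(RuntimeError):
    """Raised while the breaker is rejecting calls."""


class CircuitBreaker:
    """Stop calling a failing dependency until a cool-down has passed."""

    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("MCP server circuit is open")
            # Cool-down elapsed: allow one trial call; one more failure reopens.
            self._opened_at = None
            self._failures = self.failure_threshold - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0
        return result


def retry_with_backoff(func, attempts=4, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call func, doubling the wait after each failure and adding jitter."""
    for attempt in range(attempts):
        try:
            return func()
        except CircuitOpenError:
            raise  # do not keep hammering a server the breaker has given up on
        except Exception:
            if attempt == attempts - 1:
                raise
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))


# Demo: a stand-in for an MCP call that fails twice, then succeeds.
calls = {"count": 0}


def flaky_mcp_call():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient failure")
    return "ok"


breaker = CircuitBreaker(failure_threshold=5)
result = retry_with_backoff(lambda: breaker.call(flaky_mcp_call), sleep=lambda s: None)
print(result, calls["count"])
```

Placing the breaker inside the retried call means transient errors are retried, while a server that keeps failing is cut off quickly instead of absorbing every retry.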

Looking Ahead

Azure AI Agent Service with MCP integration represents a fundamental shift in building enterprise AI systems. By combining managed agent infrastructure with standardized tool protocols, developers can create sophisticated multi-agent workflows without managing complex integration code.

In the next post, we will explore database-specific MCP implementations, focusing on Azure Database for PostgreSQL integration. You will learn how to build MCP servers that expose database operations through natural language, implement fine-grained access control, and optimize query performance for AI-driven data access.
