In the span of a single week in early March 2026, more than twelve major AI models shipped across language, video, and spatial reasoning domains. That pace is not the exception anymore. It is the new baseline. And while the headlines tend to track which model scored highest on the latest benchmark, the more important story is structural: the gap between open-source and proprietary models has effectively closed, local execution is no longer a compromise, and the first credible self-improving AI agents are running on production hardware.

This post breaks down what actually shipped in March 2026, what the benchmarks mean in practice, and what developers need to rethink about how they choose and deploy LLMs.

The Proprietary Tier: GPT-5.4 and Gemini 3.1 Flash-Lite

OpenAI shipped GPT-5.4 on March 5, 2026. The headline numbers: a 1.05-million-token context window, 57.7% on SWE-Bench Pro, 33% fewer individual claim errors compared to GPT-5.2, and native computer-use capabilities baked into the standard model rather than a separate system. Three variants ship together: Standard, Thinking (which scored 83.0% on the GDPVal benchmark, above the average human expert threshold), and Pro.

Pricing sits at $2.50 per million input tokens. That is not budget territory, but given what the model can do, it is no longer the premium it once was. For developers building agentic systems, the native computer-use integration is the detail worth watching. Prior approaches bolted computer interaction on top of a language model; GPT-5.4 treats it as a first-class capability, so tool-use patterns that previously required custom scaffolding now work out of the box.

OpenAI also released GPT-5.3 Instant, which became the default model inside ChatGPT. The combination of GPT-5.3 Instant with web search produces a 26.8% reduction in hallucinations according to OpenAI’s internal benchmarks. For teams evaluating whether to build on the API or use ChatGPT as a workflow tool, that number matters more than the raw capability scores.

On the Google side, Gemini 3.1 Flash-Lite launched at $0.25 per million input tokens. That is one-tenth of GPT-5.4's input price, and response times are 2.5x faster than its predecessor's. The model includes adjustable reasoning levels, so developers can trade latency against accuracy on each API call. At that price and speed, Flash-Lite targets the high-volume, latency-sensitive tier of applications where GPT-5.4 is simply not economical.
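To see why a tenfold price gap matters at volume, here is a minimal sketch that estimates monthly input-token spend. It uses only the input prices quoted above. Output-token pricing and caching discounts are deliberately left out, and the workload (100,000 requests per day at 2,000 input tokens each) is a hypothetical example.

```javascript
// Input prices in USD per million tokens, as quoted above.
// Output-token prices are not included, so real bills will be higher.
const INPUT_PRICE_PER_MILLION = {
  'gpt-5.4': 2.5,
  'gemini-3.1-flash-lite': 0.25,
};

// Estimate monthly input-token spend for a given request volume.
function monthlyInputCost(model, requestsPerDay, inputTokensPerRequest, days = 30) {
  const price = INPUT_PRICE_PER_MILLION[model];
  if (price === undefined) throw new Error(`No price listed for ${model}`);
  const tokens = requestsPerDay * inputTokensPerRequest * days;
  return (tokens / 1_000_000) * price;
}

// Hypothetical workload: 100,000 requests/day at 2,000 input tokens each.
for (const model of Object.keys(INPUT_PRICE_PER_MILLION)) {
  console.log(model, monthlyInputCost(model, 100_000, 2_000).toFixed(2));
}
```

At that hypothetical volume, the same traffic costs $15,000 a month in input tokens on GPT-5.4 versus $1,500 on Flash-Lite.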

The Open-Weight Shift: Qwen 3.5 Small and NVIDIA Nemotron 3 Super

The more significant story this month is not what OpenAI or Google shipped. It is what Alibaba and NVIDIA shipped, and what it means for the assumption that proprietary models hold a permanent capability lead.

Alibaba released Qwen 3.5 Small on March 1, 2026. The 9-billion-parameter model uses a Gated DeltaNet architecture combining linear attention with sparse Mixture-of-Experts routing. It scores 81.7 on GPQA Diamond, a graduate-level science reasoning benchmark, while running on a standard laptop or an iPhone with 4GB of RAM. The 262K native context window is extensible to 1M tokens. Input costs run around $0.10 per million tokens via the API.
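The linear-attention half of that design is what keeps long contexts cheap. Softmax attention caches every past key and value. A Gated DeltaNet-style layer instead updates one fixed-size state matrix with the gated delta rule from the published Gated DeltaNet research: S_t = alpha_t * S_{t-1} (I - beta_t k_t k_t^T) + beta_t v_t k_t^T, read out as o_t = S_t q_t.

The sketch below is a toy, single-head version. It assumes scalar gates, unnormalized keys, and no learned projections or chunked kernels. It is not Alibaba's implementation, and it does not model the MoE routing.

```javascript
// Toy single-head gated delta rule. The state S (dv x dk) has a fixed size,
// no matter how many tokens have been processed.
//   S_t = alpha * S_{t-1} (I - beta * k k^T) + beta * v k^T
//   o_t = S_t q
// Illustration only: no normalization, projections, or chunking.

function zeros(rows, cols) {
  return Array.from({ length: rows }, () => new Array(cols).fill(0));
}

function gatedDeltaStep(S, k, v, alpha, beta) {
  const dv = S.length;
  const dk = k.length;
  // Sk = S k: what the state currently returns for key k.
  const Sk = S.map(row => row.reduce((acc, s, j) => acc + s * k[j], 0));
  const next = zeros(dv, dk);
  for (let i = 0; i < dv; i++) {
    for (let j = 0; j < dk; j++) {
      // alpha * (S - beta * (S k) k^T) + beta * v k^T
      next[i][j] = alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j];
    }
  }
  return next;
}

// Query the state: o = S q.
function readOut(S, q) {
  return S.map(row => row.reduce((acc, s, j) => acc + s * q[j], 0));
}

// Write value [3, 4] under key [1, 0], then overwrite it with [5, 6].
let S = zeros(2, 2);
S = gatedDeltaStep(S, [1, 0], [3, 4], 1, 1);
S = gatedDeltaStep(S, [1, 0], [5, 6], 1, 1);
console.log(readOut(S, [1, 0])); // [ 5, 6 ]
```

The delta rule first subtracts what the state already stores for a key, so the second write replaces [3, 4] instead of adding to it. Plain linear attention would return [8, 10]. Setting alpha below 1 decays older memories.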

To put that in context: Alibaba's 9-billion-parameter open model matched OpenAI's 120-billion-parameter open-weight model, GPT-OSS-120B, on GPQA Diamond. The efficiency gains from architecture changes, not just compute scaling, are now large enough to flip the parameter-count intuition that most teams have been using to evaluate models.

NVIDIA's Nemotron 3 Super, announced at GTC on March 11, takes a different approach to the same efficiency problem. The model uses a hybrid Mamba-Transformer Mixture-of-Experts architecture with 120 billion total parameters, of which only 12 billion are active for each token. It scores 60.47% on SWE-Bench Verified under the OpenHands scaffold, the best result for any open-weight model on that benchmark. For comparison, GPT-OSS-120B scores 41.90% on the same test. On the RULER benchmark at 1M tokens, Nemotron 3 Super scores 91.75% against GPT-OSS-120B's 22.30%. That difference matters enormously for agentic workflows that need to hold long task histories in context.
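"Only 12 billion activate" comes from routing: a small router scores every expert for each token, and only the top few are actually run. The sources describe Nemotron 3 Super's router only at a high level. What follows is therefore a generic top-k MoE sketch with made-up experts and router scores, not NVIDIA's implementation.

```javascript
// Generic top-k Mixture-of-Experts routing (illustrative, not Nemotron's code).
// Keep the k highest-scoring experts and softmax-normalize their scores into weights.
function topKRoute(routerLogits, k) {
  const ranked = routerLogits
    .map((logit, expert) => ({ expert, logit }))
    .sort((a, b) => b.logit - a.logit) // stable sort: ties keep index order
    .slice(0, k);
  const maxLogit = ranked[0].logit; // subtract max for numerical stability
  const exps = ranked.map(r => Math.exp(r.logit - maxLogit));
  const total = exps.reduce((a, b) => a + b, 0);
  return ranked.map((r, i) => ({ expert: r.expert, weight: exps[i] / total }));
}

// Run only the selected experts and mix their outputs by router weight.
function moeForward(x, experts, routerLogits, k) {
  const routes = topKRoute(routerLogits, k);
  const out = new Array(x.length).fill(0);
  for (const { expert, weight } of routes) {
    const y = experts[expert](x); // unselected experts are never evaluated
    for (let i = 0; i < out.length; i++) out[i] += weight * y[i];
  }
  return { out, used: routes.map(r => r.expert) };
}

// 8 toy experts; expert e simply scales its input by (e + 1). Route to 2 of them.
const experts = Array.from({ length: 8 }, (_, e) => x => x.map(v => v * (e + 1)));
const result = moeForward([1, 2], experts, [0.1, 2.0, -1.0, 2.0, 0.5, 0, 0, 0], 2);
console.log(result.used, result.out); // [ 1, 3 ] [ 3, 6 ]
```

The trade-off is memory. Per-token compute scales roughly with the active parameters, but every expert still has to be available when it is selected. Serving therefore needs room for all 120B parameters even though each token touches about 12B.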

The inference throughput advantage is also substantial: 2.2x that of GPT-OSS-120B and 7.5x that of Qwen3.5-122B in an 8K-input / 16K-output benchmark setting. For teams running models on their own infrastructure, that throughput difference translates directly into cost and latency.
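On fixed hardware, cost per generated token scales with the inverse of throughput. Here is a minimal sketch of that arithmetic. The GPU hourly price, GPU count, and baseline tokens per second are hypothetical placeholders; substitute numbers measured on your own cluster.

```javascript
// Self-hosting cost per million generated tokens, derived from throughput.
// All inputs are placeholders, not published figures.
function costPerMillionTokens(gpuHourlyUsd, numGpus, tokensPerSecond) {
  const tokensPerHour = tokensPerSecond * 3600;
  return ((gpuHourlyUsd * numGpus) / tokensPerHour) * 1_000_000;
}

const baseline = costPerMillionTokens(4, 8, 10_000);     // hypothetical baseline model
const faster = costPerMillionTokens(4, 8, 10_000 * 2.2); // 2.2x throughput, same GPUs
console.log(baseline.toFixed(3), faster.toFixed(3), (baseline / faster).toFixed(2)); // 0.889 0.404 2.20
```

Whatever the absolute numbers, a 2.2x throughput gain on the same hardware cuts cost per token to about 1/2.2 of the baseline, roughly 45%. That holds only if your workload sees the same speedup.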

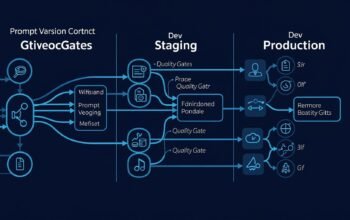

The LLM Landscape in One Diagram

```mermaid
graph TD
    A[March 2026 LLM Landscape] --> B[Proprietary Cloud]
    A --> C[Open-Weight]
    B --> D["GPT-5.4 - OpenAI<br/>1.05M ctx, $2.50/1M<br/>57.7% SWE-Bench Pro"]
    B --> E["GPT-5.3 Instant - OpenAI<br/>ChatGPT Default<br/>26.8% less hallucination with search"]
    B --> F["Gemini 3.1 Flash-Lite - Google<br/>$0.25/1M, 2.5x faster<br/>Adjustable reasoning levels"]
    C --> G["Nemotron 3 Super - NVIDIA<br/>120B total / 12B active<br/>60.47% SWE-Bench Verified"]
    C --> H["Qwen 3.5 Small 9B - Alibaba<br/>81.7 GPQA Diamond<br/>262K native ctx, runs on iPhone"]
    C --> I["LTX 2.3 - Lightricks<br/>22B params, 4K at 50FPS<br/>Apache 2.0"]
    G --> J[Self-Hosted Agentic AI]
    H --> K[Edge and Local Deployment]
    D --> L[Cloud Agentic Workflows]
    F --> M[High-Volume Low-Cost APIs]
```

The Darwin Godel Machine: Self-Improving AI Becomes Real

Separate from the model releases, the research result getting the most attention in technical circles is the Darwin Godel Machine (DGM), a self-referential coding agent that modifies its own source code to improve its performance. On SWE-bench, DGM raised its score from 20.0% to 50.0% autonomously, without human intervention. That is a 150% relative improvement, achieved by the system rewriting itself.

This is not the same category as fine-tuning or reinforcement learning from human feedback. The underlying foundation model's weights stay frozen; what changes is the agent's own code, such as its tools and workflows. The system analyzes where it fails, generates modified versions of its code, and evaluates them on coding benchmarks. Working variants go into an archive, and later rounds of self-modification branch from that archive. The practical implications for production AI systems are still being worked out, but the existence proof matters: recursive self-improvement is no longer a theoretical concern.
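The generate, evaluate, archive cycle can be illustrated with a toy loop. To be clear about what is swapped in:

- The "agents" are just parameter vectors.
- "Self-modification" is a random nudge, standing in for the foundation model proposing code edits.
- The benchmark is a made-up scoring function.

This is not the DGM code, only a sketch of archive-based open-ended search under those assumptions.

```javascript
// Toy archive-based self-improvement loop (illustrative; not the DGM implementation).

// Small seeded PRNG (mulberry32) so runs are reproducible.
function mulberry32(seed) {
  let a = seed >>> 0;
  return function () {
    a = (a + 0x6d2b79f5) | 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Hypothetical benchmark: higher score when params approach a hidden target.
const TARGET = [0.8, 0.2, 0.6];
function benchmark(params) {
  const err = params.reduce((acc, p, i) => acc + Math.abs(p - TARGET[i]), 0);
  return 1 - err / TARGET.length;
}

// Choose a parent from the whole archive, weighted by score.
function pickParent(archive, rand) {
  const total = archive.reduce((acc, a) => acc + a.score, 0);
  let r = rand() * total;
  for (const a of archive) {
    r -= a.score;
    if (r <= 0) return a;
  }
  return archive[archive.length - 1]; // guard against floating-point leftovers
}

function selfImprove(initial, iterations, rand) {
  const archive = [{ params: initial, score: benchmark(initial) }];
  for (let step = 0; step < iterations; step++) {
    const parent = pickParent(archive, rand);
    // Stand-in for "the agent rewrites its own code": nudge one parameter.
    const child = parent.params.slice();
    const i = Math.floor(rand() * child.length);
    child[i] = Math.min(1, Math.max(0, child[i] + (rand() - 0.5) * 0.4));
    // Every evaluated variant joins the archive, not only strict improvements,
    // so weaker branches stay available as stepping stones.
    archive.push({ params: child, score: benchmark(child) });
  }
  const best = archive.reduce((b, a) => (a.score > b.score ? a : b));
  return { best, archive };
}

const { best, archive } = selfImprove([0, 0, 0], 200, mulberry32(42));
console.log(archive.length, best.score.toFixed(3));
```

The design choice worth noticing is that the archive keeps every variant. Selection pressure comes from the score-weighted choice of parent, not from discarding non-improvements.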

OpenAI Acquires Astral: What It Means for Developers

OpenAI announced plans to acquire Astral, the startup behind uv (the fast Python package manager and resolver) and ruff (the high-performance Python linter and formatter). OpenAI noted that Codex, its AI coding platform, now has more than 2 million users, triple the count from the start of 2026.

The acquisition signals that OpenAI wants to own the developer toolchain, not just the model. Astral's tools have become critical infrastructure for Python development. Astral benchmarks uv as 10-100x faster than pip for dependency resolution, and ruff has become the default linter for many new Python projects. Bringing those tools under OpenAI's umbrella while Codex grows to millions of users sets up a tight loop between writing code, executing code, and the AI that assists both.

For developers, the near-term impact is likely minimal. Astral's tools are open source and will remain so. The longer-term question worth watching is whether Codex gets deep integration with uv and ruff that gives OpenAI's platform an edge in developer workflow tooling.

Practical Implications: Choosing a Model in March 2026

The decision framework most teams have been using, which typically started with “pick the best model that fits the budget,” needs an update. Three structural changes now apply:

- Parameter count no longer predicts capability. A 9B open-weight model matching OpenAI's 120B GPT-OSS-120B on graduate-level science reasoning is not a fluke. Architecture innovation has decoupled capability from raw size.

- Local deployment is a viable production choice. Qwen 3.5 Small runs on a device with 4GB of RAM and offers a 262K context window, which removes the API-dependency assumption for a wide range of use cases. Edge deployment is no longer a constrained fallback.

- Agentic use cases have a different optimal model. For multi-step agent workflows, Nemotron 3 Super offers long-context retention (91.75% on RULER at 1M tokens), 60.47% on SWE-Bench Verified, and the option to self-host. Together, these make it the leading choice for teams that care about cost control and data residency.
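The three changes above can be folded into a small routing rule that picks a deployment tier from requirements rather than from parameter count. This is a hypothetical sketch. The requirement flags, tier names, and tier-to-model mapping are illustrative assumptions drawn from the models discussed here, not a published decision procedure.

```javascript
// Hypothetical tier -> model mapping, based on the models discussed above.
const TIERS = {
  local: 'qwen-3.5-small-9b',
  'self-hosted': 'nemotron-3-super',
  'frontier-api': 'gpt-5.4',
  'low-cost-api': 'gemini-3.1-flash-lite',
  'general-api': 'gpt-5.3-instant',
};

// Parameter count is deliberately not an input: requirements drive the choice.
function chooseTier(req = {}) {
  const {
    onDevice = false,       // must run without network access
    dataResidency = false,  // data may not leave your infrastructure
    multiStepAgent = false, // long-horizon, tool-using workflows
    costSensitive = false,  // cost control outweighs peak capability
    highVolume = false,     // many cheap, latency-sensitive calls
  } = req;
  if (onDevice) return 'local';
  if (dataResidency) return 'self-hosted';
  if (multiStepAgent) return costSensitive ? 'self-hosted' : 'frontier-api';
  if (highVolume) return 'low-cost-api';
  return 'general-api';
}

console.log(TIERS[chooseTier({ multiStepAgent: true, costSensitive: true })]); // nemotron-3-super
```

The order of the checks encodes priority. A hard constraint such as on-device execution or data residency overrides capability preferences.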

Code Example: Model Selection with Fallback in Node.js

Here is a practical pattern for building a model-agnostic LLM client in Node.js that selects based on task type and falls back gracefully:

```javascript
// Requires Node.js 18+ for the global fetch API.

// @group Configuration : OpenAI-compatible base URL per provider
// (assumed endpoints; confirm against each provider's documentation)
const PROVIDER_BASE_URLS = {
  openai: 'https://api.openai.com/v1',
  google: 'https://generativelanguage.googleapis.com/v1beta/openai',
  nvidia: 'https://integrate.api.nvidia.com/v1',
  alibaba: 'https://dashscope-intl.aliyuncs.com/compatible-mode/v1',
};

// @group Configuration : Model registry with capability profiles
// (model IDs are illustrative; use the exact IDs from each provider's catalog)
const MODEL_REGISTRY = {
  agentic: {
    primary: { provider: 'nvidia', model: 'nemotron-3-super-120b-a12b' },
    fallback: { provider: 'openai', model: 'gpt-5.4' },
  },
  edge: {
    // For true on-device use, point this entry at a local OpenAI-compatible server instead.
    primary: { provider: 'alibaba', model: 'qwen-3.5-small-9b' },
    fallback: { provider: 'google', model: 'gemini-3.1-flash-lite' },
  },
  general: {
    primary: { provider: 'openai', model: 'gpt-5.3-instant' },
    fallback: { provider: 'google', model: 'gemini-3.1-flash-lite' },
  },
};

// @group BusinessLogic : Resolve the registry entry (primary + fallback) for a task
function selectEntry(taskType, options = {}) {
  const { forceLocal = false } = options;
  if (forceLocal) return MODEL_REGISTRY.edge;
  return MODEL_REGISTRY[taskType] ?? MODEL_REGISTRY.general;
}

// Select the primary model for a task
function selectModel(taskType, options = {}) {
  return selectEntry(taskType, options).primary;
}

// @group Utilities : Direct provider call without selection logic
async function callLLMDirect(model, messages) {
  const baseUrl = PROVIDER_BASE_URLS[model.provider];
  if (!baseUrl) throw new Error(`Unknown provider: ${model.provider}`);
  const response = await fetch(`${baseUrl}/chat/completions`, {
    method: 'POST',
    headers: {
      'Content-Type': 'application/json',
      // Reads e.g. NVIDIA_API_KEY or OPENAI_API_KEY from the environment
      'Authorization': `Bearer ${process.env[model.provider.toUpperCase() + '_API_KEY']}`,
    },
    body: JSON.stringify({ model: model.model, messages }),
  });
  if (!response.ok) throw new Error(`${model.model} failed: HTTP ${response.status}`);
  return response.json();
}

// @group APIEndpoints : Unified LLM call with automatic fallback
async function callLLM(taskType, messages, options = {}) {
  // Primary and fallback come from the same entry, so forceLocal also governs the fallback
  const { primary, fallback } = selectEntry(taskType, options);
  try {
    return await callLLMDirect(primary, messages);
  } catch (err) {
    console.warn(`Primary model ${primary.model} failed, trying ${fallback.model}:`, err.message);
    return callLLMDirect(fallback, messages);
  }
}

module.exports = { callLLM, selectModel };
```

What to Watch in Q2 2026

Morgan Stanley’s March 2026 research note warned that a transformative AI leap is coming in the first half of 2026, driven by unprecedented compute accumulation at leading labs. That language would have sounded like marketing copy two years ago. Given what has actually shipped this quarter, it reads more like an understatement.

- Darwin Godel Machine follow-on research. If the autonomous self-improvement results replicate, the development velocity of AI systems themselves changes. Every lab with a coding agent is now asking whether their system can write better versions of itself.

- Local model ecosystem tooling. Running Qwen 3.5 Small on consumer hardware shows the capability exists. A mature ecosystem of tools for managing, updating, and orchestrating local models is what makes it practically useful at scale.

- OpenAI plus Astral integration timeline. The acquisition will take months to close and longer to integrate. But the signals about where OpenAI sees developer workflow going will appear in Codex feature announcements before any formal product release.

- Open-source agentic frameworks. With Nemotron 3 Super as the clear open-weight leader for agentic tasks, frameworks built around its 1M-token context and SWE-Bench performance will start appearing. The infrastructure around the model will matter as much as the model itself.

The practical message for development teams is this: the model landscape now moves faster than annual architecture reviews, and even a team that reviews model choices quarterly is at a structural disadvantage. Building with provider abstraction and model-agnostic evaluation pipelines is not over-engineering. It is the minimum viable infrastructure for 2026.
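A model-agnostic evaluation pipeline can start very small. The sketch below is not tied to any specific framework. It treats each candidate model as any async function from prompt to text, so a cloud API, a self-hosted endpoint, or a local model plugs in the same way, and it scores all candidates against the same test cases. The stub models and test cases are hypothetical placeholders for real provider calls and real task checks.

```javascript
// Minimal model-agnostic evaluation harness (a sketch, not a specific framework).
// candidates: { name: async (prompt) => string }
// cases: [{ prompt, check: (output) => boolean }]
async function evaluate(candidates, cases) {
  const results = {};
  for (const [name, generate] of Object.entries(candidates)) {
    let passed = 0;
    const started = Date.now();
    for (const { prompt, check } of cases) {
      try {
        if (check(await generate(prompt))) passed++;
      } catch {
        // A failed call counts as a failed case instead of aborting the run.
      }
    }
    results[name] = { passRate: passed / cases.length, ms: Date.now() - started };
  }
  return results;
}

// Hypothetical test cases and stub models standing in for real providers.
const cases = [
  { prompt: '2+2', check: out => out.trim() === '4' },
  { prompt: 'capital of France', check: out => /paris/i.test(out) },
];
const candidates = {
  stubA: async p => (p === '2+2' ? '4' : 'Paris'),
  stubB: async p => (p === '2+2' ? '5' : 'Paris'),
};

evaluate(candidates, cases).then(r => console.log(r));
```

When a new model ships, swapping it in means adding one entry to `candidates` and rerunning the same cases, which is what makes a quarterly (or faster) review cycle cheap.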

References

- BuildFastWithAI – “12+ AI Models in March 2026: The Week That Changed AI” (https://www.buildfastwithai.com/blogs/ai-models-march-2026-releases)

- DevFlokers – “AI News Last 24 Hours (March 24, 2026): Models and Breakthroughs” (https://www.devflokers.com/blog/ai-news-march-24-2026-releases-breakthroughs)

- NVIDIA Developer Blog – “Introducing Nemotron 3 Super: An Open Hybrid Mamba-Transformer MoE for Agentic Reasoning” (https://developer.nvidia.com/blog/introducing-nemotron-3-super-an-open-hybrid-mamba-transformer-moe-for-agentic-reasoning/)

- MarkTechPost – “NVIDIA Releases Nemotron 3 Super: A 120B Parameter Open-Source Model” (https://www.marktechpost.com/2026/03/11/nvidia-releases-nemotron-3-super-a-120b-parameter-open-source-hybrid-mamba-attention-moe-model-delivering-5x-higher-throughput-for-agentic-ai/)

- Artificial Analysis – “NVIDIA Nemotron 3 Super: The New Leader in Open, Efficient Intelligence” (https://artificialanalysis.ai/articles/nvidia-nemotron-3-super-the-new-leader-in-open-efficient-intelligence)

- Fortune – “Morgan Stanley Warns an AI Breakthrough Is Coming in 2026 and Most of the World Is Not Ready” (https://fortune.com/2026/03/13/elon-musk-morgan-stanley-ai-leap-2026/)

- DEV Community – “The LLM and AI Agent Releases That Actually Matter This Week, March 2026” (https://dev.to/aibughunter/the-llm-and-ai-agent-releases-that-actually-matter-this-week-march-2026-5d7i)

- LLM Stats – “Nemotron 3 Super: Pricing, Benchmarks, Architecture and API” (https://llm-stats.com/blog/research/nemotron-3-super-launch)