Part 2 built the episodic memory layer: a time-indexed store of every conversation turn, action, and outcome the agent experienced. That store answers the question “what happened?” But enterprise agents need to answer a different question too: “what do I know?” Episodic memory grows without bound and contains a lot of noise. Semantic memory is the distilled, facts-first knowledge layer that emerges when you extract the signal from the noise.

This part builds a complete semantic memory system using Qdrant as the vector store and Python as the implementation language. By the end, you will have a system that extracts facts from agent interactions, stores them as searchable knowledge, and retrieves the most relevant knowledge at query time using cosine similarity.

Episodic vs Semantic Memory: The Core Distinction

Episodic memory is tied to specific events. “On April 3rd, the user said they prefer TypeScript” is an episodic memory. Semantic memory is the generalised knowledge extracted from those events: “This user prefers TypeScript” is a semantic memory. It is no longer tied to a specific session or time. It is a fact about the user that holds until it changes.

Semantic memory answers questions like:

- What does this user’s system architecture look like?

- What are the compliance constraints on this tenant’s environment?

- What technologies does this team use and avoid?

- What are the known failure modes of this user’s codebase?

These are not things you look up by time. You look them up by conceptual relevance to whatever the agent is currently working on. That is why semantic memory lives in a vector store and is retrieved by similarity search rather than by SQL time queries.
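To make "lookup by conceptual relevance" concrete, here is a minimal sketch of the ranking a vector store performs. It uses hand-made three-dimensional vectors in place of real embeddings; the vectors, the sample facts, and the `rank_by_similarity` name are illustrative assumptions, and a real store would use an index such as HNSW rather than this linear scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, memories, top_k=2):
    """Return the top_k (score, text) pairs, most similar first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in memories]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Toy "embeddings": the first axis loosely means "language choice",
# the last axis loosely means "compliance". Real embeddings are not interpretable.
MEMORIES = [
    ("User prefers TypeScript for new services", [0.9, 0.1, 0.0]),
    ("All services must pass SOC 2 audit", [0.0, 0.2, 0.9]),
    ("Team avoids untyped JavaScript", [0.8, 0.3, 0.1]),
]

if __name__ == "__main__":
    query = [1.0, 0.2, 0.0]  # stands in for "which language should I scaffold in?"
    for score, text in rank_by_similarity(query, MEMORIES):
        print(f"{score:.3f}  {text}")
```

The two language-related facts rank first regardless of when they were stored, which is the property a time-ordered query cannot give you.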

Why Qdrant

Qdrant is a purpose-built vector database written in Rust with first-class support for filtering, payload storage, and named vectors. For agent semantic memory it has three specific advantages over alternatives:

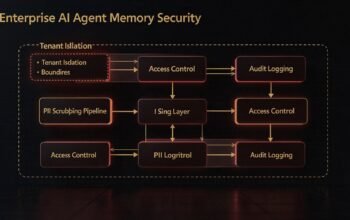

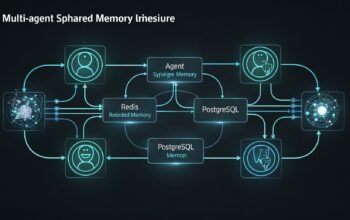

Payload filtering – Qdrant lets you attach arbitrary JSON payloads to vectors and filter on them at query time. You can search for semantically similar memories that also match a specific tenant, user, or knowledge category. This is essential for multi-tenant agents.

Upsert by ID – When a fact changes, you want to update the existing vector rather than accumulate duplicates. Qdrant’s upsert operation by deterministic ID makes this clean. You hash the fact’s key fields to generate a stable ID and upsert on every write.

Named collections – You can maintain separate collections per memory type or per tenant without running separate database instances. Each collection gets its own HNSW index with independently tuned parameters.
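The first two properties can be modelled without a running database. The sketch below is a toy in-memory stand-in, not Qdrant's API: `ToyStore`, its method names, and the sample payloads are hypothetical and exist only to show the behaviour. Writing twice under the same ID replaces the point, and a payload filter restricts which points a query is allowed to see.

```python
class ToyStore:
    """In-memory stand-in for a collection: point ID -> payload dict."""

    def __init__(self):
        self.points: dict[str, dict] = {}

    def upsert(self, point_id: str, payload: dict) -> None:
        # Same ID overwrites the earlier payload instead of adding a duplicate.
        self.points[point_id] = payload

    def filter_points(self, **conditions) -> list[dict]:
        # Keep only payloads whose fields equal every given condition.
        return [
            payload
            for payload in self.points.values()
            if all(payload.get(key) == value for key, value in conditions.items())
        ]


if __name__ == "__main__":
    store = ToyStore()
    store.upsert("acme:api_style", {"tenant_id": "acme", "fact": "Prefers REST"})
    store.upsert("acme:api_style", {"tenant_id": "acme", "fact": "Prefers GraphQL"})
    store.upsert("globex:infra", {"tenant_id": "globex", "fact": "Uses Kubernetes"})

    print(len(store.points))                     # two points, not three
    print(store.filter_points(tenant_id="acme"))  # only acme's current fact
```

In the real system the filter runs inside the vector search, so a tenant's query never scores another tenant's vectors at all.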

Semantic Memory Schema

Each point in the Qdrant collection represents one semantic memory: a discrete fact or piece of knowledge about a user, domain, or entity. The payload carries the structured metadata needed for filtering and display.

# Schema for each Qdrant point (semantic memory unit)
# {
#   "id": "deterministic-uuid-from-hash",    # stable for upsert
#   "vector": [0.023, -0.412, ...],          # 1536-dim embedding of the fact text
#   "payload": {
#     "tenant_id": "acme-corp",
#     "user_id": "user-123",
#     "category": "preference",              # preference | constraint | domain_fact | entity | failure_pattern
#     "subject": "programming_language",     # what the fact is about
#     "fact": "User prefers TypeScript over JavaScript for all new services",
#     "confidence": 0.9,                     # 0.0 to 1.0
#     "source_session_id": "uuid",           # which session this came from
#     "created_at": "2026-04-01T10:00:00Z",
#     "updated_at": "2026-04-09T07:00:00Z",
#     "access_count": 12                     # how many times this memory was retrieved
#   }
# }

flowchart TD

    subgraph Extraction["Fact Extraction Pipeline"]
        EP["Episodic event\n(conversation turn or observation)"]
        LLM["LLM Extractor\nclaude-haiku / gpt-4o-mini"]
        Facts["Discrete facts\nJSON array"]
        EP --> LLM --> Facts
    end

    subgraph Storage["Semantic Memory Store - Qdrant"]
        Hash["Hash key fields\nto stable UUID"]
        Embed["Embed fact text\ntext-embedding-3-small"]
        Upsert["Upsert point\nby stable ID"]
        Facts --> Hash & Embed
        Hash & Embed --> Upsert
        Upsert --> QC[("Qdrant Collection\nsemantic_memories")]
    end

    subgraph Retrieval["Retrieval at Query Time"]
        Q["Agent query string"]
        QE["Embed query"]
        Search["Cosine similarity search\nwith tenant_id filter"]
        Top["Top-k relevant facts\ninjected into context"]
        Q --> QE --> Search --> Top
        QC --> Search
    end

    style Extraction fill:#1e3a5f,color:#fff
    style Storage fill:#166534,color:#fff
    style Retrieval fill:#713f12,color:#fff

Setting Up the Qdrant Collection

# setup_qdrant.py
from qdrant_client import QdrantClient
from qdrant_client.models import (
    Distance,
    VectorParams,
    HnswConfigDiff,
    PayloadSchemaType,
)

client = QdrantClient(url="http://localhost:6333")

COLLECTION = "semantic_memories"
VECTOR_DIM = 1536  # text-embedding-3-small


def create_collection():
    # Note: recreate_collection drops any existing collection with this name,
    # so run this only for first-time setup or a deliberate reset.
    client.recreate_collection(
        collection_name=COLLECTION,
        vectors_config=VectorParams(
            size=VECTOR_DIM,
            distance=Distance.COSINE,
        ),
        hnsw_config=HnswConfigDiff(
            m=16,                       # number of edges per node
            ef_construct=100,           # construction search width - higher = better recall
            full_scan_threshold=10000,  # vector-size threshold in KB; below it a full scan is preferred over HNSW
        ),
    )

    # Create payload indexes for filtering
    client.create_payload_index(COLLECTION, "tenant_id", PayloadSchemaType.KEYWORD)
    client.create_payload_index(COLLECTION, "user_id", PayloadSchemaType.KEYWORD)
    client.create_payload_index(COLLECTION, "category", PayloadSchemaType.KEYWORD)
    client.create_payload_index(COLLECTION, "confidence", PayloadSchemaType.FLOAT)

    print(f"Collection '{COLLECTION}' created with payload indexes.")


if __name__ == "__main__":
    create_collection()

Fact Extraction with an LLM

The key challenge in semantic memory is deciding what to store. You cannot store every sentence as a fact. You need to extract the discrete, durable pieces of knowledge from each interaction. A lightweight LLM call after each significant event handles this well.
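Whatever model performs the extraction, its reply is free text, so it helps to parse it defensively before anything reaches the store. The sketch below is one way to do that under stated assumptions: the model may wrap its JSON in a Markdown code fence, and the five category names are the ones used in this part's schema. `parse_fact_array` and `strip_fence` are hypothetical helper names, not part of any library.

```python
import json

ALLOWED_CATEGORIES = {"preference", "constraint", "domain_fact", "entity", "failure_pattern"}
FENCE = "`" * 3  # Markdown code-fence marker, built this way to keep the example readable


def strip_fence(text: str) -> str:
    """Remove a surrounding Markdown code fence if the reply starts with one."""
    if not text.startswith(FENCE):
        return text
    lines = text.splitlines()
    if lines[-1].strip() == FENCE:
        lines = lines[1:-1]  # drop opening fence (with optional language tag) and closing fence
    else:
        lines = lines[1:]    # opening fence only
    return "\n".join(lines)


def parse_fact_array(raw: str, min_confidence: float = 0.6) -> list[dict]:
    """Parse a model reply into validated fact dicts; return [] on any structural problem."""
    try:
        data = json.loads(strip_fence(raw.strip()))
    except json.JSONDecodeError:
        return []
    if not isinstance(data, list):
        return []

    facts = []
    for item in data:
        if not isinstance(item, dict):
            continue
        fact_text = item.get("fact")
        if not isinstance(fact_text, str) or not fact_text.strip():
            continue
        if item.get("category") not in ALLOWED_CATEGORIES:
            continue
        confidence = item.get("confidence")
        # bool is a subclass of int in Python, so exclude it explicitly
        if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
            continue
        if confidence < min_confidence:
            continue
        facts.append(item)
    return facts
```

Dropping malformed items silently keeps the write path simple; logging the rejects is a reasonable addition if you want to tune the prompt later.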

# fact_extractor.py
import json

import anthropic

extractor = anthropic.Anthropic()

EXTRACTION_PROMPT = """You are a knowledge extraction assistant. Given a piece of text from an AI agent interaction, extract discrete facts that are worth remembering long-term.

Only extract facts that are:
- Durable: will still be true in future sessions
- Specific: about a concrete preference, constraint, entity, or domain fact
- Non-trivial: not obvious from context

Return a JSON array of fact objects. Each object must have:
- category: one of "preference", "constraint", "domain_fact", "entity", "failure_pattern"
- subject: short label for what the fact is about (e.g., "deployment_target", "code_style")
- fact: the fact as a clear, standalone sentence
- confidence: float 0.0 to 1.0 based on how clearly this was stated

Return only the JSON array, no other text. If no durable facts can be extracted, return [].

Text to analyse:
{text}"""


def extract_facts(text: str) -> list[dict]:
    response = extractor.messages.create(
        model="claude-haiku-4-5",
        max_tokens=1024,
        messages=[{
            "role": "user",
            "content": EXTRACTION_PROMPT.format(text=text),
        }],
    )
    raw = response.content[0].text.strip()

    try:
        facts = json.loads(raw)
    except json.JSONDecodeError:
        return []

    # The model may return a non-array or items without a "fact" field; skip those
    # so callers can index fact["fact"] safely.
    if not isinstance(facts, list):
        return []
    return [
        f for f in facts
        if isinstance(f, dict) and "fact" in f and f.get("confidence", 0) >= 0.6
    ]

Semantic Memory Client

# semantic_memory.py
import hashlib
import uuid
from datetime import datetime, timezone

from openai import OpenAI
from qdrant_client import QdrantClient
from qdrant_client.models import Filter, FieldCondition, MatchValue, PointStruct

from fact_extractor import extract_facts

oai = OpenAI()
qdrant = QdrantClient(url="http://localhost:6333")
COLLECTION = "semantic_memories"


def embed(text: str) -> list[float]:
    response = oai.embeddings.create(
        model="text-embedding-3-small",
        input=text[:8000],
    )
    return response.data[0].embedding


def stable_id(tenant_id: str, user_id: str, category: str, subject: str) -> str:
    """Generate a deterministic UUID for a fact so upserts overwrite stale versions."""
    key = f"{tenant_id}:{user_id}:{category}:{subject}"
    digest = hashlib.sha256(key.encode()).hexdigest()
    return str(uuid.UUID(digest[:32]))


class SemanticMemoryClient:
    def __init__(self, tenant_id: str, user_id: str):
        self.tenant_id = tenant_id
        self.user_id = user_id

    def store_from_text(self, text: str, source_session_id: str) -> int:
        """Extract facts from text and upsert them into the semantic store."""
        facts = extract_facts(text)
        if not facts:
            return 0

        points = []
        for fact in facts:
            fact_text = fact["fact"]
            category = fact.get("category", "domain_fact")
            subject = fact.get("subject", "general")
            confidence = float(fact.get("confidence", 0.7))

            point_id = stable_id(self.tenant_id, self.user_id, category, subject)
            vector = embed(fact_text)

            # Check if this point already exists to preserve access_count
            existing = self._get_by_id(point_id)
            access_count = existing["access_count"] if existing else 0

            points.append(PointStruct(
                id=point_id,
                vector=vector,
                payload={
                    "tenant_id": self.tenant_id,
                    "user_id": self.user_id,
                    "category": category,
                    "subject": subject,
                    "fact": fact_text,
                    "confidence": confidence,
                    "source_session_id": source_session_id,
                    "created_at": existing["created_at"] if existing else datetime.now(timezone.utc).isoformat(),
                    "updated_at": datetime.now(timezone.utc).isoformat(),
                    "access_count": access_count,
                },
            ))

        qdrant.upsert(collection_name=COLLECTION, points=points)
        return len(points)

    def retrieve(self, query: str, top_k: int = 10, category: str | None = None) -> list[dict]:
        """Retrieve the most semantically relevant facts for a query."""
        query_vector = embed(query)

        must_conditions = [
            FieldCondition(key="tenant_id", match=MatchValue(value=self.tenant_id)),
            FieldCondition(key="user_id", match=MatchValue(value=self.user_id)),
        ]
        if category:
            must_conditions.append(
                FieldCondition(key="category", match=MatchValue(value=category))
            )

        results = qdrant.search(
            collection_name=COLLECTION,
            query_vector=query_vector,
            query_filter=Filter(must=must_conditions),
            limit=top_k,
            score_threshold=0.70,  # discard low-relevance results
            with_payload=True,
        )

        memories = []
        ids_to_increment = []
        for hit in results:
            payload = hit.payload
            memories.append({
                "id": hit.id,
                "fact": payload["fact"],
                "category": payload["category"],
                "subject": payload["subject"],
                "confidence": payload["confidence"],
                "similarity": hit.score,
                "updated_at": payload["updated_at"],
            })
            ids_to_increment.append(hit.id)

        # Increment access counts async in production - sync here for clarity
        self._increment_access_counts(ids_to_increment)
        return memories

    def retrieve_all(self, category: str | None = None) -> list[dict]:
        """Scroll through all semantic memories for this user (for consolidation)."""
        must_conditions = [
            FieldCondition(key="tenant_id", match=MatchValue(value=self.tenant_id)),
            FieldCondition(key="user_id", match=MatchValue(value=self.user_id)),
        ]
        if category:
            must_conditions.append(
                FieldCondition(key="category", match=MatchValue(value=category))
            )

        results, _ = qdrant.scroll(
            collection_name=COLLECTION,
            scroll_filter=Filter(must=must_conditions),
            limit=1000,
            with_payload=True,
        )
        return [{"id": r.id, **r.payload} for r in results]

    def delete(self, point_id: str):
        qdrant.delete(
            collection_name=COLLECTION,
            points_selector=[point_id],
        )

    def _get_by_id(self, point_id: str) -> dict | None:
        results = qdrant.retrieve(
            collection_name=COLLECTION,
            ids=[point_id],
            with_payload=True,
        )
        if results:
            return results[0].payload
        return None

    def _increment_access_counts(self, point_ids: list[str]):
        """Increment access_count payload field for retrieved points."""
        for pid in point_ids:
            existing = self._get_by_id(pid)
            if existing:
                qdrant.set_payload(
                    collection_name=COLLECTION,
                    payload={"access_count": existing.get("access_count", 0) + 1},
                    points=[pid],
                )

Integrating Semantic Memory into the Agent

The agent retrieves semantic memories alongside episodic memories at session start, and triggers fact extraction after high-importance interactions.

# agent_with_semantic.py
import anthropic

from semantic_memory import SemanticMemoryClient

client = anthropic.Anthropic()


def format_semantic_context(memories: list[dict]) -> str:
    if not memories:
        return ""

    by_category: dict[str, list] = {}
    for m in memories:
        by_category.setdefault(m["category"], []).append(m)

    sections = ["\n--- Known facts about this user and their environment ---"]
    labels = {
        "preference": "Preferences",
        "constraint": "Constraints and requirements",
        "domain_fact": "Domain knowledge",
        "entity": "Known entities (projects, systems, people)",
        "failure_pattern": "Known failure patterns to avoid",
    }

    for cat, label in labels.items():
        facts = by_category.get(cat, [])
        if facts:
            sections.append(f"\n{label}:")
            for f in facts:
                sections.append(f" - {f['fact']}")

    sections.append("--- End of known facts ---\n")
    return "\n".join(sections)


class AgentWithSemanticMemory:
    def __init__(self, tenant_id: str, user_id: str, session_id: str):
        self.tenant_id = tenant_id
        self.user_id = user_id
        self.session_id = session_id
        self.semantic = SemanticMemoryClient(tenant_id, user_id)
        self.history = []
        self.turn_count = 0

    def chat(self, user_message: str) -> str:
        self.turn_count += 1

        # Retrieve relevant semantic memories for this query
        semantic_facts = self.semantic.retrieve(query=user_message, top_k=12)
        semantic_context = format_semantic_context(semantic_facts)

        system = f"""You are a helpful AI agent with persistent long-term memory.
You have access to learned facts about this user and their environment.
Use this knowledge to give more relevant, context-aware responses.
{semantic_context}"""

        self.history.append({"role": "user", "content": user_message})

        response = client.messages.create(
            model="claude-sonnet-4-20250514",
            max_tokens=4096,
            system=system,
            messages=self.history,
        )

        assistant_message = response.content[0].text
        self.history.append({"role": "assistant", "content": assistant_message})

        # Extract facts every 3 turns or on high-signal exchanges
        if self.turn_count % 3 == 0 or self._is_high_signal(user_message):
            combined_text = f"User: {user_message}\nAssistant: {assistant_message}"
            stored = self.semantic.store_from_text(combined_text, self.session_id)
            if stored:
                print(f"[memory] Stored {stored} new semantic facts")

        return assistant_message

    def _is_high_signal(self, text: str) -> bool:
        """Heuristic: longer, statement-heavy messages are more likely to contain facts."""
        signal_phrases = ["prefer", "always", "never", "requirement", "must", "constraint",
                          "we use", "we don't", "our team", "our system", "the project"]
        text_lower = text.lower()
        return len(text) > 200 or any(p in text_lower for p in signal_phrases)

sequenceDiagram

    participant U as User
    participant A as Agent
    participant SM as SemanticMemoryClient
    participant Q as Qdrant
    participant FE as FactExtractor
    participant LLM as Claude (Sonnet and Haiku)

    U->>A: chat("Our auth service uses OAuth2 and must stay stateless")
    A->>SM: retrieve(query=user_message, top_k=12)
    SM->>Q: vector search + tenant_id filter
    Q-->>SM: top-k fact points
    SM-->>A: formatted fact list
    A->>LLM: system(with facts) + messages
    LLM-->>A: assistant response
    A-->>U: response

    Note over A: High-signal message detected
    A->>FE: extract_facts(user+assistant text)
    FE->>LLM: extraction prompt (claude-haiku)
    LLM-->>FE: JSON fact array
    FE-->>A: [{category: constraint, subject: auth_service, fact: "Auth service uses OAuth2 and must stay stateless", confidence: 0.95}]
    A->>SM: store_from_text(text, session_id)
    SM->>Q: upsert by stable ID

Memory Categories in Practice

| Category | What it stores | Example fact | Typical confidence |
|---|---|---|---|
| preference | How the user likes things done | “User formats all API responses in camelCase JSON” | 0.8 – 1.0 |
| constraint | Hard requirements and limits | “All services must pass SOC 2 audit before production deploy” | 0.9 – 1.0 |
| domain_fact | Facts about the user’s domain or system | “The payment service processes approximately 50,000 transactions per day” | 0.7 – 0.9 |
| entity | Named systems, people, projects | “Project Helix is the internal name for the new billing engine” | 0.8 – 1.0 |
| failure_pattern | Things that went wrong and should be avoided | “N+1 queries against the orders table caused production timeouts in March 2026” | 0.7 – 0.9 |

Handling Contradictions

Facts change. A user who preferred REST APIs might switch to GraphQL. An agent with a naive upsert strategy will accumulate contradictory facts about the same subject. The stable ID approach handles most of this: because the ID is derived from tenant_id + user_id + category + subject, a new fact about the same subject overwrites the old one rather than creating a duplicate.
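A quick way to see this behaviour, and its limit, is to call the ID function directly. The sketch repeats the `stable_id` helper from the client above so that it runs on its own; the tenant, user, and subject values are made-up examples.

```python
import hashlib
import uuid


def stable_id(tenant_id: str, user_id: str, category: str, subject: str) -> str:
    """Same helper as in semantic_memory.py: hash the key fields into a UUID string."""
    key = f"{tenant_id}:{user_id}:{category}:{subject}"
    digest = hashlib.sha256(key.encode()).hexdigest()
    return str(uuid.UUID(digest[:32]))


if __name__ == "__main__":
    # Two different facts about the same subject: the fact text is not part of the key,
    # so both map to one ID and the second upsert replaces the first.
    rest = stable_id("acme-corp", "user-123", "preference", "api_style")
    graphql = stable_id("acme-corp", "user-123", "preference", "api_style")
    print(rest == graphql)

    # If the extractor labels the subject differently, the IDs diverge and
    # both facts survive side by side.
    relabelled = stable_id("acme-corp", "user-123", "preference", "api_protocol")
    print(rest == relabelled)
```

The second case is the gap: the overwrite only works when the extractor chooses the same category and subject label both times.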

For cases where the extractor labels the same subject inconsistently, so the IDs do not collide, add a contradiction check during extraction. After generating facts, run a secondary prompt that compares each new fact against existing facts for the same user and flags conflicts. The sketch below takes the conservative route and holds back conflicting new facts for review. Once a conflict is confirmed as a genuine update, delete the old point before upserting the new one.

# Method on SemanticMemoryClient (semantic_memory.py). Assumes the module also has:
#   import json
#   import anthropic
#   client = anthropic.Anthropic()
def store_with_contradiction_check(
    self, facts: list[dict], source_session_id: str
):
    existing = self.retrieve_all()
    existing_texts = [e["fact"] for e in existing]

    # Only run conflict check if there are existing facts
    if existing_texts:
        existing_block = "\n".join(f"- {t}" for t in existing_texts[:50])
        new_block = "\n".join(f"- {f['fact']}" for f in facts)

        check_prompt = f"""You are checking for contradictions between new facts and existing knowledge.

Existing facts:
{existing_block}

New facts to add:
{new_block}

Return a JSON array of objects with:
- new_fact: the new fact text
- contradicts: true or false
- reason: brief explanation if contradicts is true

Return only JSON."""

        response = client.messages.create(
            model="claude-haiku-4-5",
            max_tokens=512,
            messages=[{"role": "user", "content": check_prompt}],
        )
        checks = json.loads(response.content[0].text)
        conflict_facts = {c["new_fact"] for c in checks if c.get("contradicts")}

        # Filter out contradicted facts from the batch
        # In production: log conflicts and alert for human review
        facts = [f for f in facts if f["fact"] not in conflict_facts]

    # store_from_text_direct is not defined in this part. It is assumed to be the
    # upsert loop of store_from_text, taking already-extracted facts as input.
    return self.store_from_text_direct(facts, source_session_id)

Confidence Decay and Memory Freshness

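Facts that have not been confirmed recently should carry less weight. A confidence score of 0.95 on a preference stated two years ago is not reliable without recent reinforcement. Run a weekly background job that decays confidence scores for facts that have not been confirmed in the past 90 days. The job below uses updated_at as its freshness signal, which changes only when a fact is re-extracted and upserted; retrieval increments access_count but does not refresh updated_at.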
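It is worth checking how fast a multiplicative decay actually erodes a score before scheduling it. The sketch below assumes the same numbers as the job that follows, a 0.85 multiplier per run and a floor of 0.1, and that a stale fact is never reconfirmed in between. `decayed_confidence` and `runs_until_floor` are hypothetical helper names used only for this calculation.

```python
def decayed_confidence(initial: float, runs: int, rate: float = 0.85, floor: float = 0.1) -> float:
    """Confidence after `runs` consecutive decay passes, clamped at `floor`."""
    confidence = initial
    for _ in range(runs):
        confidence = max(floor, confidence * rate)
    return confidence


def runs_until_floor(initial: float, rate: float = 0.85, floor: float = 0.1) -> int:
    """Number of decay passes before the confidence is pinned at `floor`."""
    if not 0 < rate < 1:
        raise ValueError("rate must be between 0 and 1 (exclusive)")
    runs = 0
    confidence = initial
    while confidence > floor:
        confidence = max(floor, confidence * rate)
        runs += 1
    return runs


if __name__ == "__main__":
    for n in (1, 4, 8, 14):
        print(n, round(decayed_confidence(0.95, n), 4))
    print("runs until floor:", runs_until_floor(0.95))
```

Under these assumptions a fact that starts at 0.95 drops below 0.5 after four runs and is pinned at the 0.1 floor after fourteen, so with a weekly schedule an unconfirmed fact loses most of its weight within about a month of crossing the 90-day threshold.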

from datetime import datetime, timezone, timedelta

from qdrant_client import QdrantClient
from qdrant_client.models import Filter, FieldCondition, MatchValue

COLLECTION = "semantic_memories"


def decay_old_memories(client: QdrantClient, tenant_id: str, days_threshold: int = 90):
    """Reduce confidence of facts not updated in the last N days."""
    cutoff = (datetime.now(timezone.utc) - timedelta(days=days_threshold)).isoformat()

    results, _ = client.scroll(
        collection_name=COLLECTION,
        scroll_filter=Filter(must=[
            FieldCondition(key="tenant_id", match=MatchValue(value=tenant_id)),
        ]),
        limit=5000,
        with_payload=True,
    )

    decayed = 0
    for point in results:
        # ISO-8601 strings in the same UTC format compare chronologically as text.
        # A missing updated_at ("") sorts before any cutoff and is treated as stale.
        updated_at = point.payload.get("updated_at", "")
        if updated_at < cutoff:
            old_confidence = point.payload.get("confidence", 0.5)
            new_confidence = max(0.1, old_confidence * 0.85)  # 15% decay
            client.set_payload(
                collection_name=COLLECTION,
                payload={"confidence": new_confidence},
                points=[point.id],
            )
            decayed += 1

    print(f"Decayed confidence for {decayed} stale semantic memories")
    return decayed

Retrieval Quality: Tuning the Score Threshold

The score_threshold=0.70 in the retrieval call determines the minimum cosine similarity for a fact to be returned. Setting this too low floods the context with loosely related facts. Setting it too high causes the agent to miss relevant knowledge.

| Threshold | Behaviour | Use when |
|---|---|---|
| 0.85+ | Only very close matches returned | Precise factual queries, entity lookups |
| 0.75 – 0.85 | Good balance of precision and recall | General agent context injection (recommended default) |
| 0.65 – 0.75 | Broader recall, more noise | Open-ended exploration or when facts are sparse |
| Below 0.65 | Too many unrelated results | Avoid for context injection |

Start at 0.75 and evaluate retrieval quality by logging which facts are injected into context and whether the agent uses them correctly. Adjust per category: constraint facts can be at 0.70 (you want to catch them even loosely) while domain_fact facts can be at 0.80 (higher precision needed).
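One way to apply per-category thresholds is a post-filter over the retrieved list. The sketch below assumes the list-of-dicts shape returned by `retrieve`, with `category` and `similarity` keys, and assumes the store itself was queried with a threshold no higher than the loosest category (0.70 here) so the filter has something to cut. `CATEGORY_THRESHOLDS` and `apply_category_thresholds` are hypothetical names.

```python
DEFAULT_THRESHOLD = 0.75

# Per-category overrides taken from the guidance above; everything else uses the default.
CATEGORY_THRESHOLDS = {
    "constraint": 0.70,   # catch constraints even on a loose match
    "domain_fact": 0.80,  # require a closer match for domain facts
}


def threshold_for(category: str) -> float:
    """Minimum similarity required for a memory of this category."""
    return CATEGORY_THRESHOLDS.get(category, DEFAULT_THRESHOLD)


def apply_category_thresholds(memories: list[dict]) -> list[dict]:
    """Keep only memories whose similarity meets their category's threshold."""
    return [m for m in memories if m["similarity"] >= threshold_for(m["category"])]


if __name__ == "__main__":
    hits = [
        {"category": "constraint", "similarity": 0.72, "fact": "Services must stay stateless"},
        {"category": "domain_fact", "similarity": 0.78, "fact": "Payments run 50k tx/day"},
        {"category": "preference", "similarity": 0.76, "fact": "Prefers TypeScript"},
    ]
    for m in apply_category_thresholds(hits):
        print(m["category"], m["similarity"], m["fact"])
```

Logging what the filter drops alongside what it keeps gives you the data needed to adjust these numbers.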

What Is Next

Part 4 builds the procedural memory layer in C#: the system that stores successful tool sequences and learned problem-solving patterns so agents can improve their approach over time rather than rediscovering the same solutions in every session.

References

- Qdrant – “Vector Database Documentation” (https://qdrant.tech/documentation/)

- Qdrant – “Python Client Reference” (https://python-client.qdrant.tech/)

- OpenAI – “Embeddings Guide” (https://platform.openai.com/docs/guides/embeddings)

- Anthropic – “Claude Models Overview” (https://docs.anthropic.com/en/docs/about-claude/models/overview)

- arXiv – “Generative Agents: Interactive Simulacra of Human Behavior” (https://arxiv.org/abs/2304.03442)