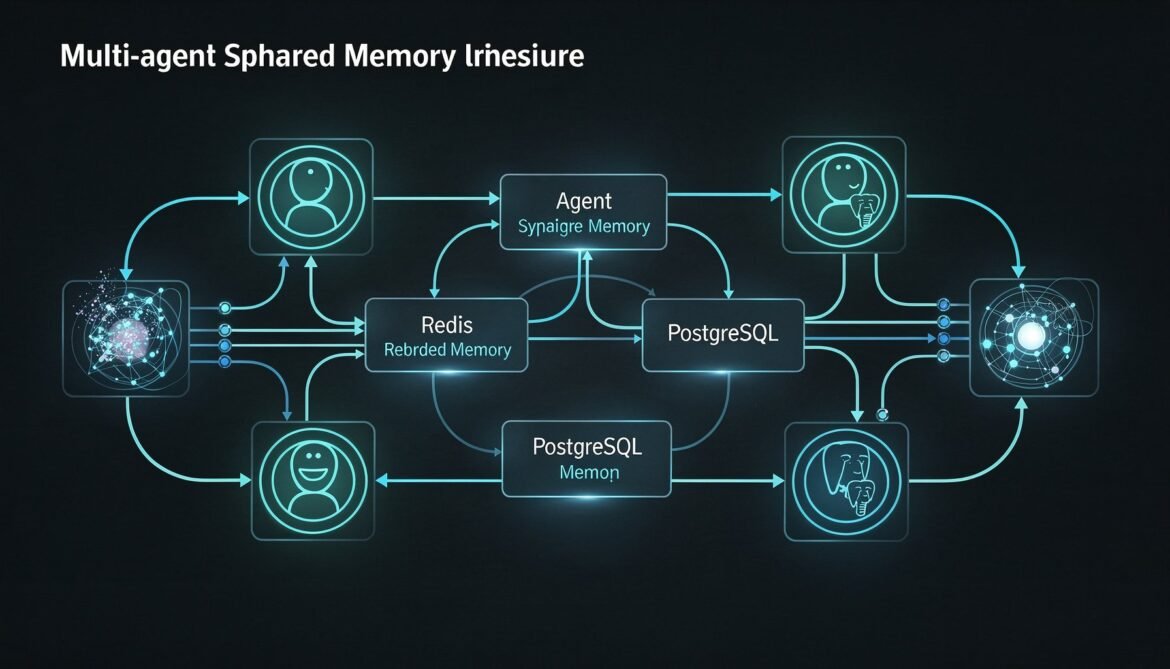

Single-agent memory is only the beginning. Enterprise systems run fleets of specialised agents that need to share knowledge without duplicating work. This part builds a shared memory architecture in Python, using Redis for low-latency coordination and PostgreSQL for durable cross-agent event history.

Tag: Python

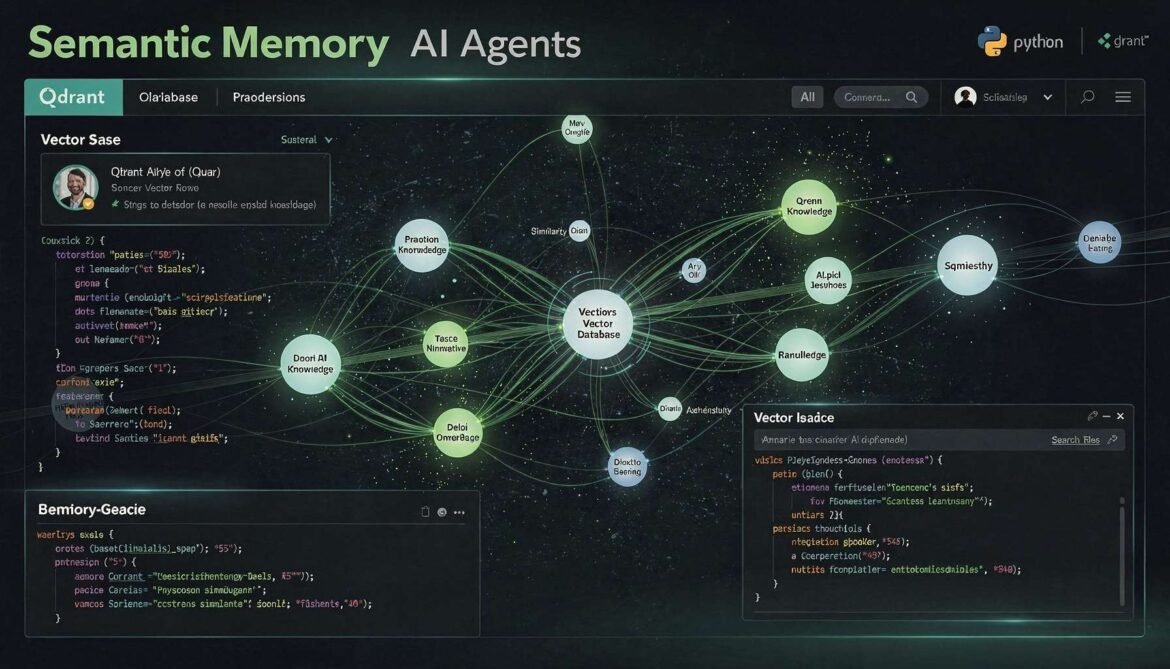

AI Agents with Memory Part 3: Semantic Memory – Building a Long-Term Knowledge Layer with Qdrant and Python

Episodic memory records what happened. Semantic memory stores what the agent has learned. This part builds a production semantic memory layer using Qdrant and Python, with fact extraction, importance-weighted upserts, and similarity retrieval that lets agents build genuine knowledge about users and domains over time.

The April 2026 Developer Stack: TypeScript Tops GitHub, Rust Rewrites the Toolchain, and Copilot Goes Agentic

April 2026 marks a turning point for software development: TypeScript claims the top spot on GitHub, Rust powers the JavaScript toolchain, Python gets free threading, and GitHub Copilot transitions from autocomplete tool to autonomous coding agent.

Semantic Caching with Redis 8.6: Vector Similarity Matching for LLM Cost Optimization in Production

Semantic caching operates above the model layer, using vector embeddings to match similar queries to previously computed responses. With Redis 8.6, cache hit rates of 80 percent or higher are achievable, and every hit is served without calling the LLM at all. This part covers the full architecture, similarity thresholds, cache invalidation, and production implementations in both Node.js and Python.

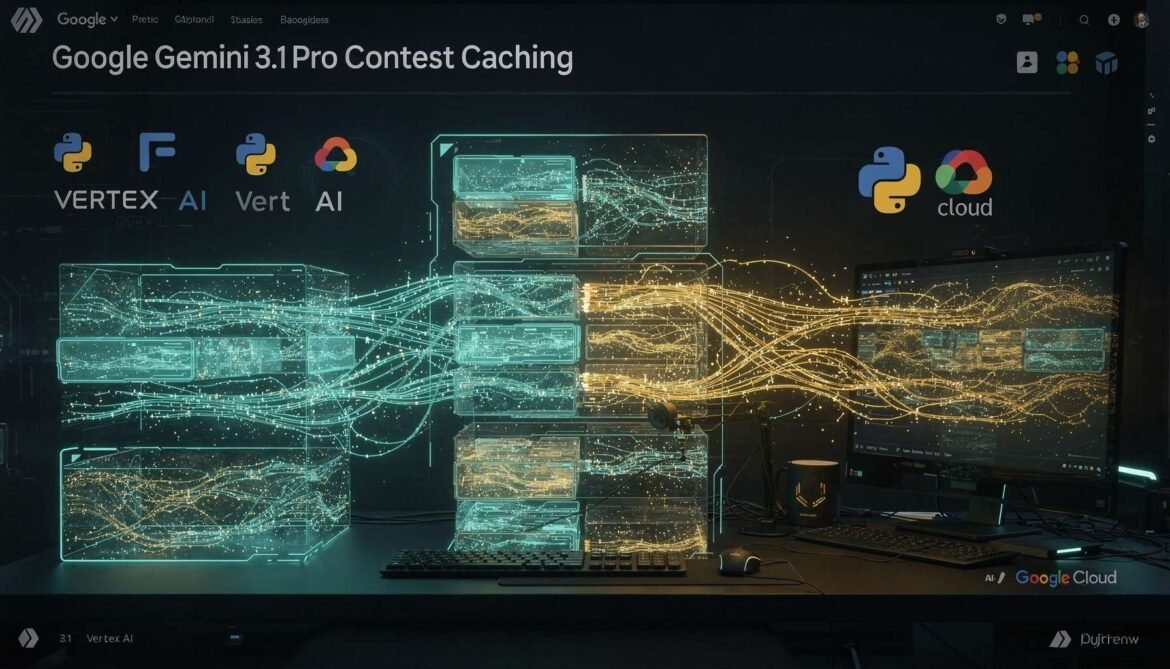

Context Caching with Gemini 3.1 Pro and Flash-Lite: Implicit vs Explicit Caching, Storage Costs, and Python Production Implementation

Google Gemini 3.1 Pro and Flash-Lite offer both implicit and explicit context caching, with the most generous default TTL of any major provider at one hour. This part covers how both modes work, how to account for storage costs, and a complete Python production implementation for Vertex AI and the Gemini API.

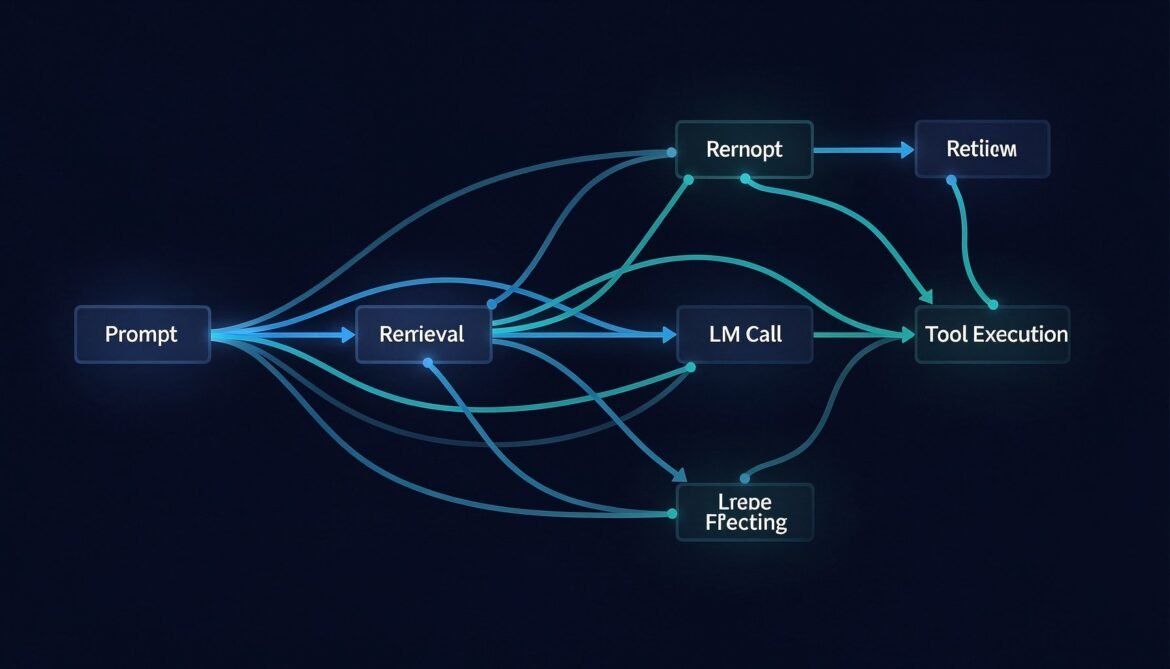

Distributed Tracing for LLM Applications with OpenTelemetry

You cannot fix what you cannot see. This post walks through instrumenting a full LLM pipeline with OpenTelemetry in Node.js, Python, and C# — capturing every span from user request through retrieval, model call, tool execution, and response.

OpenClaw Complete Guide Part 4: Building Your First Custom Skill

Learn how to build a custom OpenClaw skill from scratch. This post walks through writing SKILL.md, configuring SOUL.md and USER.md, and testing real developer workflow skills with working Node.js and Python examples. Part 4 of the complete OpenClaw developer series.

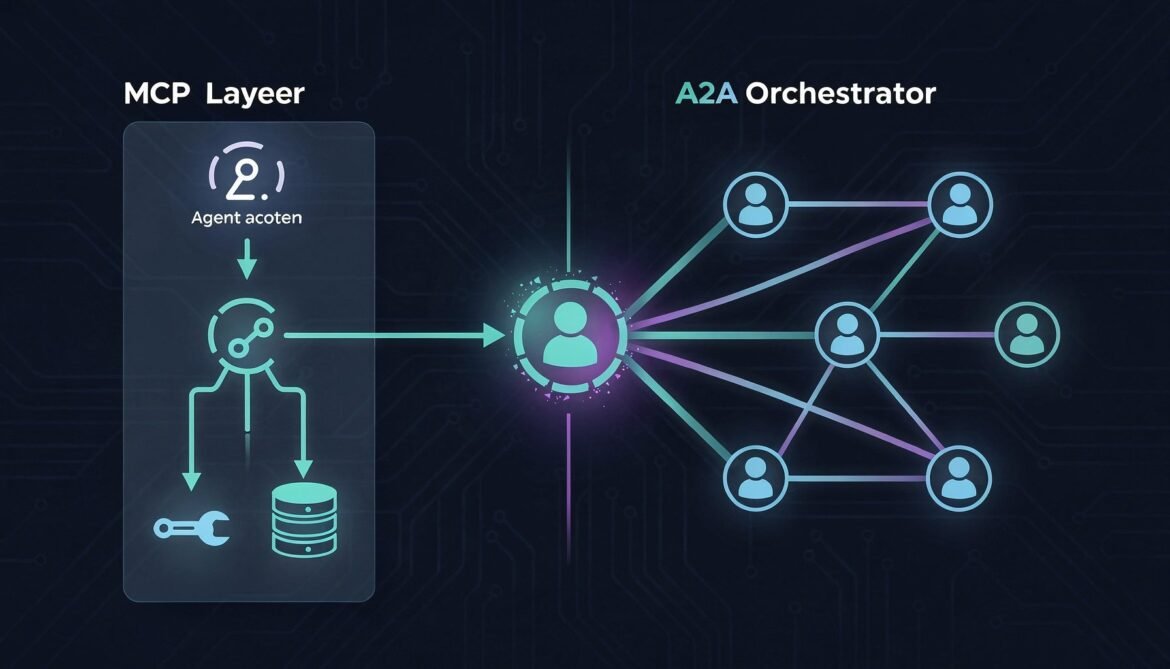

MCP and A2A Together: The Complete Agentic Stack (Part 7 of 8)

Combine MCP and A2A into one unified agentic stack. This post shows exactly where each protocol belongs and how they work together in a real enterprise workflow, then provides a complete Node.js implementation that uses both simultaneously.

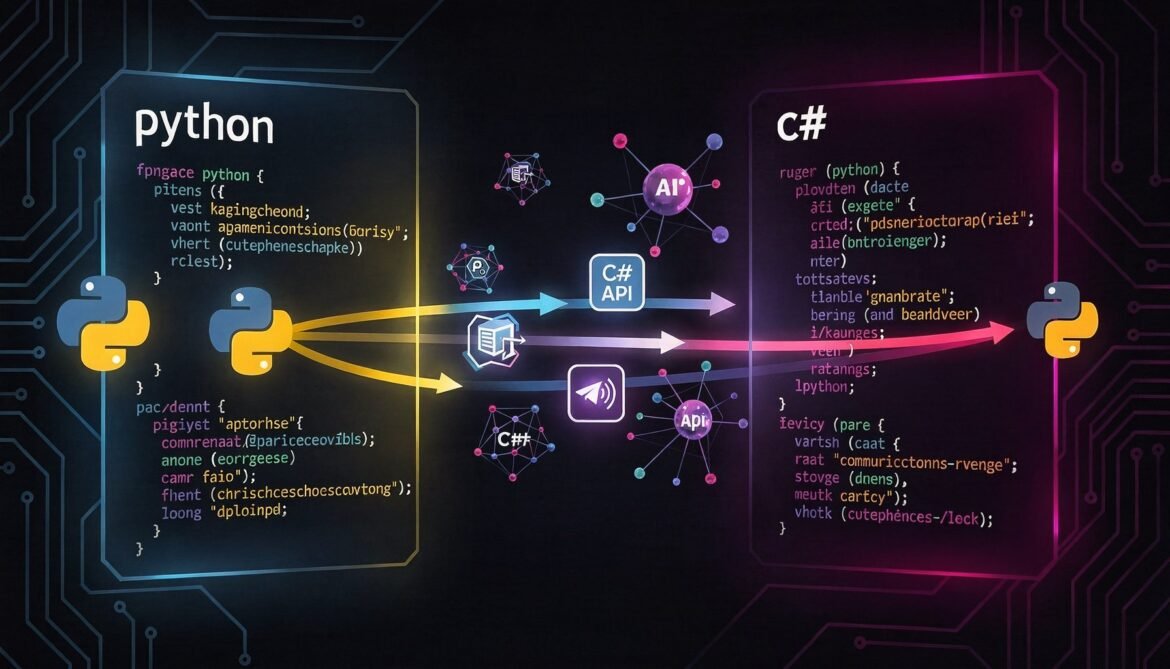

Building A2A Agent Servers in Python and C# (Part 4 of 8)

Implement a fully A2A-compliant agent server in both Python (FastAPI) and C# (ASP.NET Core). Same Inventory Management Agent from Part 3, same architecture, two more languages. Production-ready patterns for enterprise teams.

Single Agent Implementation with Semantic Kernel: Complete Guide to Building Autonomous Agents (Part 3 of 8)

With your Azure AI Foundry development environment properly configured, you are ready to build your first autonomous agent using Semantic Kernel. This article provides comprehensive