The previous six parts built a complete agent memory system: episodic storage, semantic knowledge, procedural learning, consolidation, and multi-agent sharing. Every one of those layers stores sensitive information. Conversation history contains business context, trade secrets, and personal details. Semantic memory contains distilled facts about users and their organisations. Procedural memory contains operational patterns for production systems.

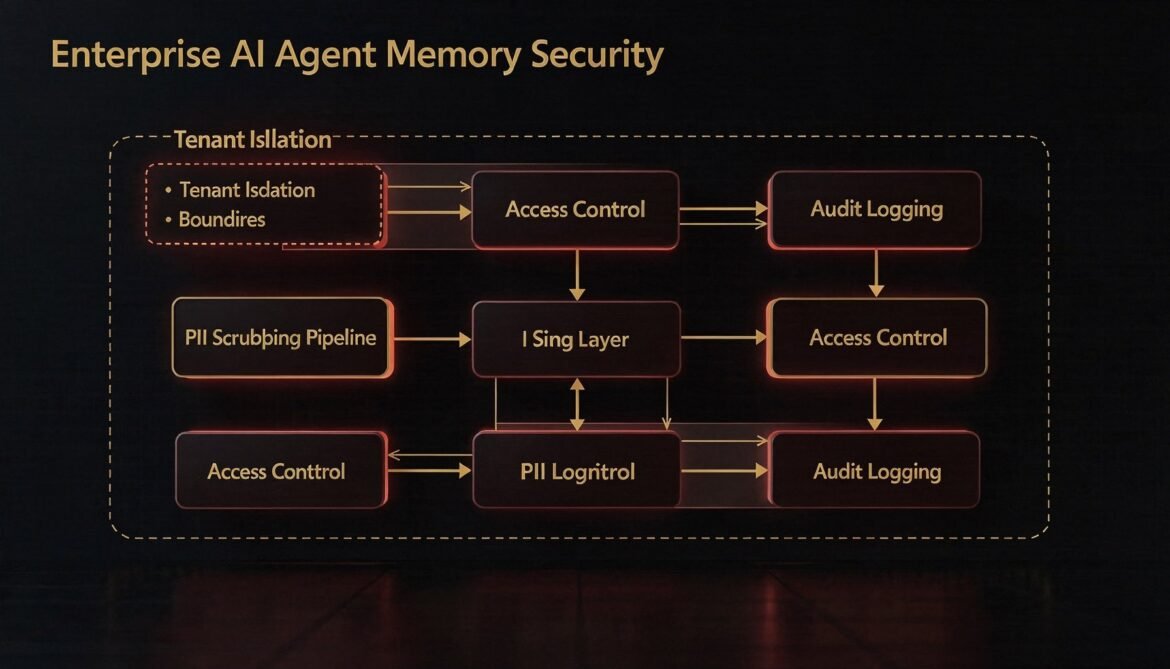

In enterprise environments this means the memory layer is subject to data residency requirements, privacy regulations like GDPR and CCPA, internal access control policies, and audit obligations. A memory system that lacks a proper security layer is not production-ready regardless of how well the retrieval works. This part builds that layer in Node.js: tenant isolation enforced at the database level, PII scrubbing before any event reaches persistent storage, role-based access control for shared memory scopes, and tamper-evident audit logging.

The Four Security Requirements

Tenant isolation – Every query against every memory store must be scoped to a single tenant. Cross-tenant data must never be accessible, even when the application has bugs. Isolation must be enforced at the database level, not only in the application layer.

PII scrubbing – Before any event is written to persistent storage, personally identifiable information must be detected and either redacted or pseudonymised. This applies to episodic events, extracted semantic facts, and procedure records. The scrubbing pipeline must run inline in the write path and complete before the write happens. It must not run as an asynchronous post-process.

Access control – In multi-agent and multi-user environments, not every agent or user should be able to read or write every memory scope. Role-based access control governs which agents can access which scopes, and what operations they can perform.

Audit logging – Every read and write to the memory layer must produce an immutable audit record. The log must capture who accessed what, when, and from which agent. For regulated industries, this log must be tamper-evident and retained for a defined period.

```mermaid
flowchart TD
    subgraph WritePath["Write Path - Every Event"]
        WI["Incoming event\n(content, metadata)"]
        AC1["Access control check\nDoes this agent have write permission?"]
        PII["PII scrubbing pipeline\nDetect and redact"]
        TI["Tenant ID enforcement\nInject tenant_id from auth context"]
        DB["Write to store\nPostgreSQL / Qdrant"]
        AL1["Audit log write\nimmutable append"]
        WI --> AC1 --> PII --> TI --> DB --> AL1
    end
    subgraph ReadPath["Read Path - Every Query"]
        RI["Incoming query"]
        AC2["Access control check\nDoes this agent have read permission?"]
        TF["Tenant filter enforcement\nRow-level security"]
        RES["Query results"]
        AL2["Audit log read\nimmutable append"]
        RI --> AC2 --> TF --> RES --> AL2
    end
    style WritePath fill:#1e3a5f,color:#fff
    style ReadPath fill:#3b0764,color:#fff
```

Row-Level Security in PostgreSQL

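Application-level tenant filtering is fragile. A missing WHERE clause, an ORM misconfiguration, or a join that bypasses filters can expose cross-tenant data. The more robust approach is to enforce tenant isolation in the database itself, using PostgreSQL Row Level Security (RLS). With RLS enabled, a role that is subject to the policies cannot read or write rows outside the current tenant context, whatever SQL the application sends.

Some roles skip the policies entirely: superusers, roles with BYPASSRLS, and the table owner (unless FORCE ROW LEVEL SECURITY is set). For this reason the application connects as a dedicated, unprivileged role.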

```sql
-- Enable RLS on all memory tables
ALTER TABLE episodic_memories ENABLE ROW LEVEL SECURITY;
ALTER TABLE shared_episodic_memories ENABLE ROW LEVEL SECURITY;
ALTER TABLE procedural_memories ENABLE ROW LEVEL SECURITY;

-- Create a dedicated application role (not a superuser, not the table owner)
CREATE ROLE agent_memory_app LOGIN PASSWORD 'strong-password-here';

-- Policy: the application role can only see rows matching the current tenant setting.
-- With no WITH CHECK clause, the USING expression also applies to INSERT and UPDATE.
-- current_setting() raises an error if the setting was never defined in the session,
-- so a missing tenant context fails closed.
CREATE POLICY tenant_isolation ON episodic_memories
  FOR ALL
  TO agent_memory_app
  USING (tenant_id = current_setting('app.current_tenant_id'));

CREATE POLICY tenant_isolation ON shared_episodic_memories
  FOR ALL
  TO agent_memory_app
  USING (tenant_id = current_setting('app.current_tenant_id'));

CREATE POLICY tenant_isolation ON procedural_memories
  FOR ALL
  TO agent_memory_app
  USING (tenant_id = current_setting('app.current_tenant_id'));

-- Grant table access to the application role
GRANT SELECT, INSERT, UPDATE, DELETE
  ON episodic_memories, shared_episodic_memories, procedural_memories
  TO agent_memory_app;
```

The application sets the tenant context at the start of every transaction, before any query runs. Because set_config(..., true) is transaction-local, the reliable pattern is to check out a pooled client per request and inject the tenant inside an explicit transaction. The setting then covers every query in the request and is cleared before the connection returns to the pool.
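The policies compare every row against whatever string the application passes to set_config(), so that string is the tenant boundary. It is therefore worth rejecting an empty or malformed tenant ID before a connection is even checked out.

Below is a minimal guard. It assumes tenant IDs are lowercase slugs or UUIDs; TENANT_ID_PATTERN and assertValidTenantId are hypothetical names, not part of the series' code.

```javascript
// Hypothetical tenant ID format: lowercase letters, digits, and hyphens, 3-64 chars
// (this also admits lowercase UUIDs). Adjust to the IDs your identity provider issues.
const TENANT_ID_PATTERN = /^[a-z0-9][a-z0-9-]{2,63}$/;

// Fail closed: never open a tenant context for an empty, missing, or malformed ID.
function assertValidTenantId(tenantId) {
  if (typeof tenantId !== 'string' || !TENANT_ID_PATTERN.test(tenantId)) {
    throw new Error('Refusing to open a tenant context without a valid tenant ID');
  }
  return tenantId;
}

// Usage sketch: call it first inside withTenantClient(), before pool.connect().
// assertValidTenantId(tenantId);
```

The query itself is already parameterised, so the guard is not about SQL injection. Its job is to stop a blank or unexpected value from becoming the tenant context.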

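```javascript
// tenant-pool.js - Connection pool with per-request tenant injection
import pg from 'pg';

const { Pool } = pg;

const pool = new Pool({
  connectionString: process.env.DATABASE_URL,
  max: 20,
});

// Run `callback` on a client whose transaction carries the tenant context.
// Always use this instead of pool.query() directly.
export async function withTenantClient(tenantId, callback) {
  const client = await pool.connect();
  try {
    // set_config(..., true) is transaction-local, so the tenant context and the
    // callback's queries must share one explicit transaction. Without BEGIN, each
    // query autocommits and the setting is gone after the set_config statement.
    await client.query('BEGIN');
    await client.query(
      `SELECT set_config('app.current_tenant_id', $1, true)`,
      [tenantId]
    );
    const result = await callback(client);
    await client.query('COMMIT'); // also discards the tenant setting before release
    return result;
  } catch (err) {
    await client.query('ROLLBACK');
    throw err;
  } finally {
    client.release();
  }
}

// Example usage
// const rows = await withTenantClient(tenantId, async (client) => {
//   const result = await client.query(
//     'SELECT * FROM episodic_memories WHERE user_id = $1', [userId]);
//   return result.rows;
// });
```

PII Scrubbing Pipeline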

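PII scrubbing runs inline in the write path, before any content reaches the database. The pipeline uses two complementary approaches:

- Pattern-based detection for structured PII: email addresses, phone numbers, credit card numbers, and social security numbers.
- LLM-based detection for unstructured PII that patterns cannot reliably catch: names, addresses, and account references.

The LLM pass sends pattern-scrubbed text to an external model API. It therefore only fits deployments where that data flow is itself permitted by the residency and privacy requirements above.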
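A bare 13-19 digit pattern also matches order numbers, timestamps, and other long identifiers. One common way to cut those false positives is to redact a candidate only when it passes the Luhn checksum that payment card numbers carry.

Below is a minimal sketch; CARD_CANDIDATE, passesLuhn, and redactCards are hypothetical names. It trades recall for precision: a mistyped card number that fails the checksum is left unredacted.

```javascript
// Hypothetical candidate pattern: 13-19 digits, single spaces or hyphens allowed
// between digits (never trailing, so surrounding whitespace is preserved).
const CARD_CANDIDATE = /\b\d(?:[ -]?\d){12,18}\b/g;

// Luhn checksum: from the rightmost digit, double every second digit,
// subtract 9 from any doubled value above 9, and require a total divisible by 10.
function passesLuhn(candidate) {
  const digits = candidate.replace(/\D/g, '');
  if (digits.length < 13 || digits.length > 19) return false;
  let sum = 0;
  let doubleIt = false;
  for (let i = digits.length - 1; i >= 0; i--) {
    let d = Number(digits[i]);
    if (doubleIt) {
      d *= 2;
      if (d > 9) d -= 9;
    }
    sum += d;
    doubleIt = !doubleIt;
  }
  return sum % 10 === 0;
}

// Redact only candidates that pass the checksum; other long numbers stay intact.
function redactCards(text) {
  let count = 0;
  const scrubbed = text.replace(CARD_CANDIDATE, (match) => {
    if (!passesLuhn(match)) return match;
    count += 1;
    return '[CARD]';
  });
  return { scrubbed, count };
}
```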

```javascript
// pii-scrubber.js
import Anthropic from '@anthropic-ai/sdk';

const anthropic = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

// --- Pattern-based redaction (fast, runs first) ---
// Order matters: specific patterns run before broader ones, so the phone
// pattern cannot consume fragments of card numbers or SSNs.
const PII_PATTERNS = [
  // Email addresses
  { pattern: /\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b/g, label: '[EMAIL]' },
  // Card-like numbers: 13-19 digits, optional single space/hyphen between digits.
  // No Luhn check is applied, so other long numeric IDs are redacted too.
  { pattern: /\b\d(?:[ -]?\d){12,18}\b/g, label: '[CARD]' },
  // US Social Security Numbers
  { pattern: /\b\d{3}-\d{2}-\d{4}\b/g, label: '[SSN]' },
  // UK National Insurance numbers, with or without spaces
  { pattern: /\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b/g, label: '[NI]' },
  // IPv4 addresses
  { pattern: /\b(?:\d{1,3}\.){3}\d{1,3}\b/g, label: '[IP]' },
  // US-style phone numbers (UK formats are not covered by this pattern)
  { pattern: /\b(\+?1?\s?)?(\(?\d{3}\)?[\s.-]?)?\d{3}[\s.-]?\d{4}\b/g, label: '[PHONE]' },
  // UUIDs are often internal user identifiers, but redacting them blindly breaks
  // legitimate references, so they are deliberately not matched here.
];

function patternScrub(text) {
  let scrubbed = text;
  const redactions = [];
  for (const { pattern, label } of PII_PATTERNS) {
    const matches = [...scrubbed.matchAll(pattern)];
    if (matches.length) {
      redactions.push({ label, count: matches.length });
      scrubbed = scrubbed.replace(pattern, label);
    }
  }
  return { scrubbed, redactions };
}

// --- LLM-based scrubbing for unstructured PII ---
// Runs after the pattern scrub, only when scrubPII() is called with useLlm: true.
const LLM_SCRUB_PROMPT = `You are a PII detection and redaction assistant.
Review the following text and replace any personally identifiable information with the appropriate placeholder.

Use these placeholders:
- [NAME] for personal names
- [ADDRESS] for street addresses
- [EMAIL] for email addresses (if any missed)
- [PHONE] for phone numbers (if any missed)
- [ACCOUNT] for account numbers, customer IDs, or internal identifiers tied to a person
- [DOB] for dates of birth

Return ONLY the scrubbed text with no explanation or commentary.
If no PII is found, return the original text unchanged.

Text:
{text}`;

async function llmScrub(text) {
  const response = await anthropic.messages.create({
    model: 'claude-haiku-4-5-20251001',
    max_tokens: Math.min(text.length * 2, 2048),
    messages: [{
      role: 'user',
      // Function replacer: a string replacement would interpret "$&", "$'" etc. in user text
      content: LLM_SCRUB_PROMPT.replace('{text}', () => text),
    }],
  });
  // A truncated response would silently drop content, so never store it
  if (response.stop_reason === 'max_tokens') {
    throw new Error('LLM scrub output was truncated; refusing to store partial content');
  }
  return response.content[0].text.trim();
}

// --- Combined scrubbing pipeline ---
export async function scrubPII(text, options = {}) {
  const {
    useLlm = false,     // set true for high-sensitivity content
    llmThreshold = 500, // only run the LLM scrub on content of at least this many characters
  } = options;

  // Step 1: fast pattern scrub
  const { scrubbed: patternResult, redactions } = patternScrub(text);

  // Step 2: optional LLM scrub for unstructured PII
  let finalResult = patternResult;
  if (useLlm && patternResult.length >= llmThreshold) {
    finalResult = await llmScrub(patternResult);
  }

  return {
    original: text, // raw input: never log or persist this field
    scrubbed: finalResult,
    wasModified: finalResult !== text,
    redactions,
  };
}
```

Secure Memory Writer

The secure writer wraps every write operation with the access control check, PII scrubbing, tenant enforcement, and audit logging in the correct order. All other parts of the system call this writer rather than writing to the database directly.
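The ordering guarantee is easiest to see, and to test, when each step is injected rather than imported. Below is a minimal sketch; createSecureWrite and its checkAccess, scrub, embed, store, and audit parameters are illustrative names, not the series' modules.

Unlike SecureMemoryWriter, the sketch awaits the success audit. That is the stricter choice when every write must have an audit record.

```javascript
// Hypothetical dependency-injected variant of the write path. Each step is a
// parameter, so tests can pass fakes and assert on order and on what each step saw.
function createSecureWrite({ checkAccess, scrub, embed, store, audit }) {
  return async function secureWrite({ agentId, content }) {
    // 1. Deny by default, and audit the denial before failing
    if (!(await checkAccess({ agentId }))) {
      await audit({ agentId, outcome: 'denied' });
      throw new Error(`Access denied for agent ${agentId}`);
    }
    // 2. Scrub first: nothing after this line sees the raw content
    const scrubbed = await scrub(content);
    // 3-4. Embed and store only the scrubbed text
    const embedding = await embed(scrubbed);
    await store({ agentId, content: scrubbed, embedding });
    // 5. Awaited here, so a failed audit write fails the whole call
    await audit({ agentId, outcome: 'success' });
  };
}
```

With fakes that record each call, a unit test can assert both the step order and that the embedding and storage steps only ever received redacted text.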

```javascript
// secure-memory-writer.js
import { withTenantClient } from './tenant-pool.js';
import { scrubPII } from './pii-scrubber.js';
import { checkAccess } from './access-control.js';
import { writeAuditLog } from './audit-log.js';

export class SecureMemoryWriter {
  constructor({ tenantId, agentId, agentType, userId }) {
    this.tenantId = tenantId;
    this.agentId = agentId;
    this.agentType = agentType;
    this.userId = userId;
  }

  async writeEpisodicEvent({
    sessionId,
    eventType,
    content,
    role = null,
    importance = 0.5,
    metadata = {},
    embeddingFn, // injected to avoid a circular dependency
    piiOptions = {},
  }) {
    // 1. Access control (deny by default)
    const allowed = await checkAccess({
      tenantId: this.tenantId,
      agentId: this.agentId,
      resource: 'episodic_memories',
      action: 'write',
      scopeId: this.userId,
    });

    if (!allowed) {
      await writeAuditLog({
        tenantId: this.tenantId,
        agentId: this.agentId,
        action: 'episodic_write_denied',
        resource: 'episodic_memories',
        scopeId: this.userId,
        outcome: 'denied',
      });
      throw new Error(`Access denied: agent ${this.agentId} cannot write episodic memory for ${this.userId}`);
    }

    // 2. PII scrubbing (inline in the write path, awaited before anything is stored)
    const { scrubbed, wasModified, redactions } = await scrubPII(content, piiOptions);
    if (wasModified) {
      // Logs pattern labels and counts only, never the redacted values
      console.warn(`[pii] Redacted PII in episodic event for ${this.userId}:`, redactions);
    }

    // 3. Generate the embedding from scrubbed content only
    const embedding = await embeddingFn(scrubbed);
    const embeddingLiteral = `[${embedding.join(',')}]`;

    // 4. Write with RLS-enforced tenant context
    await withTenantClient(this.tenantId, async (client) => {
      await client.query(
        `INSERT INTO episodic_memories
           (tenant_id, user_id, session_id, event_type, role, content,
            embedding, importance, metadata)
         VALUES ($1, $2, $3, $4, $5, $6, $7::vector, $8, $9)`,
        [
          this.tenantId, this.userId, sessionId, eventType,
          role, scrubbed, embeddingLiteral, importance,
          JSON.stringify({ ...metadata, pii_scrubbed: wasModified }),
        ]
      );
    });

    // 5. Audit log (fire-and-forget: a failure here is only logged).
    // Await this call instead if every write must be guaranteed an audit record.
    writeAuditLog({
      tenantId: this.tenantId,
      agentId: this.agentId,
      action: 'episodic_write',
      resource: 'episodic_memories',
      scopeId: this.userId,
      outcome: 'success',
      metadata: { eventType, piiScrubbed: wasModified },
    }).catch(err => console.error('[audit] Write failed:', err));
  }
}
```

Access Control

Access grants are stored in PostgreSQL, and each access decision is cached in Redis for low-latency checks. Each grant record specifies which agent can perform which action on which resource scope. When no live grant matches, access is denied.
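The matching rule can be stated precisely without a database. The sketch below mirrors, in memory, the wildcard, revocation, and expiry conditions of the grant query that checkAccess() runs. grantMatches and isAllowed are hypothetical names, and grants use camelCase versions of the table's columns.

```javascript
// A granted value matches if it is the wildcard or equals the requested value.
const fieldMatches = (granted, requested) => granted === '*' || granted === requested;

// Hypothetical in-memory mirror of the SQL predicate used for grant lookups.
function grantMatches(grant, req, now = new Date()) {
  return (
    grant.tenantId === req.tenantId && // tenant is never a wildcard
    fieldMatches(grant.agentId, req.agentId) &&
    fieldMatches(grant.resource, req.resource) &&
    fieldMatches(grant.action, req.action) &&
    fieldMatches(grant.scopeId, req.scopeId) &&
    grant.revokedAt == null &&
    (grant.expiresAt == null || grant.expiresAt > now)
  );
}

// Deny by default: access requires at least one matching, live grant.
function isAllowed(grants, req, now = new Date()) {
  return grants.some((grant) => grantMatches(grant, req, now));
}
```

A permission-matrix test can enumerate agents, resources, and actions against a fixture of grants, and assert isAllowed() for each combination.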

```sql
-- Access control grants table
CREATE TABLE memory_access_grants (
  id          UUID PRIMARY KEY DEFAULT gen_random_uuid(),
  tenant_id   TEXT NOT NULL,
  agent_id    TEXT NOT NULL,  -- specific agent, or '*' for any agent
  agent_type  TEXT,           -- optional label; not evaluated by checkAccess() below
  resource    TEXT NOT NULL,  -- 'episodic_memories' | 'semantic_memories' | 'procedural_memories' | 'workspace:*'
  action      TEXT NOT NULL,  -- 'read' | 'write' | 'delete' | '*'
  scope_id    TEXT NOT NULL,  -- specific user_id or workspace_id, or '*' for all
  granted_by  TEXT NOT NULL,
  granted_at  TIMESTAMPTZ NOT NULL DEFAULT now(),
  expires_at  TIMESTAMPTZ,    -- NULL = no expiry
  revoked_at  TIMESTAMPTZ
);

CREATE INDEX idx_grants_agent
  ON memory_access_grants (tenant_id, agent_id, resource, action)
  WHERE revoked_at IS NULL;
```

```javascript
// access-control.js
import { createClient } from 'redis';
import pg from 'pg';

const redis = createClient({ url: process.env.REDIS_URL });
await redis.connect(); // top-level await: this file must be loaded as an ES module

const pool = new pg.Pool({ connectionString: process.env.DATABASE_URL });

// Cache access decisions for 5 minutes. This is also the longest time an
// expired grant can still be honoured from cache.
const CACHE_TTL = 300;

// Cached decisions are keyed by a per-tenant version number. Bumping it on every
// grant or revoke invalidates all of that tenant's cached decisions at once,
// including decisions that were reached through '*' wildcards.
const versionKey = (tenantId) => `acl:version:${tenantId}`;

async function cacheKeyFor({ tenantId, agentId, resource, action, scopeId }) {
  const version = (await redis.get(versionKey(tenantId))) ?? '0';
  return `acl:${tenantId}:v${version}:${agentId}:${resource}:${action}:${scopeId}`;
}

export async function checkAccess({ tenantId, agentId, resource, action, scopeId }) {
  const cacheKey = await cacheKeyFor({ tenantId, agentId, resource, action, scopeId });

  // Check cache first
  const cached = await redis.get(cacheKey);
  if (cached !== null) return cached === '1';

  // Query grants; no matching live grant means deny
  const result = await pool.query(
    `SELECT id FROM memory_access_grants
     WHERE tenant_id = $1
       AND (agent_id = $2 OR agent_id = '*')
       AND (resource = $3 OR resource = '*')
       AND (action = $4 OR action = '*')
       AND (scope_id = $5 OR scope_id = '*')
       AND revoked_at IS NULL
       AND (expires_at IS NULL OR expires_at > now())
     LIMIT 1`,
    [tenantId, agentId, resource, action, scopeId]
  );

  const allowed = result.rowCount > 0;
  await redis.setEx(cacheKey, CACHE_TTL, allowed ? '1' : '0');
  return allowed;
}

export async function grantAccess({ tenantId, agentId, resource, action, scopeId = '*', grantedBy, expiresAt = null }) {
  await pool.query(
    `INSERT INTO memory_access_grants
       (tenant_id, agent_id, resource, action, scope_id, granted_by, expires_at)
     VALUES ($1, $2, $3, $4, $5, $6, $7)`,
    [tenantId, agentId, resource, action, scopeId, grantedBy, expiresAt]
  );
  // Invalidate every cached decision for this tenant
  await redis.incr(versionKey(tenantId));
}

export async function revokeAccess({ tenantId, agentId, resource, action, scopeId }) {
  // Note: this also revokes the agent's '*' scope grant for this resource/action,
  // which removes access to every scope, not only scopeId.
  await pool.query(
    `UPDATE memory_access_grants
     SET revoked_at = now()
     WHERE tenant_id = $1
       AND agent_id = $2
       AND resource = $3
       AND action = $4
       AND (scope_id = $5 OR scope_id = '*')
       AND revoked_at IS NULL`,
    [tenantId, agentId, resource, action, scopeId]
  );
  // Invalidate every cached decision for this tenant, so revocation takes effect now
  await redis.incr(versionKey(tenantId));
}
```

Tamper-Evident Audit Logging

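Audit logs are only useful if they cannot be silently modified. The tamper-evidence mechanism used here is a hash chain. Each audit record stores the hash of the previous record, and its own SHA-256 hash covers both its content and that previous hash. Modifying or deleting a historical record therefore breaks the chain, and the next verification run detects it.

Two limits apply:

- Anyone with write access to the table could recompute every later hash.
- Deleting the newest records leaves no successor to break.

For both reasons, the latest hash should be copied periodically to a store the application cannot rewrite. The application role should also be granted only INSERT and SELECT on the audit table.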
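The chaining and verification logic can be exercised without a database. canonicalize, hashRecord, appendRecord, and findViolations below are hypothetical names for an in-memory sketch.

The sketch sorts object keys before hashing. JSON.stringify output depends on key insertion order, and PostgreSQL's JSONB type does not preserve the order in which keys were written. As a result, metadata read back from a JSONB column can hash differently from the same metadata at write time.

```javascript
import { createHash } from 'node:crypto';

// Sort object keys recursively so logically equal records serialise identically.
function canonicalize(value) {
  if (Array.isArray(value)) return value.map(canonicalize);
  if (value !== null && typeof value === 'object') {
    return Object.fromEntries(
      Object.keys(value).sort().map((key) => [key, canonicalize(value[key])])
    );
  }
  return value;
}

// Each hash covers the record and its predecessor's hash.
function hashRecord(record, prevHash) {
  return createHash('sha256')
    .update(JSON.stringify(canonicalize({ record, prevHash })))
    .digest('hex');
}

// Hypothetical in-memory log: each append links the new entry to the last one.
function appendRecord(chain, record) {
  const prevHash = chain.length > 0 ? chain[chain.length - 1].recordHash : null;
  chain.push({ record, prevHash, recordHash: hashRecord(record, prevHash) });
  return chain;
}

// Returns the indices of entries whose link or own hash no longer checks out.
function findViolations(chain) {
  const violations = [];
  let prevHash = null;
  chain.forEach((entry, index) => {
    const linked = entry.prevHash === prevHash;
    const intact = hashRecord(entry.record, prevHash) === entry.recordHash;
    if (!linked || !intact) violations.push(index);
    prevHash = entry.recordHash;
  });
  return violations;
}
```

Editing a record in place flags exactly that entry. The following entry still links to the stored hash, so the report pinpoints where tampering happened.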

```javascript
// audit-log.js
import crypto from 'crypto';
import pg from 'pg';

const pool = new pg.Pool({ connectionString: process.env.DATABASE_URL });

// Audit log table (create once)
// CREATE TABLE memory_audit_log (
//   id          BIGSERIAL PRIMARY KEY,
//   tenant_id   TEXT NOT NULL,
//   agent_id    TEXT NOT NULL,
//   action      TEXT NOT NULL,
//   resource    TEXT NOT NULL,
//   scope_id    TEXT,
//   outcome     TEXT NOT NULL,      -- 'success' | 'denied' | 'error'
//   metadata    JSONB DEFAULT '{}',
//   created_at  TIMESTAMPTZ NOT NULL DEFAULT now(),
//   prev_hash   TEXT,               -- hash of previous record for chain integrity
//   record_hash TEXT NOT NULL       -- hash of this record's content
// );
// CREATE INDEX idx_audit_tenant ON memory_audit_log (tenant_id, created_at DESC);

// JSONB does not preserve key order, so keys are sorted before hashing;
// otherwise metadata read back from the database could hash differently.
function canonicalize(value) {
  if (Array.isArray(value)) return value.map(canonicalize);
  if (value !== null && typeof value === 'object') {
    return Object.fromEntries(
      Object.keys(value).sort().map((key) => [key, canonicalize(value[key])])
    );
  }
  return value;
}

function computeHash(record, prevHash) {
  const content = JSON.stringify({
    tenant_id: record.tenantId,
    agent_id: record.agentId,
    action: record.action,
    resource: record.resource,
    scope_id: record.scopeId,
    outcome: record.outcome,
    metadata: canonicalize(record.metadata),
    created_at: record.createdAt,
    prev_hash: prevHash,
  });
  return crypto.createHash('sha256').update(content).digest('hex');
}

export async function writeAuditLog({
  tenantId, agentId, action, resource,
  scopeId = null, outcome, metadata = {},
}) {
  // Hash exactly what will be stored (JSON round trip drops undefined values, etc.)
  const storedMetadata = JSON.parse(JSON.stringify(metadata ?? {}));
  const client = await pool.connect();
  try {
    await client.query('BEGIN');
    // Serialise appends per tenant: without this lock, concurrent writers read the
    // same prev_hash and fork the chain. The lock is released at COMMIT/ROLLBACK.
    await client.query('SELECT pg_advisory_xact_lock(hashtext($1))', [tenantId]);

    // Fetch previous record hash for chain
    const prevResult = await client.query(
      `SELECT record_hash FROM memory_audit_log
       WHERE tenant_id = $1
       ORDER BY id DESC LIMIT 1`,
      [tenantId]
    );
    const prevHash = prevResult.rows[0]?.record_hash ?? null;

    const createdAt = new Date().toISOString();
    const record = { tenantId, agentId, action, resource, scopeId, outcome, metadata: storedMetadata, createdAt };
    const recordHash = computeHash(record, prevHash);

    await client.query(
      `INSERT INTO memory_audit_log
         (tenant_id, agent_id, action, resource, scope_id, outcome, metadata, created_at, prev_hash, record_hash)
       VALUES ($1, $2, $3, $4, $5, $6, $7, $8, $9, $10)`,
      [tenantId, agentId, action, resource, scopeId, outcome,
       JSON.stringify(storedMetadata), createdAt, prevHash, recordHash]
    );
    await client.query('COMMIT');
  } catch (err) {
    await client.query('ROLLBACK');
    throw err;
  } finally {
    client.release();
  }
}

// Verify audit log chain integrity for a tenant
export async function verifyAuditChain(tenantId) {
  const records = await pool.query(
    `SELECT * FROM memory_audit_log
     WHERE tenant_id = $1
     ORDER BY id ASC`,
    [tenantId]
  );

  let prevHash = null;
  let violations = 0;
  for (const row of records.rows) {
    const expected = computeHash({
      tenantId: row.tenant_id,
      agentId: row.agent_id,
      action: row.action,
      resource: row.resource,
      scopeId: row.scope_id,
      outcome: row.outcome,
      metadata: row.metadata,
      createdAt: row.created_at.toISOString(),
    }, prevHash);

    // Valid only if the record links to its predecessor and its own hash matches
    if (row.prev_hash !== prevHash || expected !== row.record_hash) {
      console.error(`[audit] Chain violation at record id=${row.id}`);
      violations++;
    }
    prevHash = row.record_hash;
  }

  return { totalRecords: records.rowCount, violations };
}
```

```mermaid
flowchart LR
    subgraph SecurityLayers["Security Layers - Write Path"]
        A["Agent calls writeEpisodicEvent()"]
        B["Access control check\ncheckAccess() - Redis cache + PostgreSQL grants"]
        C{"Allowed?"}
        D["PII scrubbing\npattern + optional LLM"]
        E["Tenant context injection\nset_config for RLS"]
        F["Database write\nRLS enforces tenant isolation"]
        G["Audit log append\nhash chain record"]
        H["Access denied\nAudit log denial"]
        A --> B --> C
        C -- yes --> D --> E --> F --> G
        C -- no --> H
    end
    style SecurityLayers fill:#1e3a5f,color:#fff
```

GDPR Right to Erasure

In GDPR-regulated environments, users have the right to request deletion of all data associated with them. The memory system must support complete erasure across all three stores.
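Because the stores are independent, a failure in one must not be mistaken for complete erasure. One way to make that explicit is to run each store's deletion through Promise.allSettled and report which stores failed.

Below is a minimal sketch with hypothetical store adapters. Each adapter is an object with a name and an async erase(tenantId, userId) that resolves to a deleted count; eraseAcrossStores is an illustrative name.

```javascript
// Hypothetical store adapters: { name, erase(tenantId, userId) } where erase
// resolves to the number of deleted items. Every store runs even if one fails.
async function eraseAcrossStores(stores, tenantId, userId) {
  const settled = await Promise.allSettled(
    // Promise.resolve().then(...) also captures adapters that throw synchronously
    stores.map((store) => Promise.resolve().then(() => store.erase(tenantId, userId)))
  );

  const deleted = {};
  const failed = [];
  settled.forEach((outcome, i) => {
    const name = stores[i].name;
    if (outcome.status === 'fulfilled') {
      deleted[name] = outcome.value;
    } else {
      const reason = outcome.reason instanceof Error ? outcome.reason.message : String(outcome.reason);
      failed.push({ store: name, reason });
    }
  });

  // Erasure is complete only if every store confirmed its deletion
  return { complete: failed.length === 0, deleted, failed };
}
```

Running the stores in parallel is a choice, not a requirement. The complete flag should decide whether the request is reported as fulfilled and which outcome is written to the audit log.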

```javascript
// right-to-erasure.js
import { withTenantClient } from './tenant-pool.js';
import { writeAuditLog } from './audit-log.js';

// Escape LIKE wildcards so a user ID containing % or _ cannot widen the match
const escapeLike = (value) => value.replace(/[\\%_]/g, '\\$&');

export async function eraseUserMemory({ tenantId, userId, requestedBy }) {
  const results = {};

  await withTenantClient(tenantId, async (client) => {
    // Delete all episodic events
    const ep = await client.query(
      `DELETE FROM episodic_memories
       WHERE tenant_id = $1 AND user_id = $2
       RETURNING id`,
      [tenantId, userId]
    );
    results.episodicDeleted = ep.rowCount;

    // Delete shared events scoped to this user
    const sh = await client.query(
      `DELETE FROM shared_episodic_memories
       WHERE tenant_id = $1 AND scope_id = $2 AND scope_type = 'user'
       RETURNING id`,
      [tenantId, userId]
    );
    results.sharedDeleted = sh.rowCount;

    // Delete procedural memories of agents whose IDs embed this user ID.
    // This relies on that naming convention; a substring match can also hit other
    // users' agents (e.g. 'user-1' inside 'user-12'), so a dedicated user_id
    // column is safer where the schema allows it.
    const pr = await client.query(
      `DELETE FROM procedural_memories
       WHERE tenant_id = $1 AND agent_id LIKE $2
       RETURNING id`,
      [tenantId, `%${escapeLike(userId)}%`]
    );
    results.proceduralDeleted = pr.rowCount;
  });

  // Semantic memory erasure (Qdrant) via the Python semantic memory API
  const qdrantRes = await fetch(`${process.env.SEMANTIC_MEMORY_API_URL}/erase`, {
    method: 'DELETE',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ tenant_id: tenantId, user_id: userId }),
  });

  if (!qdrantRes.ok) {
    // PostgreSQL data is already gone: record the partial erasure before failing
    await writeAuditLog({
      tenantId,
      agentId: 'system',
      action: 'right_to_erasure',
      resource: 'all_memory_stores',
      scopeId: userId,
      outcome: 'error',
      metadata: { requestedBy, ...results, semanticStatus: qdrantRes.status },
    });
    throw new Error(`Semantic memory erasure failed: HTTP ${qdrantRes.status}`);
  }
  results.semanticDeleted = (await qdrantRes.json()).deleted;

  // Audit the completed erasure
  await writeAuditLog({
    tenantId,
    agentId: 'system',
    action: 'right_to_erasure',
    resource: 'all_memory_stores',
    scopeId: userId,
    outcome: 'success',
    metadata: { requestedBy, ...results },
  });

  return results;
}
```

Security Checklist for Production Deployment

| Control | Implementation | Verified by |
|---|---|---|
| Tenant isolation | PostgreSQL RLS + set_config per request | Integration test with two-tenant fixture |
| PII scrubbing | Pattern + optional LLM scrub on write path | Unit tests with PII fixtures, audit log review |
| Access control | Grant table + Redis cache + deny-by-default | Permission matrix test per agent type |
| Audit logging | Hash chain append on every read and write | Chain verification job runs nightly |
| Erasure support | eraseUserMemory() across all stores | End-to-end erasure test with verification query |
| Secrets management | DB credentials and API keys via env vars or Azure Key Vault | No secrets in source control, rotation tested |
| Transport security | TLS on all connections: DB, Redis, Qdrant, APIs | Certificate validation enabled, no self-signed certificates in production |

What Is Next

Part 8 is the final part: the complete production reference architecture that combines all seven layers into a single deployable system. It covers the full infrastructure diagram, deployment configuration, monitoring setup, cost model, and the decision framework for when to use each memory type. By the end you will have everything needed to take this system from development into a production enterprise environment.

References

- PostgreSQL – “Row Security Policies Documentation” (https://www.postgresql.org/docs/current/ddl-rowsecurity.html)

- GDPR.eu – “Right to Erasure (Right to be Forgotten)” (https://gdpr.eu/right-to-be-forgotten/)

- Anthropic – “Claude Models Overview” (https://docs.anthropic.com/en/docs/about-claude/models/overview)

- Redis – “Strings and SET NX Documentation” (https://redis.io/docs/latest/develop/data-types/strings/)

- Microsoft – “Azure Key Vault Overview” (https://learn.microsoft.com/en-us/azure/key-vault/general/overview)