Before you can use Tokio well, you need to understand what async Rust is doing underneath. Most tutorials hand you a #[tokio::main] macro and a few .await calls and move on. That gets you started, but it leaves you without the mental model you need when things go wrong – when tasks stall, when performance is not what you expect, or when the compiler rejects code you are sure should work.

This is Part 1 of the Async Rust with Tokio series. We are starting from first principles: what a Future is, how the poll model works, why Rust has no built-in async runtime, and what a runtime is actually doing when it drives your code. The rest of the series builds on this foundation.

The Problem Async Solves

A synchronous web server handles one request at a time per thread. If a request is waiting on a database query, the thread blocks and does no useful work until the result arrives. To handle more requests concurrently, you spawn more threads, but threads are expensive: each one reserves its own stack (typically 2-8 MB of virtual memory), needs a kernel scheduling entity, and adds context-switching overhead.

Async programming solves this by allowing a single thread to manage many in-flight operations. Instead of blocking while waiting for I/O, a task yields control back to a scheduler. The scheduler runs other tasks. When the I/O completes, the original task resumes. One thread, many concurrent operations, no wasted CPU cycles on waiting.

Rust achieves this using futures and the async/await syntax, which let a task yield control while it waits for an external resource and resume once that resource is ready. The key distinction from languages like Node.js or Python is that a Rust async fn compiles to a state machine: there is no garbage collector, and creating or awaiting a future does not by itself allocate on the heap. (Spawning a future onto a runtime such as Tokio typically does allocate the task on the heap.)

The Future Trait

Everything in async Rust is built on one trait:

```rust
// Simplified from the standard library (std::future::Future and std::task::Poll).
use std::pin::Pin;
use std::task::Context;

pub trait Future {
    type Output;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output>;
}

pub enum Poll<T> {
    Ready(T),
    Pending,
}
```

A Future represents a value that will be available at some point. When you call poll, you are asking: “are you done yet?” The future responds with either Poll::Ready(value) if it has finished, or Poll::Pending if it needs more time.

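This is the entire foundation. Every async fn you write returns something that implements Future. Every .await you write is syntactic sugar for polling the inner future: if it returns Ready, execution continues with the value; if it returns Pending, the enclosing future returns Pending as well, and the runtime polls the task again only after a waker signals that progress is possible. It is not a busy loop.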
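A minimal sketch of the poll contract using only the standard library: a hypothetical `Countdown` future returns `Pending` a fixed number of times and then `Ready`, and a hypothetical `poll_by_hand` helper drives it with a waker that does nothing. The tight loop is acceptable here only because `Countdown` asks to be polled again immediately; a real executor would wait for the waker instead.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// A waker that does nothing when woken; we poll by hand, so we don't need it to.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

// Hypothetical toy future: returns Pending `remaining` times, then Ready.
struct Countdown {
    remaining: u32,
}

impl Future for Countdown {
    type Output = &'static str;

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output> {
        if self.remaining == 0 {
            Poll::Ready("liftoff")
        } else {
            self.remaining -= 1;
            // Honor the contract: before returning Pending, make sure a wake-up will happen.
            cx.waker().wake_by_ref();
            Poll::Pending
        }
    }
}

/// Polls `fut` by hand until it is ready; returns the output and the number of polls.
fn poll_by_hand<F: Future + Unpin>(mut fut: F) -> (F::Output, u32) {
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let mut polls = 0;
    loop {
        polls += 1;
        if let Poll::Ready(out) = Pin::new(&mut fut).poll(&mut cx) {
            return (out, polls);
        }
    }
}
```

`poll_by_hand(Countdown { remaining: 3 })` returns `("liftoff", 4)`: three `Pending` results, then `Ready`.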

Two things about this design are worth noting:

- Futures are lazy. Creating a future does nothing. No work happens until something calls poll. This is different from JavaScript promises, which start executing immediately on creation.
- Futures are poll-driven, not callback-driven. You do not hand the operation a continuation to run when it finishes. Instead, the runtime calls poll when the future might be able to make progress, and the waker is how it learns when that is.
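Laziness is easy to observe directly. In this standard-library-only sketch (`laziness_demo` and `NoopWaker` are illustrative names), an async block sets a flag when its body runs. The flag is still false after the future is created and only becomes true once the future is polled.

```rust
use std::cell::Cell;
use std::future::Future;
use std::pin::pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// A waker that does nothing; this future never returns Pending anyway.
struct NoopWaker;

impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

/// Returns (body_ran_before_poll, body_ran_after_poll).
fn laziness_demo() -> (bool, bool) {
    let ran = Cell::new(false);

    // Creating the future only captures `ran`; the body has not executed.
    let fut = async {
        ran.set(true);
        42
    };
    let before = ran.get();

    // Pin the future on the stack and poll it once.
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let result = fut.as_mut().poll(&mut cx);
    assert_eq!(result, Poll::Ready(42));

    (before, ran.get())
}
```

`laziness_demo()` returns `(false, true)`.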

The Waker: How a Future Gets Polled Again

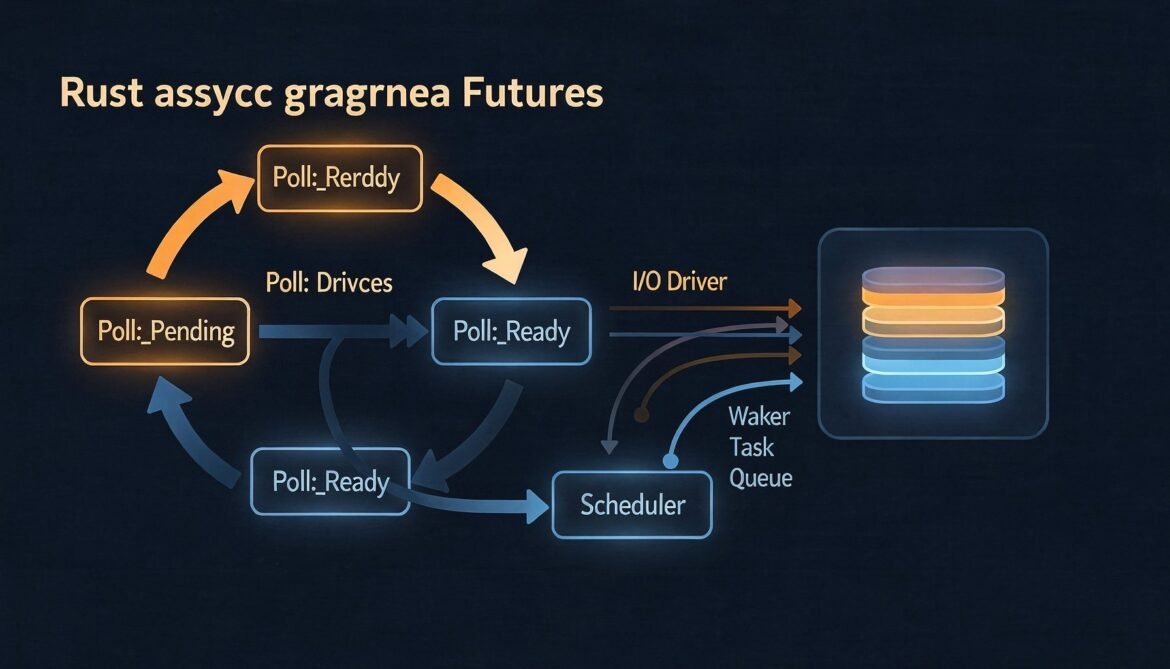

When a future returns Pending, the runtime needs to know when to poll it again. Polling too eagerly wastes CPU. Never polling again means the task stalls forever. The Waker in the Context parameter solves this.

When a future returns Pending, it is responsible for making sure that waker.wake() will be called once it can make progress. The runtime provides the waker through the Context. The future either keeps it itself or, more commonly, hands a clone to whatever will produce the event. When an I/O event arrives (say, data on a socket), the I/O driver calls wake(), which tells the runtime to schedule the task for polling again.

```mermaid
sequenceDiagram
    participant Runtime
    participant Future
    participant IODriver as I/O Driver (epoll/kqueue)
    Runtime->>Future: poll(cx) with Waker
    Future->>IODriver: register Waker for the socket
    Future-->>Runtime: Poll::Pending
    Runtime->>Runtime: run other tasks
    Note over IODriver: data ready on socket
    IODriver->>Runtime: waker.wake()
    Runtime->>Future: poll(cx) again
    Future-->>Runtime: Poll::Ready(data)
```

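This waker mechanism is what makes async Rust efficient. The runtime never busy-waits: when no task is ready, it blocks in the operating system's event notification call until an event or timer fires, and it relies on wakers to know which tasks to resume.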
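The "store the waker, wake it later" half of the contract can be sketched with a hypothetical one-shot slot shared between a producer and a future. This is not how `tokio::sync::oneshot` is implemented; it only shows the pattern. `Recv::poll` saves a clone of the current waker when no value is present, and `send` stores the value and then calls `wake()`, standing in for the I/O driver. `Shared`, `Recv`, `send`, `channel`, and `FlagWaker` are all illustrative names.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Wake, Waker};

// State shared between the producer and the future.
struct Shared<T> {
    value: Option<T>,
    waker: Option<Waker>,
}

// Hypothetical receiving half: resolves once the producer has sent a value.
struct Recv<T> {
    shared: Arc<Mutex<Shared<T>>>,
}

impl<T> Future for Recv<T> {
    type Output = T;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<T> {
        let mut state = self.shared.lock().unwrap();
        if let Some(v) = state.value.take() {
            return Poll::Ready(v);
        }
        // Not ready: store (or replace) the waker so the producer can wake us.
        state.waker = Some(cx.waker().clone());
        Poll::Pending
    }
}

// Producer side: store the value, then wake the stored waker (if any).
fn send<T>(shared: &Arc<Mutex<Shared<T>>>, value: T) {
    let waker = {
        let mut state = shared.lock().unwrap();
        state.value = Some(value);
        state.waker.take()
    };
    // Wake outside the lock so the woken task can lock `shared` immediately.
    if let Some(w) = waker {
        w.wake();
    }
}

fn channel<T>() -> (Arc<Mutex<Shared<T>>>, Recv<T>) {
    let shared = Arc::new(Mutex::new(Shared { value: None, waker: None }));
    (shared.clone(), Recv { shared })
}

// A test waker that only records whether it was woken.
struct FlagWaker(AtomicBool);

impl Wake for FlagWaker {
    fn wake(self: Arc<Self>) {
        self.0.store(true, Ordering::SeqCst);
    }
}
```

Polling `Recv` before `send` returns `Pending` and saves the waker; `send(&tx, 7)` fires it, and the next poll returns `Ready(7)`.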

Writing a Future by Hand

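Before looking at async/await sugar, here is a manually implemented future. The compiler generates the state machines for async fn, but leaf futures like this one, which wait on something outside the program such as a timer or a socket, are written by hand, usually inside a runtime or library. Writing one makes the contract explicit: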

```rust
use std::future::Future;
use std::pin::Pin;
use std::task::{Context, Poll};
use std::time::{Duration, Instant};

struct Delay {
    deadline: Instant,
}

impl Delay {
    fn new(duration: Duration) -> Self {
        Delay {
            deadline: Instant::now() + duration,
        }
    }
}

impl Future for Delay {
    type Output = ();

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if Instant::now() >= self.deadline {
            Poll::Ready(())
        } else {
            // Schedule a wake-up when the deadline arrives.
            // In production this would use a timer wheel via the runtime.
            // For illustration, we clone the waker and spawn a thread
            // (a new thread on every Pending poll, which is wasteful).
            let waker = cx.waker().clone();
            let deadline = self.deadline;
            std::thread::spawn(move || {
                let now = Instant::now();
                if now < deadline {
                    std::thread::sleep(deadline - now);
                }
                waker.wake();
            });
            Poll::Pending
        }
    }
}

// Usage (requires a runtime to actually drive it):
// async fn example() {
//     Delay::new(Duration::from_millis(100)).await;
//     println!("100ms have passed");
// }
```

This illustrates the contract: when you return Pending, you must arrange for the waker to be called. If you forget, your future stalls forever.
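The commented-out usage needs something to poll the future. As a sketch of the smallest possible "runtime", here is a hypothetical `block_on` built only on the standard library: it polls once, and on `Pending` parks the current thread until the waker (a hypothetical `ThreadWaker`) unparks it. It has no reactor and runs one future at a time; Tokio's executor is far more involved.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};
use std::time::{Duration, Instant};

// The Delay future from above, repeated so this block compiles on its own.
struct Delay {
    deadline: Instant,
}

impl Delay {
    fn new(duration: Duration) -> Self {
        Delay { deadline: Instant::now() + duration }
    }
}

impl Future for Delay {
    type Output = ();

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if Instant::now() >= self.deadline {
            return Poll::Ready(());
        }
        let waker = cx.waker().clone();
        let deadline = self.deadline;
        thread::spawn(move || {
            let now = Instant::now();
            if now < deadline {
                thread::sleep(deadline - now);
            }
            waker.wake();
        });
        Poll::Pending
    }
}

// Waking unparks the thread that is blocked inside `block_on`.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// Hypothetical minimal executor for a single future: poll it, and whenever
/// it returns Pending, park this thread until its waker calls unpark().
fn block_on<F: Future>(fut: F) -> F::Output {
    // Pin the future on the stack; it is never moved again.
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(out) => return out,
            // park() may also return spuriously; the loop just polls again.
            Poll::Pending => thread::park(),
        }
    }
}
```

With it, `block_on(async { Delay::new(Duration::from_millis(100)).await; println!("100ms have passed"); })` runs the example from the comment without Tokio.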

async fn and .await: What They Actually Do

The async fn keyword transforms a function into one that returns an anonymous type implementing Future. The .await keyword polls that inner future repeatedly until it returns Ready, yielding control back to the runtime each time it returns Pending.

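```rust
// What you write (http_get and User are placeholders for this example):
async fn fetch_user(id: u64) -> User {
    let raw = http_get(&format!("/users/{}", id)).await;
    User::parse(raw)
}

// What the compiler conceptually produces: an ordinary function that
// returns an anonymous state-machine type implementing Future.
fn fetch_user(id: u64) -> impl Future<Output = User> {
    async move {
        let raw = http_get(&format!("/users/{}", id)).await;
        User::parse(raw)
    }
}
```

Each .await is a suspension point. Between two .await calls, your code runs without yielding, and an .await on a future that is already Ready does not yield either. This is crucial for understanding performance: while that code runs, it occupies the worker thread, and no other task scheduled on that thread can make progress. Long stretches of CPU work between awaits cause runtime starvation.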
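To make "anonymous type implementing Future" concrete, here is a hand-written state machine for a hypothetical `async fn double` with a single `.await`. It is a sketch, not actual compiler output: the real generated type can hold references across await points, which is why `poll` takes `Pin<&mut Self>`; this version sidesteps pinning by requiring the inner future to be `Unpin`.

```rust
use std::future::Future;
use std::pin::Pin;
use std::task::{Context, Poll};

// Conceptually equivalent to:
//     async fn double<F: Future<Output = u32>>(inner: F) -> u32 {
//         inner.await * 2 // the single suspension point
//     }
enum Double<F> {
    // Suspended at the `.await`, holding the inner future.
    Awaiting(F),
    // Finished; polling again is a bug.
    Done,
}

impl<F: Future<Output = u32> + Unpin> Future for Double<F> {
    type Output = u32;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<u32> {
        let this = self.get_mut();
        let x = match &mut *this {
            Double::Awaiting(inner) => match Pin::new(inner).poll(cx) {
                // Inner future finished: fall through to the code after `.await`.
                Poll::Ready(x) => x,
                // Inner future not ready: propagate Pending up to our caller.
                Poll::Pending => return Poll::Pending,
            },
            Double::Done => panic!("`Double` polled after completion"),
        };
        // Transition to the final state, dropping the inner future.
        *this = Double::Done;
        Poll::Ready(x * 2)
    }
}
```

Polling `Double::Awaiting(std::future::ready(21))` once returns `Poll::Ready(42)`; wrapping a future that is still pending makes `Double` return `Poll::Pending` as well, which is how suspension propagates up through nested `.await`s.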

Why Rust Has No Built-In Runtime

Go has goroutines built in. Python has asyncio. Node.js has its event loop. Rust has none of these in the standard library. This is a deliberate design decision.

Rust had a green-threading runtime (libgreen) before version 1.0, but it was removed from the standard library to preserve zero-cost abstractions and C-level performance characteristics. The overhead and complexity of stack management for green threads conflicted with Rust’s design goals.

By keeping the runtime out of the standard library, Rust allows different runtimes optimized for different workloads:

- Tokio: multi-threaded, work-stealing, optimized for network services

- smol: lightweight, minimal, good for constrained environments

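- async-executor: the standalone executor behind smol; its single-threaded LocalExecutor is a simple, predictable choice where threads are unavailable, such as some WASM targets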

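The Future trait is in the standard library. The execution of futures is not. Any executor can poll any Future, but leaf futures that depend on a particular runtime's reactor only work while that runtime is running: Tokio's TcpStream and tokio::time::sleep, for example, need a Tokio runtime.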

What a Runtime Actually Does

A Rust async runtime has two core components:

```mermaid
flowchart TD
    subgraph Runtime
        E[Executor / Scheduler\nMaintains queue of ready tasks\nPolls futures when woken]
        R[Reactor / I/O Driver\nepoll on Linux · kqueue on macOS\nIOCP on Windows\nWatches OS events]
    end
    T[Your Tasks / Futures]
    T -->|returns Poll::Pending\nregisters waker| R
    E -->|poll| T
    R -->|event fires\nwaker.wake called| E
    E -->|schedules & polls\nwhen woken| T
    style E fill:#2c3e50,color:#ecf0f1
    style R fill:#16a085,color:#fff
    style T fill:#8e44ad,color:#fff
```

- Executor: maintains a queue of tasks that are ready to be polled. When a waker fires, the task goes back on the queue. The executor picks tasks off the queue and calls poll.
- Reactor: wraps the OS event notification system (epoll on Linux, kqueue on macOS, IOCP on Windows). It watches file descriptors and timers, and calls wakers when events arrive.

Together they form the event loop. Tokio is a highly optimized implementation of this pattern with a work-stealing multi-threaded scheduler on top. We go deep on Tokio’s architecture in Part 2.
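The executor half of that picture can be sketched in a few dozen lines. The following hypothetical `MiniExecutor` is not Tokio's design: a standard-library channel serves as the ready queue, each task's waker simply sends the task back into that channel, and `run` polls tasks in FIFO order until none remain. There is no reactor; wake-ups come from whatever holds a waker, such as the timer thread in the `Delay` example.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::mpsc::{channel, Receiver, Sender};
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Wake, Waker};

type BoxFuture = Pin<Box<dyn Future<Output = ()> + Send>>;

// A spawned task: its future (None once finished) plus a handle to the ready queue.
struct Task {
    future: Mutex<Option<BoxFuture>>,
    queue: Sender<Arc<Task>>,
}

// Waking a task just pushes it back onto the ready queue.
impl Wake for Task {
    fn wake(self: Arc<Self>) {
        // Ignore send errors: the executor may already have shut down.
        let _ = self.queue.send(self.clone());
    }
}

/// Hypothetical minimal executor: a FIFO of ready tasks, polled one at a time.
struct MiniExecutor {
    ready: Receiver<Arc<Task>>,
    queue: Sender<Arc<Task>>,
}

impl MiniExecutor {
    fn new() -> Self {
        let (queue, ready) = channel();
        MiniExecutor { ready, queue }
    }

    fn spawn(&self, fut: impl Future<Output = ()> + Send + 'static) {
        let task = Arc::new(Task {
            future: Mutex::new(Some(Box::pin(fut))),
            queue: self.queue.clone(),
        });
        // New tasks start out ready.
        self.queue.send(task).unwrap();
    }

    /// Runs until every spawned task has completed.
    fn run(self) {
        // Drop our own sender so `recv` fails once no task can be re-queued.
        drop(self.queue);
        while let Ok(task) = self.ready.recv() {
            let mut slot = task.future.lock().unwrap();
            if let Some(mut fut) = slot.take() {
                let waker = Waker::from(task.clone());
                let mut cx = Context::from_waker(&waker);
                if fut.as_mut().poll(&mut cx).is_pending() {
                    // Not done: keep the future until its waker re-queues the task.
                    *slot = Some(fut);
                }
            }
        }
    }
}
```

For example, two spawned tasks that each log a line, await a future that returns Pending once (after waking itself), and log again interleave as "0 start, 1 start, 0 end, 1 end": a Pending poll sends control back to the queue instead of blocking the thread.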

Futures Are Lazy: A Real Consequence

The laziness of futures has a practical consequence that trips up developers coming from JavaScript:

```javascript
// In JavaScript, both promises start executing immediately
const p1 = fetch("/api/users"); // starts now
const p2 = fetch("/api/orders"); // starts now
const [users, orders] = await Promise.all([p1, p2]); // wait for both
```

```rust
// In Rust, futures do nothing until polled
// (fetch_users and fetch_orders stand for any two independent async fns)
let f1 = fetch_users(); // nothing happens
let f2 = fetch_orders(); // nothing happens

// This runs them SEQUENTIALLY: f1 fully completes before f2 starts
let users = f1.await;
let orders = f2.await;

// To run them concurrently, use tokio::join!, which polls both futures
// from the current task until both have completed
let (users, orders) = tokio::join!(fetch_users(), fetch_orders());
```

Sequential awaiting is one of the most common async Rust performance mistakes. Each .await suspends until that future completes before moving on to the next one. For independent operations, use tokio::join! to run them concurrently within the current task, or tokio::spawn to run them as separate tasks, which on the multi-threaded runtime can also execute in parallel.
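To see the difference between sequential awaiting and joining without pulling in Tokio, here is a standard-library-only sketch. It uses a hypothetical lazy timer `delay` whose clock starts on the first poll (mirroring how an async fn does nothing until polled), a minimal thread-parking `block_on`, and a hypothetical `join2` that polls every unfinished future on each wake-up. `tokio::join!` relies on the same principle of polling several futures from one task, though its implementation differs.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};
use std::time::{Duration, Instant};

// Hypothetical lazy timer: the clock starts on the first poll, not on creation.
struct Delay {
    duration: Duration,
    deadline: Option<Instant>,
}

fn delay(ms: u64) -> Delay {
    Delay { duration: Duration::from_millis(ms), deadline: None }
}

impl Future for Delay {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        let duration = self.duration;
        let deadline = *self.deadline.get_or_insert_with(|| Instant::now() + duration);
        let now = Instant::now();
        if now >= deadline {
            return Poll::Ready(());
        }
        // Illustration only: one sleeping thread per Pending poll.
        let waker = cx.waker().clone();
        thread::spawn(move || {
            thread::sleep(deadline - now);
            waker.wake();
        });
        Poll::Pending
    }
}

// Minimal executor: park the current thread until a waker unparks it.
struct ThreadWaker(Thread);
impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(out) => return out,
            Poll::Pending => thread::park(), // spurious wake-ups just re-poll
        }
    }
}

// Hypothetical two-way join: polls every unfinished future on each wake-up.
struct Join2<A: Future, B: Future> {
    a: A,
    b: B,
    a_out: Option<A::Output>,
    b_out: Option<B::Output>,
}

fn join2<A: Future, B: Future>(a: A, b: B) -> Join2<A, B> {
    Join2 { a, b, a_out: None, b_out: None }
}

impl<A, B> Future for Join2<A, B>
where
    A: Future + Unpin,
    B: Future + Unpin,
    A::Output: Unpin,
    B::Output: Unpin,
{
    type Output = (A::Output, B::Output);
    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output> {
        let this = self.get_mut();
        // Both futures get the same waker, so either one can wake the task.
        if this.a_out.is_none() {
            if let Poll::Ready(v) = Pin::new(&mut this.a).poll(cx) {
                this.a_out = Some(v);
            }
        }
        if this.b_out.is_none() {
            if let Poll::Ready(v) = Pin::new(&mut this.b).poll(cx) {
                this.b_out = Some(v);
            }
        }
        if this.a_out.is_some() && this.b_out.is_some() {
            Poll::Ready((this.a_out.take().unwrap(), this.b_out.take().unwrap()))
        } else {
            Poll::Pending
        }
    }
}
```

Creating two 100 ms delays up front and awaiting them one after the other takes at least 200 ms, because the second timer only starts when it is first polled. `block_on(join2(delay(100), delay(100)))` finishes after roughly 100 ms, because both timers are started on the first poll.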

The async/.await Mental Model

Here is the complete mental model to keep in mind for the rest of this series:

- async fn returns a Future. The Future does nothing until polled.
- .await polls the inner future. If it returns Pending, the current task suspends and yields to the runtime.
- The future stores the waker or registers it with the reactor. When its resource is ready, whoever holds the waker calls wake().
- The runtime re-polls the task. It resumes from where it left off at the .await point.
- Between two .await calls, code runs synchronously. Long synchronous sections starve other tasks.
- For concurrent work, use tokio::join!, tokio::select!, or tokio::spawn. Sequential awaiting is not concurrency.

What Comes Next

With the Future trait and poll model clear, Part 2 goes inside Tokio itself. We look at the work-stealing scheduler, how Tokio maps tasks to OS threads, the difference between core threads and blocking threads, and how the I/O reactor integrates with epoll, kqueue, and IOCP. Understanding this architecture is what separates developers who use Tokio from developers who can reason about its behavior under load.
