If you have written async Rust for more than a few days, you have probably hit something confusing. A future that does not run. A task that hangs. A compiler error about Send bounds that appears in code that looks perfectly fine. These problems are not random. They all trace back to how async Rust actually works at the model level – and most resources skip that explanation in favor of getting you to write code quickly.

This is Part 1 of the Async Rust with Tokio series. We are starting at the bottom: futures, the poll model, the waker mechanism, and why Rust deliberately has no built-in async runtime. Get this foundation right and everything in the remaining nine parts will make sense.

The Core Problem Async Solves

A typical backend service spends most of its time waiting. Waiting for a database query to return. Waiting for a network response. Waiting for a file read to complete. With synchronous, blocking I/O, a thread blocks during that wait – doing nothing, consuming memory, unable to serve another request.

The naive solution is more threads. But threads are not free. Each one reserves a stack (typically 2-8 MB by default, depending on the platform and how the thread is created), plus scheduler overhead and context-switching cost. A server handling 10,000 concurrent connections with one thread each spends significant resources just managing threads rather than doing work.

Async programming solves this by separating the concept of a task from the concept of a thread. A task can suspend itself when it would otherwise block, yield the thread to another task, and resume later when its data is ready. Many tasks share a small, fixed pool of threads. The result: high concurrency without high thread count.

What a Future Is

In Rust, the async model is built on a single trait:

```rust
pub trait Future {
    type Output;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output>;
}

pub enum Poll<T> {
    Ready(T),
    Pending,
}
```

A Future is a value that represents a computation that may not be complete yet. When you call poll, one of two things happens: the computation finishes and you get Poll::Ready(value), or it is not done yet and you get Poll::Pending.

The critical insight: futures in Rust are lazy. Creating a future does nothing. The computation only starts when something calls poll. This is fundamentally different from JavaScript promises, which start executing immediately on creation.

```rust
// This does NOTHING - it just creates a future value; no timer is registered yet
let future = tokio::time::sleep(std::time::Duration::from_secs(1));

// Awaiting it (inside an async fn or block) is what polls the future
future.await; // now the timer is registered and the task suspends until it fires
```

Laziness gives you control. You can create futures, store them, combine them, cancel them before they start – all without any side effects happening yet.
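Laziness is easy to observe without any runtime. The following is a minimal sketch using only the standard library: it polls an async block by hand with a do-nothing waker. The names `NoopWake` and `laziness_demo` are made up for this illustration.

```rust
use std::cell::Cell;
use std::future::Future;
use std::pin::pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// A waker that does nothing; enough to call `poll` by hand in a demo.
struct NoopWake;

impl Wake for NoopWake {
    fn wake(self: Arc<Self>) {}
}

/// Returns (ran_after_creation, ran_after_first_poll).
fn laziness_demo() -> (bool, bool) {
    let ran = Cell::new(false);

    // Creating the future runs none of its body.
    let fut = async {
        ran.set(true);
    };
    let ran_after_creation = ran.get();

    // Polling is what actually executes the body.
    let waker = Waker::from(Arc::new(NoopWake));
    let mut cx = Context::from_waker(&waker);
    let mut fut = pin!(fut);
    let result = fut.as_mut().poll(&mut cx);
    assert!(matches!(result, Poll::Ready(())));

    (ran_after_creation, ran.get())
}
```

Calling `laziness_demo()` yields `(false, true)`: the flag is untouched after the future is created and set only once the future has been polled.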

The Poll Model in Detail

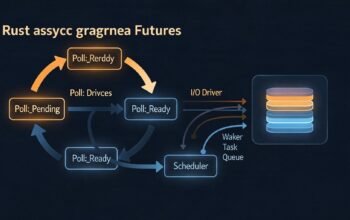

The runtime calls poll on a future to drive it forward. If the future returns Pending, it must arrange to be polled again when it makes progress. This is done through the Waker mechanism in the Context argument:

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Waker};
use std::thread;
use std::time::{Duration, Instant};

// A future that completes after a delay - implemented manually to show the mechanism
struct DelayFuture {
    when: Instant,
    // Shared slot where poll leaves the waker for the background thread
    waker: Arc<Mutex<Option<Waker>>>,
}

impl DelayFuture {
    fn new(duration: Duration) -> Self {
        let when = Instant::now() + duration;
        let waker_slot: Arc<Mutex<Option<Waker>>> = Arc::new(Mutex::new(None));

        // Background thread that wakes the task when ready
        let waker_clone = Arc::clone(&waker_slot);
        thread::spawn(move || {
            let now = Instant::now();
            if now < when {
                thread::sleep(when - now);
            }
            // Wake the task - tells the runtime to poll again
            let mut lock = waker_clone.lock().unwrap();
            if let Some(waker) = lock.take() {
                waker.wake();
            }
        });

        DelayFuture { when, waker: waker_slot }
    }
}

impl Future for DelayFuture {
    type Output = ();

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        // Take the lock before checking the clock, so the background thread cannot
        // fire between our check and our store and leave us without a wake-up
        let mut slot = self.waker.lock().unwrap();
        if Instant::now() >= self.when {
            Poll::Ready(())
        } else {
            // Store the waker so the background thread can wake us
            *slot = Some(cx.waker().clone());
            Poll::Pending
        }
    }
}
```

This is how all async I/O works at the lowest level. The OS notifies the runtime when a socket is readable, a timer fires, or a file operation completes. The runtime uses that notification to wake the specific task waiting for that event. The task gets polled again and this time returns Ready.
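To see the full round trip (poll, Pending, wake, poll again) without a runtime, here is a minimal sketch built on the standard library only. A spawned thread stands in for the OS delivering a notification, and the waker unparks the thread that is driving the future. All names (`ThreadWaker`, `Shared`, `FlagFuture`, `block_on`, `wake_roundtrip`) are hypothetical and chosen for this illustration.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};
use std::time::Duration;

// Waker that unparks the thread running `block_on`.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

// Hypothetical event source: a flag set by another thread, plus the waker to notify.
#[derive(Default)]
struct Shared {
    done: AtomicBool,
    waker: Mutex<Option<Waker>>,
}

struct FlagFuture(Arc<Shared>);

impl Future for FlagFuture {
    type Output = &'static str;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output> {
        // Register the waker first, then check the flag, so a wake-up cannot be missed.
        *self.0.waker.lock().unwrap() = Some(cx.waker().clone());
        if self.0.done.load(Ordering::Acquire) {
            Poll::Ready("event arrived")
        } else {
            Poll::Pending
        }
    }
}

// Minimal driver: poll, then sleep the thread until the waker fires.
fn block_on<F: Future>(future: F) -> F::Output {
    let mut future = pin!(future);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match future.as_mut().poll(&mut cx) {
            Poll::Ready(output) => return output,
            Poll::Pending => thread::park(),
        }
    }
}

fn wake_roundtrip() -> &'static str {
    let shared = Arc::new(Shared::default());
    let producer = Arc::clone(&shared);
    // Stands in for the OS or a timer delivering a notification.
    thread::spawn(move || {
        thread::sleep(Duration::from_millis(20));
        producer.done.store(true, Ordering::Release);
        if let Some(waker) = producer.waker.lock().unwrap().take() {
            waker.wake();
        }
    });
    block_on(FlagFuture(shared))
}
```

The driving thread does no work while it is parked. It only polls again once the producer has called `wake`, which is the behavior a real runtime provides for every task.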

How async/await Desugars

The async and await keywords are syntax sugar. The compiler transforms them into state machines that implement Future. Understanding this transformation is key to understanding async Rust behavior.

```mermaid
flowchart TD
    A["async fn fetch(url: &str) -> String"] -->|compiler transforms to| B["struct FetchFuture (state machine enum)"]
    B --> C[State0: Initial]
    B --> D["State1: Waiting for TCP connect (holds local vars)"]
    B --> E["State2: Waiting for HTTP response (holds local vars)"]
    B --> F[StateComplete: Done]
    C -->|first poll| D
    D -->|woken, poll again| E
    E -->|woken, poll again| F
    F -->|returns| G["Poll::Ready(String)"]
```

Each .await point in an async function becomes a state transition. Local variables that are alive across an await point get stored in the state machine. When one of those variables is a reference to another, the state machine points into itself, which is why async functions can produce self-referential structs (covered in Part 8 of the previous series) and why Pin is required to poll them.
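A state machine of this shape can also be written by hand. The sketch below is not what the compiler emits; it is a simplified, standard-library-only illustration of the same idea for a small function with two suspension points. `DoubleSum`, `drive`, and the `yield_once()` mentioned in the comment are hypothetical names.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// Hand-written equivalent of (yield_once() is a hypothetical "suspend once" helper):
//   async fn double_sum(a: u32, b: u32) -> u32 {
//       yield_once().await;   // suspension point 1
//       let sum = a + b;
//       yield_once().await;   // suspension point 2
//       sum * 2
//   }
enum DoubleSum {
    Start { a: u32, b: u32 },
    AfterFirstYield { a: u32, b: u32 }, // a and b are still needed, so they are kept
    AfterSecondYield { sum: u32 },      // only sum is needed from here on
    Done,
}

impl Future for DoubleSum {
    type Output = u32;

    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<u32> {
        // Sound here because every field is a plain integer (the type is Unpin).
        let this = self.get_mut();
        match *this {
            DoubleSum::Start { a, b } => {
                *this = DoubleSum::AfterFirstYield { a, b };
                cx.waker().wake_by_ref(); // ask to be polled again
                Poll::Pending
            }
            DoubleSum::AfterFirstYield { a, b } => {
                *this = DoubleSum::AfterSecondYield { sum: a + b };
                cx.waker().wake_by_ref();
                Poll::Pending
            }
            DoubleSum::AfterSecondYield { sum } => {
                *this = DoubleSum::Done;
                Poll::Ready(sum * 2)
            }
            DoubleSum::Done => panic!("future polled after completion"),
        }
    }
}

// Do-nothing waker; the driver below simply polls in a loop.
struct NoopWake;

impl Wake for NoopWake {
    fn wake(self: Arc<Self>) {}
}

/// Polls the state machine to completion; returns (output, number of polls).
fn drive(a: u32, b: u32) -> (u32, u32) {
    let waker = Waker::from(Arc::new(NoopWake));
    let mut cx = Context::from_waker(&waker);
    let mut fut = DoubleSum::Start { a, b };
    let mut polls = 0;
    loop {
        polls += 1;
        if let Poll::Ready(out) = Pin::new(&mut fut).poll(&mut cx) {
            return (out, polls);
        }
    }
}
```

`drive(3, 4)` returns `(14, 3)`: two polls end at a suspension point and the third produces the result. Note how each variant carries only the values that are still needed after that suspension point.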

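```rust
// What you write:
async fn example() -> String {
    let client = reqwest::Client::new();
    let response = client.get("https://example.com").send().await; // State1
    let text = response.unwrap().text().await; // State2
    text.unwrap()
}

// Conceptually what the compiler generates
// (SendFuture and TextFuture are placeholder names for the futures being awaited):
enum ExampleState {
    // Not polled yet: the body has not run, so no locals exist
    State0,
    // Suspended at the first .await
    State1 { client: reqwest::Client, send_future: SendFuture },
    // Suspended at the second .await; client stays alive until the function returns
    State2 { client: reqwest::Client, text_future: TextFuture },
    Done,
}

// The generated type implements Future and stores the current state
```

Why Rust Has No Built-In Runtime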

This surprises developers coming from Go (goroutines built in), Node.js (event loop built in), or Python (asyncio built in). Rust deliberately ships no async runtime in the standard library.

The reason is zero-cost abstractions. A built-in runtime means every Rust program pays for it – even embedded firmware, kernel modules, and CLI tools that do not need async I/O. By keeping the Future trait in std but the executor outside, Rust lets you choose the right runtime for your use case. Tokio for server applications. smol for lightweight tasks. async-executor for custom environments. No runtime at all for purely synchronous code.

The trade-off is ecosystem fragmentation. Libraries that depend on Tokio-specific types do not work with other runtimes. In practice, Tokio has become the de facto standard for server-side Rust, powering everything from backend services to databases. Most production backend code assumes Tokio.

The Executor and Reactor

An async runtime has two components:

- Executor: calls poll on futures, manages the task queue, decides which task to run next

- Reactor: interfaces with the OS event queue (epoll on Linux, kqueue on macOS, IOCP on Windows), receives I/O readiness notifications, wakes the relevant tasks
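To make the executor's half of that split concrete, here is a minimal sketch of a task queue in which waking a task simply puts it back on the queue. It uses only the standard library and assumes a toolchain where `std::sync::mpsc::Sender` is `Sync` (Rust 1.72 or later). `Task`, `Executor`, `YieldOnce`, and `run_two_tasks` are illustrative names, not Tokio's types, and there is no reactor: the demo future wakes itself.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::mpsc::{channel, Receiver, Sender};
use std::sync::{Arc, Mutex};
use std::task::{Context, Poll, Wake, Waker};

type BoxFuture = Pin<Box<dyn Future<Output = ()> + Send>>;

// A task owns its future and knows how to put itself back on the run queue.
struct Task {
    future: Mutex<Option<BoxFuture>>,
    queue: Sender<Arc<Task>>,
}

impl Wake for Task {
    fn wake(self: Arc<Self>) {
        // Waking means "schedule me again". Ignore the error if the executor is gone.
        let _ = self.queue.send(Arc::clone(&self));
    }
}

struct Executor {
    sender: Sender<Arc<Task>>,
    ready: Receiver<Arc<Task>>,
}

impl Executor {
    fn new() -> Self {
        let (sender, ready) = channel();
        Executor { sender, ready }
    }

    fn spawn(&self, future: impl Future<Output = ()> + Send + 'static) {
        let future: BoxFuture = Box::pin(future);
        let task = Arc::new(Task {
            future: Mutex::new(Some(future)),
            queue: self.sender.clone(),
        });
        let _ = self.sender.send(task);
    }

    // Polls ready tasks until the queue is empty. A real executor would block here
    // and let the reactor wake tasks instead of returning.
    fn run_until_idle(&self) {
        while let Ok(task) = self.ready.try_recv() {
            let waker = Waker::from(Arc::clone(&task));
            let mut cx = Context::from_waker(&waker);
            let mut slot = task.future.lock().unwrap();
            if let Some(mut future) = slot.take() {
                if future.as_mut().poll(&mut cx).is_pending() {
                    // Not finished: keep the future for the next wake-up.
                    *slot = Some(future);
                }
            }
        }
    }
}

// Hypothetical helper future: Pending once, after asking to be polled again.
struct YieldOnce(bool);

impl Future for YieldOnce {
    type Output = ();

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            return Poll::Ready(());
        }
        self.0 = true;
        cx.waker().wake_by_ref();
        Poll::Pending
    }
}

fn run_two_tasks() -> Vec<String> {
    let log = Arc::new(Mutex::new(Vec::new()));
    let executor = Executor::new();
    for name in ["a", "b"] {
        let log = Arc::clone(&log);
        executor.spawn(async move {
            log.lock().unwrap().push(format!("{name}:start"));
            YieldOnce(false).await;
            log.lock().unwrap().push(format!("{name}:end"));
        });
    }
    executor.run_until_idle();
    let result = log.lock().unwrap().clone();
    result
}
```

`run_two_tasks()` returns `a:start, b:start, a:end, b:end`. Task `a` suspends at its await point and goes to the back of the queue, so `b` gets to start before `a` finishes, all on a single thread.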

```rust
// The simplest possible single-threaded executor - illustrative only
use std::future::Future;
use std::pin::pin;
use std::task::{Context, Poll, RawWaker, RawWakerVTable, Waker};

// A waker that does nothing when woken; enough for a loop that polls continuously
fn noop_waker() -> Waker {
    unsafe fn clone(_: *const ()) -> RawWaker {
        RawWaker::new(std::ptr::null(), &VTABLE)
    }
    unsafe fn noop(_: *const ()) {}
    static VTABLE: RawWakerVTable = RawWakerVTable::new(clone, noop, noop, noop);
    // SAFETY: every vtable function ignores the data pointer, so null is fine
    unsafe { Waker::from_raw(RawWaker::new(std::ptr::null(), &VTABLE)) }
}

fn block_on<F: Future>(future: F) -> F::Output {
    // Pin the future to the stack
    let mut future = pin!(future);

    // Create a no-op waker for this simple example
    let waker = noop_waker();
    let mut cx = Context::from_waker(&waker);

    loop {
        match future.as_mut().poll(&mut cx) {
            Poll::Ready(output) => return output,
            Poll::Pending => {
                // A real executor would park here and wait for the reactor
                // to signal that work is available
                std::thread::yield_now();
            }
        }
    }
}

// Tokio's actual executor is far more sophisticated:
// - Work-stealing multi-threaded scheduler
// - Per-thread run queues with overflow to global queue
// - epoll/kqueue/IOCP integration
// - Timer wheel for efficient timer management
```

Concurrency vs Parallelism in Async Rust

Async Rust gives you concurrency – multiple tasks making progress in overlapping time periods. It does not automatically give you parallelism. On a single-threaded runtime, tasks take turns on one CPU core. On Tokio’s multi-threaded runtime, spawned tasks can also run in parallel across CPU cores.

```rust
use tokio::time::{sleep, Duration};

#[tokio::main]
async fn main() {
    // Sequential - each awaits before the next starts
    // Total time: ~300ms
    sleep(Duration::from_millis(100)).await;
    sleep(Duration::from_millis(100)).await;
    sleep(Duration::from_millis(100)).await;

    // Concurrent - all three run at the same time
    // Total time: ~100ms
    tokio::join!(
        sleep(Duration::from_millis(100)),
        sleep(Duration::from_millis(100)),
        sleep(Duration::from_millis(100)),
    );
}
```

The key point: .await alone does not make things concurrent. It suspends the current task and lets others run, but the awaited future runs to completion before the next line executes. For true concurrency, you need tokio::join!, tokio::select!, or tokio::spawn.
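The difference in ordering can be shown without timers. The sketch below uses only the standard library: `Join2` is a deliberately simplified stand-in for what `tokio::join!` does (it is not Tokio's implementation), `YieldOnce` is a hypothetical future that suspends once to imitate an I/O wait, and `block_on` is a busy-polling driver that is only suitable for a demo.

```rust
use std::cell::RefCell;
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

struct NoopWake;

impl Wake for NoopWake {
    fn wake(self: Arc<Self>) {}
}

// Hypothetical stand-in for an I/O wait: Pending on the first poll, Ready on the second.
struct YieldOnce(bool);

impl Future for YieldOnce {
    type Output = ();

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            return Poll::Ready(());
        }
        self.0 = true;
        cx.waker().wake_by_ref();
        Poll::Pending
    }
}

type LocalFuture<'a> = Pin<Box<dyn Future<Output = ()> + 'a>>;

fn boxed<'a>(future: impl Future<Output = ()> + 'a) -> LocalFuture<'a> {
    Box::pin(future)
}

// Simplified stand-in for a join: polls both futures on every pass.
struct Join2<'a> {
    a: Option<LocalFuture<'a>>,
    b: Option<LocalFuture<'a>>,
}

impl Future for Join2<'_> {
    type Output = ();

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        let this = &mut *self;
        if let Some(fut) = this.a.as_mut() {
            if fut.as_mut().poll(cx).is_ready() {
                this.a = None;
            }
        }
        if let Some(fut) = this.b.as_mut() {
            if fut.as_mut().poll(cx).is_ready() {
                this.b = None;
            }
        }
        if this.a.is_none() && this.b.is_none() {
            Poll::Ready(())
        } else {
            Poll::Pending
        }
    }
}

async fn step(name: &str, log: &RefCell<Vec<String>>) {
    log.borrow_mut().push(format!("{name}:start"));
    YieldOnce(false).await;
    log.borrow_mut().push(format!("{name}:end"));
}

// Busy-polling driver; fine here because every future is ready on its second poll.
fn block_on<F: Future>(future: F) -> F::Output {
    let mut future = pin!(future);
    let waker = Waker::from(Arc::new(NoopWake));
    let mut cx = Context::from_waker(&waker);
    loop {
        if let Poll::Ready(out) = future.as_mut().poll(&mut cx) {
            return out;
        }
    }
}

fn sequential() -> Vec<String> {
    let log = RefCell::new(Vec::new());
    block_on(async {
        step("a", &log).await;
        step("b", &log).await;
    });
    log.into_inner()
}

fn joined() -> Vec<String> {
    let log = RefCell::new(Vec::new());
    block_on(Join2 {
        a: Some(boxed(step("a", &log))),
        b: Some(boxed(step("b", &log))),
    });
    log.into_inner()
}
```

`sequential()` logs `a:start, a:end, b:start, b:end`, while `joined()` logs `a:start, b:start, a:end, b:end`. With back-to-back awaits, `b` does not even begin until `a` has finished. With the join, both are in flight during the same wait.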

When to Use Async and When Not To

Async Rust is the right tool for I/O-bound workloads: network servers, API clients, database connections, message queues. It is the wrong tool for CPU-bound work. A future that runs for 100ms without hitting an .await point blocks its thread, starving every other task on that thread.

Tokio offers little advantage for file reading and writing, because most operating systems lack true asynchronous file system APIs. Tokio’s fs module works by offloading file operations to a blocking thread pool rather than performing true async I/O.

For CPU-bound work from an async context, use tokio::task::spawn_blocking. This runs the closure on a separate blocking thread pool, keeping the async threads free. We cover this pattern in detail in Part 8.

What Comes Next

You now have the foundation: futures are lazy state machines, poll drives execution, wakers enable notification, and the runtime ties it all together. Part 2 goes inside Tokio specifically – the work-stealing scheduler, how epoll integration works, and the design decisions that make Tokio fast at scale.
