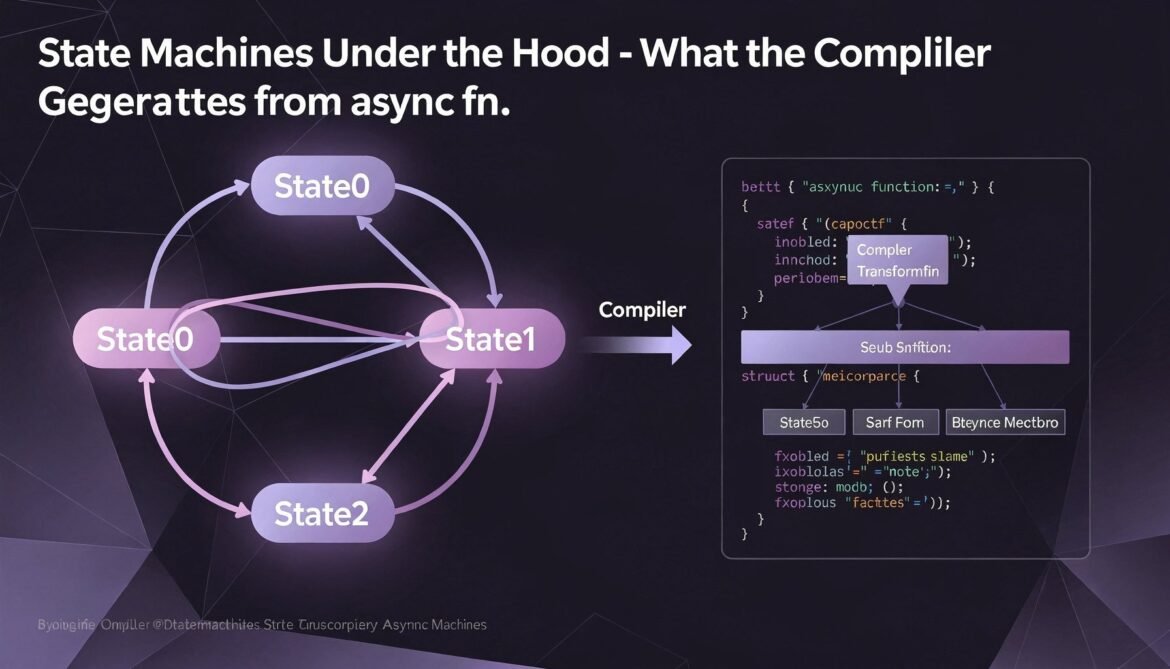

Parts 1 and 2 established that async functions compile into state machines. This post goes deeper: exactly what the compiler generates, why the generated structs can be large, and how understanding the transformation helps you write better async code.

The Transformation in Detail

Every async fn compiles to a struct that implements Future. The struct stores all variables that are alive across any .await point, plus a discriminant that tracks which state the function is currently in. Each .await point is a state.

```rust
use std::future::Future;
use std::pin::Pin;
use std::task::{Context, Poll};

// Source code:
async fn process(input: String) -> usize {
    let trimmed = input.trim().to_string(); // created before the first await
    let result = fetch_length(&trimmed).await; // State 0 -> State 1
    let doubled = result * 2; // alive across the second await (returned at the end)
    log_result(doubled).await; // State 1 -> Done
    doubled
}

// Conceptual compiler output (simplified; FetchLengthFuture and LogResultFuture
// are placeholder names for the futures returned by fetch_length and log_result):
enum ProcessState {
    // Suspended at the first await: holds every variable alive across it
    State0 {
        input: String,
        trimmed: String,
        fetch_future: FetchLengthFuture, // really borrows `trimmed`: a self-reference (see Pin below)
    },
    // Suspended at the second await: holds every variable alive across it
    State1 {
        doubled: usize,
        log_future: LogResultFuture,
    },
    // Terminal state
    Done,
}

struct ProcessFuture {
    state: ProcessState,
}

impl Future for ProcessFuture {
    type Output = usize;

    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<Self::Output> {
        // Pin::new and `self.state = ...` assume every type here is Unpin;
        // the real generated code uses unsafe pin projection instead.
        loop {
            match &mut self.state {
                ProcessState::State0 { fetch_future, .. } => match Pin::new(fetch_future).poll(cx) {
                    Poll::Ready(result) => {
                        let doubled = result * 2;
                        self.state = ProcessState::State1 {
                            doubled,
                            log_future: log_result(doubled),
                        };
                        // Loop continues to poll State1 immediately
                    }
                    Poll::Pending => return Poll::Pending,
                },
                ProcessState::State1 { log_future, doubled } => match Pin::new(log_future).poll(cx) {
                    Poll::Ready(()) => {
                        let result = *doubled;
                        self.state = ProcessState::Done;
                        return Poll::Ready(result);
                    }
                    Poll::Pending => return Poll::Pending,
                },
                ProcessState::Done => panic!("polled after completion"),
            }
        }
    }
}
```

The real compiler output uses unsafe code internally and is more compact (for example, it can reuse the same bytes for variables that are never alive at the same time), but this captures the essential structure. Every .await becomes a state, and every variable alive across an await is stored in that state's variant.
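To watch the transitions happen, here is a self-contained, runnable variant of the sketch above. It is a toy under stated assumptions, not compiler output. `YieldOnce`, `yield_once`, `process` and `NoopWaker` are hypothetical names. `YieldOnce` stands in for `fetch_length` and `log_result`: it returns `Pending` once and then completes. `NoopWaker` is a do-nothing waker that lets us poll the future by hand without a runtime.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// Hypothetical leaf future: returns Pending on the first poll, then Ready(value).
struct YieldOnce<T> {
    value: Option<T>,
    yielded: bool,
}

fn yield_once<T>(value: T) -> YieldOnce<T> {
    YieldOnce { value: Some(value), yielded: false }
}

impl<T: Unpin> Future for YieldOnce<T> {
    type Output = T;
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<T> {
        if self.yielded {
            Poll::Ready(self.value.take().expect("polled after completion"))
        } else {
            self.yielded = true;
            cx.waker().wake_by_ref(); // ask to be polled again
            Poll::Pending
        }
    }
}

// Hand-written state machine mirroring `process`; every field is Unpin.
enum ProcessState {
    State0 { fetch_future: YieldOnce<usize> },
    State1 { doubled: usize, log_future: YieldOnce<()> },
    Done,
}

struct ProcessFuture {
    state: ProcessState,
}

// Unlike a real async fn, this toy does the pre-await work eagerly, at construction.
fn process(input: String) -> ProcessFuture {
    let trimmed = input.trim().to_string();
    // Stand-in for fetch_length(&trimmed): resolves to the trimmed length.
    ProcessFuture { state: ProcessState::State0 { fetch_future: yield_once(trimmed.len()) } }
}

impl Future for ProcessFuture {
    type Output = usize;
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<usize> {
        loop {
            match &mut self.state {
                ProcessState::State0 { fetch_future } => match Pin::new(fetch_future).poll(cx) {
                    Poll::Ready(len) => {
                        let doubled = len * 2;
                        // Stand-in for log_result(doubled); no return, so the loop polls State1 now
                        self.state = ProcessState::State1 { doubled, log_future: yield_once(()) };
                    }
                    Poll::Pending => return Poll::Pending,
                },
                ProcessState::State1 { doubled, log_future } => match Pin::new(log_future).poll(cx) {
                    Poll::Ready(()) => {
                        let result = *doubled;
                        self.state = ProcessState::Done;
                        return Poll::Ready(result);
                    }
                    Poll::Pending => return Poll::Pending,
                },
                ProcessState::Done => panic!("polled after completion"),
            }
        }
    }
}

// Waker that does nothing: enough for polling by hand in a loop.
struct NoopWaker;
impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

fn main() {
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let mut fut = process("  hello  ".to_string());
    // Poll until Ready, printing each result.
    for n in 1.. {
        let result = Pin::new(&mut fut).poll(&mut cx);
        println!("poll #{n}: {result:?}");
        if result.is_ready() {
            break;
        }
    }
}
```

Running it prints `Pending` twice and then `Ready(10)`. The second poll finishes State0 and moves straight into State1 before it returns.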

Why State Machines Can Be Large

The size of the generated future is roughly the size of its largest state variant plus the discriminant. This means futures that hold large values across await points produce large structs:

```rust
async fn large_future() {
    let big_buffer = vec![0u8; 1_000_000]; // 1 MB on the heap, 24 bytes inline
    some_async_call().await; // big_buffer is alive here, so it is stored in the state
    process(&big_buffer);
}
// The state machine stores only the Vec itself: 24 bytes (pointer, capacity, length)
// on a 64-bit target. The heap data is not copied in, so despite its name this future is small.

async fn also_large() {
    let arr = [0u8; 65536]; // 64 KB - this IS stored inline in the state machine
    some_async_call().await; // arr is alive here, adding 64 KB to the state struct
    use_array(&arr);
}
// This future is ~64 KB. Storing it anywhere stack-allocated is risky.
```

Practical implication: avoid holding large stack-allocated arrays across await points. Prefer heap-allocated types (Vec, Box), whose inline footprint stays small no matter how much data they own: 24 bytes for a Vec and 8 bytes for a Box<[u8; N]> on a 64-bit target.
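The difference is easy to measure. In this sketch, `some_async_call` and `use_array` are hypothetical stand-ins for the functions used above, and `inline_array` and `heap_vec` are illustrative names. No runtime is needed, because calling an async fn only builds the future, and `std::mem::size_of_val` can measure it without polling.

```rust
use std::mem::size_of_val;

// Hypothetical stand-ins for the functions in the examples above.
async fn some_async_call() {}

fn use_array(arr: &[u8]) -> usize {
    arr.len()
}

// 64 KiB array held across an await: stored inline in the future.
async fn inline_array() -> usize {
    let arr = [0u8; 65536];
    some_async_call().await;
    use_array(&arr)
}

// Same amount of data behind a Vec: only the Vec's pointer/capacity/length is stored.
async fn heap_vec() -> usize {
    let v = vec![0u8; 65536];
    some_async_call().await;
    use_array(&v)
}

fn main() {
    // Creating the futures runs none of their code; we only measure them.
    let a = inline_array();
    let b = heap_vec();
    println!("inline_array future: {} bytes", size_of_val(&a));
    println!("heap_vec future:     {} bytes", size_of_val(&b));
}
```

Exact numbers depend on the compiler and target. The first future is at least 64 KiB, while the second is a few dozen bytes at most.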

Visualizing the State Machine

```mermaid
stateDiagram-v2
    [*] --> State0: async fn called (future created)
    State0 --> State0: poll() returns Pending\n(inner future not ready)
    State0 --> State1: poll() inner future returns Ready\n(transition, loop continues)
    State1 --> State1: poll() returns Pending\n(second inner future not ready)
    State1 --> Done: poll() inner future returns Ready\nreturn Poll::Ready(output)
    Done --> [*]

    note right of State0
        Stores: all variables live
        across first await point
    end note

    note right of State1
        Stores: all variables live
        across second await point
    end note
```

The Self-Referential Problem and Pin

The generated state machine can be self-referential. Consider:

```rust
async fn self_ref_example() {
    let data = String::from("hello");
    let reference = &data; // borrows `data`, which lives inside the future itself
    some_async_call().await; // both data and reference are stored in State0
    println!("{}", reference);
}

// State0 stores:
// - data: String (the owned string)
// - reference: &String (points INTO the future's own storage, at `data`)
// - inner_future: ...
//
// If this struct moves in memory, data moves with it, but reference still
// points to the old location - a dangling pointer!
```

This is exactly why Future::poll takes Pin<&mut Self>. The compiler does not try to work out which borrows are actually self-referential. Every future produced by an async fn or async block is !Unpin, so it must be pinned before it can be polled, and once pinned it is guaranteed not to move again until it is dropped. The runtime pins each future before calling poll for the first time and never moves it afterward.
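Here is a minimal sketch of what a runtime does, using only the standard library. It is not tokio's API: `block_on`, `ThreadWaker` and `YieldNow` are hypothetical names. `block_on` pins the future on its own stack with `std::pin::pin!` (stable since Rust 1.68) and then polls it in place until it completes. `YieldNow` stands in for `some_async_call`, returning `Pending` once. Because the future never moves after pinning, the borrow of `data` held across the await stays valid.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread;

// Waker that unparks the thread running block_on.
struct ThreadWaker(thread::Thread);
impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

// Hypothetical stand-in for some_async_call(): Pending once, then Ready.
struct YieldNow(bool);
impl Future for YieldNow {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            cx.waker().wake_by_ref(); // schedule another poll
            Poll::Pending
        }
    }
}

// Variant of self_ref_example that returns its result instead of printing it.
async fn self_ref_example() -> String {
    let data = String::from("hello");
    let reference = &data; // borrow of a local that lives inside the future
    YieldNow(false).await; // data and reference are both stored across this await
    format!("{reference} world")
}

// Toy executor: pin once, then poll in place until Ready.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut); // Pin<&mut F>: from here on, the future cannot be moved
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(out) => return out,
            Poll::Pending => thread::park(), // sleep until wake() unparks us
        }
    }
}

fn main() {
    println!("{}", block_on(self_ref_example()));
}
```

The call returns "hello world". The future of `self_ref_example` is !Unpin, so `Pin::new(&mut fut)` would be rejected; pinning through `pin!` (or `Box::pin`) is what makes polling possible.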

Examining Real State Machine Sizes

use std::mem::size_of_val;

async fn small_future() -> u32 {

tokio::time::sleep(std::time::Duration::from_millis(1)).await;

42

}

async fn larger_future() -> Vec {

let data = vec![0u8; 100]; // heap-allocated, 24 bytes on stack

tokio::time::sleep(std::time::Duration::from_millis(1)).await;

data

}

async fn measure() {

let f1 = small_future();

let f2 = larger_future();

println!("small_future size: {} bytes", size_of_val(&f1));

println!("larger_future size: {} bytes", size_of_val(&f2));

// Run them to avoid warnings

let _ = tokio::join!(f1, f2);

}

// small_future: typically ~32-64 bytes (timer state + output slot)

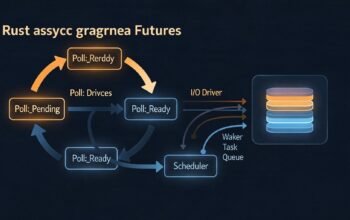

// larger_future: slightly more (adds Vec's 24 bytes to carry across await) The Loop Behavior in Poll

Notice that the poll implementation above uses a loop. It models an important property of the generated code. When an inner future becomes Ready and triggers a state change, the outer future immediately continues into the next state in the same poll call, without returning Pending first. This avoids an unnecessary round-trip through the scheduler.

```text
// Without the loop (naive implementation):
// State0 ready -> return Pending -> scheduler wakes task -> poll -> State1 starts
// Extra round-trip through the scheduler

// With the loop (actual behavior):
// State0 ready -> immediately poll State1 in the same poll() call
// State1 pending -> return Pending
// No unnecessary scheduler round-trip
```

This loop runs until either a state returns Pending (no further progress is possible right now) or the future completes. It is also the source of one important pitfall: poll does not return until one of those two things happens. If the code never reaches a Pending inner future, the poll call keeps running and blocks the thread. Examples are a tight computation loop with no .await, or a run of awaits that are always immediately ready. We cover this in Part 8 on common pitfalls.
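Here is a small way to observe this, sketched with only the standard library. `three_steps`, `polls_to_complete` and `NoopWaker` are hypothetical names. `three_steps` awaits three futures from `std::future::ready`, each of which is Ready on its first poll. `polls_to_complete` counts how many poll calls the whole future needs. Every state transition happens inside a single poll, which also illustrates the pitfall: the caller gets no control back until the future finishes or hits a Pending.

```rust
use std::future::{ready, Future};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// Waker that does nothing: enough for polling by hand.
struct NoopWaker;
impl Wake for NoopWaker {
    fn wake(self: Arc<Self>) {}
}

// Three awaits on futures that are already Ready.
async fn three_steps() -> u32 {
    let a = ready(1).await;
    let b = ready(2).await;
    let c = ready(3).await;
    a + b + c
}

// Counts how many poll() calls a future needs to finish.
fn polls_to_complete<F: Future>(fut: F) -> (F::Output, usize) {
    let mut fut = Box::pin(fut);
    let waker = Waker::from(Arc::new(NoopWaker));
    let mut cx = Context::from_waker(&waker);
    let mut polls = 0;
    loop {
        polls += 1;
        if let Poll::Ready(out) = fut.as_mut().poll(&mut cx) {
            return (out, polls);
        }
    }
}

fn main() {
    let (sum, polls) = polls_to_complete(three_steps());
    println!("sum = {sum}, polls = {polls}");
}
```

Running it prints `sum = 6, polls = 1`: all three await points are passed within one call to poll.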

Nested Futures and Composition

Futures compose recursively. When you .await an inner async function, its state machine is nested inside the outer state machine’s state variant:

```rust
async fn inner() -> u32 {
    tokio::time::sleep(std::time::Duration::from_millis(10)).await;
    42
}

async fn outer() -> u32 {
    let x = inner().await; // InnerFuture stored in OuterFuture's State0
    x + 1
}

// OuterFuture::State0 contains:
//   inner_future: InnerFuture (which itself contains timer state)
//
// Polling outer polls inner, which polls the timer.
// The entire chain executes in a single thread with no allocation.
```

This recursive nesting is what makes async Rust zero-cost. There is no heap allocation per await point, no dynamic dispatch, and no garbage collection. The entire call stack is a single struct stored in whatever memory the outermost future lives in.
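The nesting can be checked with `size_of_val`. In this sketch, `inner`, `outer` and `outer_boxed` are hypothetical, and `std::future::ready` stands in for the timer so no runtime is needed. `inner` holds a 16 KiB array across an await. `outer` awaits it directly, so the inner state machine is embedded inline. `outer_boxed` awaits it through `Box::pin`, which moves the inner state machine to the heap and leaves only a pointer inline.

```rust
use std::future::ready;
use std::mem::size_of_val;

// Hypothetical inner async fn holding a 16 KiB array across an await.
async fn inner() -> u32 {
    let buf = [1u8; 16 * 1024];
    ready(()).await;
    buf.iter().map(|&b| b as u32).sum()
}

// Awaits inner() directly: inner's future is stored inline in outer's state.
async fn outer() -> u32 {
    let x = inner().await;
    x + 1
}

// Awaits a boxed inner future: only the Box pointer is stored inline.
async fn outer_boxed() -> u32 {
    let boxed = Box::pin(inner()); // inner's state machine now lives on the heap
    let x = boxed.await;
    x + 1
}

fn main() {
    // Futures are only constructed and measured here, never polled.
    println!("inner:       {} bytes", size_of_val(&inner()));
    println!("outer:       {} bytes", size_of_val(&outer()));
    println!("outer_boxed: {} bytes", size_of_val(&outer_boxed()));
}
```

Sizes vary by compiler version and target. Still, `outer` is always at least as large as `inner`, while `outer_boxed` stays close to pointer size.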

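Using cargo-expand and -Zprint-type-sizes to See Generated Code

```rust
// cargo-expand shows macro-expanded source. Install it with:
//   cargo install cargo-expand
// Then run:
//   cargo expand --bin myapp 2>/dev/null | grep -A 50 "async fn my_function"
// Note: async fn bodies still appear as written, because the compiler lowers them
// into state machines after macro expansion. To see the generated layouts
// (variants and their sizes), use the nightly-only rustc flag:
//   cargo +nightly rustc --bin myapp -- -Zprint-type-sizes

// For a simpler view, check state machine sizes from code. Async fn futures
// have anonymous types, so size_of::<T>() cannot name them; measure an
// instance with size_of_val instead:
fn check_sizes() {
    println!("Future sizes:");
    println!("  small: {}", std::mem::size_of_val(&small_future()));
}

// Practical tip: if a future is unexpectedly large,
// box it to move it to the heap:
async fn large_caller() {
    // Instead of:
    // let data = large_async_fn().await;
    // use Box::pin to heap-allocate the callee's state machine,
    // so this future stores only a pointer to it:
    let _data = Box::pin(large_async_fn()).await;
}

async fn large_async_fn() -> Vec<u8> {
    vec![]
}
```

What Comes Next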

With the state machine model clear, Part 4 moves to tasks: how to spawn them, manage their lifetimes, handle their results, and cancel them. Understanding state machines makes task cancellation behavior much less surprising.
