Part 1 explained the Future trait and poll model. You know futures are lazy state machines and that a runtime drives them by calling poll. Now we go inside Tokio itself – the work-stealing scheduler, how it integrates with OS event queues, and the architectural decisions that make it fast under real load.

Tokio Is More Than a Runtime

Most documentation describes Tokio as an async runtime. That undersells it. Tokio is a full platform for async I/O in Rust, providing a multi-threaded work-stealing scheduler, OS event queue integration, async TCP/UDP/Unix sockets, timers, channels, synchronization primitives, filesystem utilities, process management, and signal handling. It is closer to a framework than a library.

At its core, Tokio has two thread pools:

- Core threads (worker threads): where all async tasks run. By default one per CPU core. These poll futures and switch between tasks at await points.

- Blocking threads: spawned on demand for blocking operations. Used by tokio::task::spawn_blocking and tokio::fs. Kept alive for a configurable duration after use.

    use tokio::runtime::Builder;

    // Multi-threaded runtime (the flavor #[tokio::main] creates),
    // here with every setting spelled out explicitly
    let rt = Builder::new_multi_thread()
        .worker_threads(4) // core async threads
        .max_blocking_threads(512) // blocking thread pool cap
        .thread_keep_alive(std::time::Duration::from_secs(60))
        .thread_stack_size(2 * 1024 * 1024) // 2MB stack per thread
        .enable_all() // I/O and time drivers
        .build()
        .unwrap();

    // Single-threaded runtime - useful for testing and constrained environments
    let rt = Builder::new_current_thread()
        .enable_all()
        .build()
        .unwrap();

The Work-Stealing Scheduler

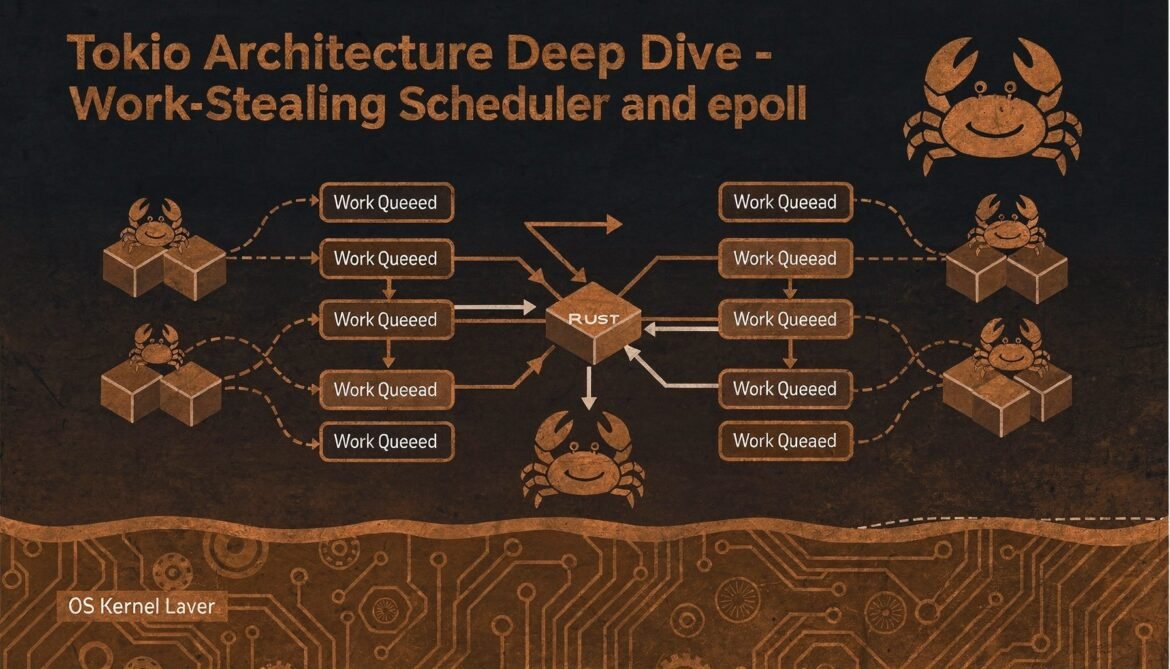

Tokio uses a work-stealing scheduler. Each worker thread has its own local run queue. When a task is spawned from a worker thread, it goes into that thread’s local queue; a task spawned from outside the runtime goes to the shared global queue. When a thread’s local queue is empty, it tries to steal tasks from other threads’ queues or the global overflow queue.

    flowchart TD
        subgraph Thread1 ["Worker Thread 1"]
            LQ1[Local Queue\nTask A, Task B]
            T1[Execute Task]
        end
        subgraph Thread2 ["Worker Thread 2"]
            LQ2[Local Queue\nEmpty]
            T2[Steal from Thread 1]
        end
        subgraph Thread3 ["Worker Thread 3"]
            LQ3[Local Queue\nTask C]
            T3[Execute Task]
        end
        GQ[Global Overflow Queue] --> LQ1
        GQ --> LQ3
        LQ1 -->|steal half| LQ2
        Spawn[tokio::spawn] --> LQ1
        IO[I/O Wakeup] --> GQ

Work stealing keeps all CPU cores busy when load is uneven. If one thread has a backlog and another is idle, the idle thread steals work rather than spinning. The steal threshold is typically half the source queue – stealing too little means repeated steals, stealing too much risks cache thrashing.
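To make the "steal half" idea concrete, here is a minimal single-threaded sketch. It is a toy model, not Tokio's implementation: the Worker type and steal_half function are hypothetical names, the queue is a plain VecDeque rather than a lock-free ring buffer, and rounding the half upward is an assumption made for the example.

```rust
use std::collections::VecDeque;

/// A toy worker that owns a local run queue of task names (hypothetical type).
pub struct Worker {
    pub local: VecDeque<&'static str>,
}

/// Move half of the victim's queued tasks (rounded up) to the thief.
/// Returns how many tasks were moved.
pub fn steal_half(victim: &mut Worker, thief: &mut Worker) -> usize {
    let n = (victim.local.len() + 1) / 2;
    // Take from the front of the queue: these tasks have waited longest.
    thief.local.extend(victim.local.drain(..n));
    n
}
```

With five tasks A to E queued on the busy worker, one call moves A, B, and C to the idle worker and leaves D and E behind, so a single steal balances the two queues instead of requiring several small ones.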

The implication for you as a developer: task locality matters. Tasks that are spawned together and communicate frequently may benefit from running on the same thread. But you cannot control placement directly – Tokio’s scheduler handles it. What you can control is how you structure task communication to minimize cross-thread coordination.

The I/O Driver: epoll, kqueue, IOCP

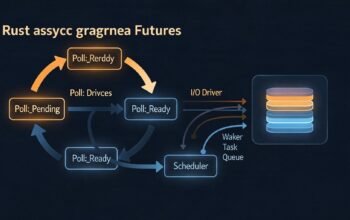

Tokio’s reactor component integrates with the OS event notification system. On Linux this is epoll, on macOS kqueue, on Windows IOCP. These are kernel mechanisms that notify a process when file descriptors (sockets, pipes, etc.) are ready for reading or writing without requiring a thread to block.

    use tokio::io::{AsyncReadExt, AsyncWriteExt};
    use tokio::net::TcpListener;

    #[tokio::main]
    async fn main() -> Result<(), Box<dyn std::error::Error>> {
        let listener = TcpListener::bind("0.0.0.0:8080").await?;

        loop {
            let (mut socket, _addr) = listener.accept().await?;

            // Each connection gets its own task
            // No thread blocked waiting - the reactor wakes this task
            // when the socket has data
            tokio::spawn(async move {
                let mut buf = vec![0u8; 1024];
                loop {
                    match socket.read(&mut buf).await {
                        Ok(0) => break, // connection closed
                        Ok(n) => {
                            // Echo the bytes back; stop on a write error
                            // instead of panicking the task
                            if socket.write_all(&buf[..n]).await.is_err() {
                                break;
                            }
                        }
                        Err(_) => break,
                    }
                }
            });
        }
    }

When socket.read().await finds no data available, the future returns Pending and leaves the task’s waker with the reactor, which watches the socket’s file descriptor through epoll for read-readiness. When data arrives, the kernel notifies Tokio’s reactor, which wakes the task; the task is then added to a run queue and polled again.

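This entire path – from kernel event to task execution – needs no thread per connection and no thread blocked on an individual socket. The reactor is a lightweight event dispatcher: in the multi-threaded runtime it is driven by a worker thread that has run out of tasks, not by a dedicated thread that blocks on each socket.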
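The hand-off between a pending read and the later wakeup can be sketched with the standard library alone. This is a simplified model under stated assumptions, not Tokio's reactor: Reactor, register, dispatch, and CountingTask are hypothetical names, tokens stand in for file descriptors, and the list passed to dispatch plays the role of the events an epoll wait would return.

```rust
use std::collections::HashMap;
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::Arc;
use std::task::{Wake, Waker};

/// Toy reactor: maps a socket token to the waker of the task waiting on it.
#[derive(Default)]
pub struct Reactor {
    waiting: HashMap<usize, Waker>,
}

impl Reactor {
    /// Called when a read returns Pending: remember which task to wake.
    pub fn register(&mut self, token: usize, waker: Waker) {
        self.waiting.insert(token, waker);
    }

    /// Called with the tokens the OS reported as ready.
    /// Wakes each waiting task once and returns how many were woken.
    pub fn dispatch(&mut self, ready: &[usize]) -> usize {
        let mut woken = 0;
        for token in ready {
            if let Some(waker) = self.waiting.remove(token) {
                waker.wake();
                woken += 1;
            }
        }
        woken
    }
}

/// Stand-in for a task: counts how many times it was woken.
#[derive(Default)]
pub struct CountingTask {
    pub wakeups: AtomicUsize,
}

impl Wake for CountingTask {
    fn wake(self: Arc<Self>) {
        self.wakeups.fetch_add(1, Ordering::SeqCst);
    }
}
```

In a real runtime the wake call would push the task onto a run queue rather than bump a counter. The point of the sketch is that readiness events for sockets nobody is waiting on cost nothing, and each waiting task is woken exactly once per registration.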

The Timer Wheel

Tokio manages timers using a hierarchical timer wheel – a data structure that allows O(1) timer insertion and efficient expiry checking. Rather than maintaining a sorted heap of all timers (expensive at scale), the wheel divides time into slots at multiple levels of granularity.
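The constant-time insertion is easiest to see in a single-level wheel. The sketch below is an illustration only: TimerWheel is a hypothetical type, it assumes at least one slot and a tick unit chosen by the caller, and it keeps far-away timers in their slot until their rotation comes around, whereas a hierarchical wheel would place them in a coarser level instead.

```rust
/// Toy single-level timer wheel with one slot per tick (hypothetical type).
pub struct TimerWheel {
    slots: Vec<Vec<(u64, u32)>>, // (deadline tick, timer id)
    now: u64,
}

impl TimerWheel {
    /// Create a wheel; `num_slots` is assumed to be greater than zero.
    pub fn new(num_slots: usize) -> Self {
        Self { slots: vec![Vec::new(); num_slots], now: 0 }
    }

    /// O(1) insert: the slot index is computed directly from the deadline.
    pub fn insert(&mut self, id: u32, delay_ticks: u64) {
        let deadline = self.now + delay_ticks.max(1);
        let slot = (deadline % self.slots.len() as u64) as usize;
        self.slots[slot].push((deadline, id));
    }

    /// Advance one tick and return the ids of the timers that expired.
    pub fn tick(&mut self) -> Vec<u32> {
        self.now += 1;
        let now = self.now;
        let idx = (now % self.slots.len() as u64) as usize;
        let mut fired = Vec::new();
        // Only one slot is inspected per tick. Timers that share the slot
        // but are due in a later rotation stay where they are.
        self.slots[idx].retain(|&(deadline, id)| {
            if deadline <= now {
                fired.push(id);
                false
            } else {
                true
            }
        });
        fired
    }
}
```

On an 8-slot wheel, timers with delays of 3 and 11 ticks land in the same slot; the first fires on tick 3 and the second one full rotation later, on tick 11. No sorting happens at any point, which is the property that makes the structure attractive when there are many timers.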

    use tokio::time::{interval, sleep, timeout, Duration};

    async fn timer_patterns() {
        // Simple delay
        sleep(Duration::from_millis(100)).await;

        // Timeout wrapper - cancel operation if it takes too long
        match timeout(Duration::from_secs(5), expensive_operation()).await {
            Ok(result) => println!("Completed: {:?}", result),
            Err(_) => println!("Timed out"),
        }

        // Interval - fires repeatedly at fixed periods
        // (the first tick completes immediately)
        let mut ticker = interval(Duration::from_secs(1));
        for _ in 0..5 {
            ticker.tick().await; // waits for next tick
            println!("tick");
        }
    }

    async fn expensive_operation() -> String {
        sleep(Duration::from_secs(2)).await;
        String::from("result")
    }

Tokio’s timer resolution is 1ms. For higher-precision timing you need OS-specific mechanisms. For intervals, note that by default interval catches up on missed ticks (MissedTickBehavior::Burst): if your handler takes 3 seconds and the interval is 1 second, the next three ticks fire immediately. You can change this by calling set_missed_tick_behavior(MissedTickBehavior::Skip) on the interval.
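The difference between catching up and skipping comes down to how the next deadline is computed after a late tick. The following is a small model of that arithmetic, not Tokio's code: the Missed enum and next_deadline function are hypothetical, times are plain milliseconds, and the function assumes that now is not earlier than scheduled and that period is non-zero.

```rust
/// Policies named after two variants of tokio::time::MissedTickBehavior.
#[derive(Clone, Copy, Debug, PartialEq)]
pub enum Missed {
    Burst,
    Skip,
}

/// Given the deadline of the tick that just fired (`scheduled`), the time it
/// was actually observed (`now`), and the period, all in milliseconds,
/// return the deadline of the next tick.
pub fn next_deadline(policy: Missed, scheduled: u64, now: u64, period: u64) -> u64 {
    match policy {
        // Keep the original schedule; overdue ticks fire back to back.
        Missed::Burst => scheduled + period,
        // Drop the missed ticks and rejoin the original schedule.
        Missed::Skip => now + period - (now - scheduled) % period,
    }
}
```

Suppose a tick scheduled for 1000 ms is only observed at 3200 ms with a 1000 ms period. Under the burst policy the next deadline is 2000 ms, which is already in the past, so the tick fires at once. Under the skip policy the next deadline is 4000 ms, the next point on the original grid.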

Task Scheduling Internals

When a task is woken (by a waker being called), it is pushed to a run queue. Tokio’s multi-threaded scheduler uses two kinds of queue:

- Local queue: bounded, per-thread, lock-free FIFO. Fast path for most task scheduling.

- Global queue (called the injection queue in Tokio’s source): unbounded and shared. It receives tasks when a local queue overflows, tasks spawned from outside the runtime, and wakeups that happen on non-worker threads (e.g. from blocking thread pool results).

Worker threads follow a priority order: check local queue, then check injection queue, then attempt to steal from other threads. This ordering minimizes latency for local work while ensuring global tasks are not starved.
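The priority order can be written down as a single function. This is a simplified, single-threaded sketch under stated assumptions: next_task and Source are hypothetical names, tasks are plain integers, queues are VecDeques with no locking, and a steal takes one task from the first sibling that has work rather than a batch.

```rust
use std::collections::VecDeque;

/// Where the next task came from.
#[derive(Debug, PartialEq)]
pub enum Source {
    Local,
    Injection,
    Stolen,
}

/// Pick the next task for worker `me`: local queue first, then the shared
/// injection queue, then steal from the first sibling that has work.
pub fn next_task(
    me: usize,
    locals: &mut [VecDeque<u32>],
    injection: &mut VecDeque<u32>,
) -> Option<(u32, Source)> {
    if let Some(task) = locals[me].pop_front() {
        return Some((task, Source::Local));
    }
    if let Some(task) = injection.pop_front() {
        return Some((task, Source::Injection));
    }
    for victim in 0..locals.len() {
        if victim != me {
            if let Some(task) = locals[victim].pop_front() {
                return Some((task, Source::Stolen));
            }
        }
    }
    // Nothing anywhere: a real worker would park here until woken.
    None
}
```

A worker that keeps calling this function drains its own queue before it touches shared state, which is why the common path stays cheap, and only reaches into a sibling's queue when both its own queue and the shared queue are empty.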

Configuring the Runtime for Production

    use tokio::runtime::Builder;

    // Note: num_cpus and tracing are external crates
    fn build_production_runtime() -> tokio::runtime::Runtime {
        Builder::new_multi_thread()
            // Match CPU count for I/O-bound workloads
            // Consider fewer threads for CPU-bound mixed workloads
            .worker_threads(num_cpus::get())
            // Generous blocking thread pool for database calls, file I/O
            .max_blocking_threads(256)
            // Keep blocking threads alive to avoid spawn overhead on bursts
            .thread_keep_alive(std::time::Duration::from_secs(30))
            // Name threads for easier debugging in profiles and stack traces
            .thread_name("myapp-worker")
            // Enable both I/O driver and time driver
            .enable_all()
            // Optional: hook for metrics
            .on_thread_start(|| {
                tracing::debug!("worker thread started");
            })
            .on_thread_stop(|| {
                tracing::debug!("worker thread stopped");
            })
            .build()
            .expect("failed to build runtime")
    }

Single-Threaded vs Multi-Threaded Runtime

The choice between new_current_thread() and new_multi_thread() has significant implications:

    // Single-threaded: tasks spawned with spawn_local inside a LocalSet
    // do not need to be Send (tokio::spawn still requires Send)
    // Good for: testing, embedded, single-connection processing
    #[tokio::main(flavor = "current_thread")]
    async fn main() {
        // spawn_local panics outside a LocalSet, so run the body inside one
        let local = tokio::task::LocalSet::new();
        local
            .run_until(async {
                // Rc, *mut T, and other non-Send types work fine in local tasks
                let rc = std::rc::Rc::new(42);
                tokio::task::spawn_local(async move {
                    println!("{}", rc); // Rc is not Send, only works with spawn_local
                })
                .await
                .unwrap();
            })
            .await;
    }

    // Multi-threaded (a separate program): all spawned tasks must be Send + 'static
    // Good for: production servers, maximum throughput
    #[tokio::main]
    async fn main() {
        // Arc instead of Rc because tasks may move between threads
        let arc = std::sync::Arc::new(42);
        tokio::spawn(async move {
            println!("{}", arc); // Arc is Send
        })
        .await
        .unwrap();
    }

The Send requirement on tasks spawned onto the multi-threaded runtime is not an arbitrary Tokio choice – it is a consequence of work stealing. A task can migrate between threads at any await point, so it must be safe to send across thread boundaries. The 'static bound has a different cause: a spawned task can outlive the scope that spawned it, so it cannot borrow from that scope.
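The same constraint can be observed without Tokio, because std::thread::spawn places analogous Send + 'static bounds on the closure it runs. The sketch below uses only the standard library; assert_send and read_on_other_thread are hypothetical helper names introduced for the illustration.

```rust
use std::sync::Arc;
use std::thread;

/// Compiles only for types that are allowed to cross thread boundaries.
pub fn assert_send<T: Send>(_value: &T) {}

/// Move a shared value into another thread and read it there.
pub fn read_on_other_thread(shared: Arc<i32>) -> i32 {
    assert_send(&shared); // Arc<i32> is Send
    // The closure is moved to a new thread, so everything it captures
    // must be Send + 'static. Replacing Arc with Rc here would be
    // rejected at compile time.
    thread::spawn(move || *shared)
        .join()
        .expect("thread panicked")
}
```

Calling read_on_other_thread(Arc::new(42)) returns 42. The interesting part is what does not compile: the compiler rejects the Rc version before the program ever runs, which is the same check that tokio::spawn relies on.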

The #[tokio::main] Macro Expansion

Understanding what #[tokio::main] generates demystifies runtime initialization:

    // What you write:
    #[tokio::main]
    async fn main() {
        println!("hello");
    }

    // Roughly what the macro generates:
    fn main() {
        tokio::runtime::Builder::new_multi_thread()
            .enable_all()
            .build()
            .unwrap()
            .block_on(async {
                println!("hello");
            })
    }

    // For tests:
    #[tokio::test]
    async fn my_test() {
        // Each test gets its own runtime instance
        // (single-threaded by default)
        // Isolation between tests
    }

block_on runs a future to completion on the calling thread. It is the bridge between synchronous Rust (your main function) and the async world. You can call block_on yourself when you need to run async code from a synchronous context – but only from outside the runtime. Calling block_on from within an async task will panic.
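What block_on does at its simplest can be shown with the standard library alone. This is a minimal sketch, not Tokio's block_on: it polls the future on the calling thread and parks the thread while the future is pending, but it has no I/O driver, no timers, and no scheduler. ThreadWaker is a hypothetical helper type.

```rust
use std::future::Future;
use std::pin::pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

/// A waker that unparks the thread blocked in `block_on`.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// Run a future to completion on the calling thread.
pub fn block_on<F: Future>(fut: F) -> F::Output {
    // Pin the future on the stack so it can be polled.
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(value) => return value,
            // Sleep until some waker calls unpark, then poll again.
            Poll::Pending => thread::park(),
        }
    }
}
```

For example, block_on(async { 1 + 2 }) returns 3. The loop also suggests why blocking inside an async task is a problem: the thread that would be parked is the one the runtime needs in order to make progress on other tasks.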

What Comes Next

Part 3 goes deeper on state machines – specifically what the Rust compiler generates from your async functions. Understanding the generated code explains why certain patterns cause compiler errors, why some futures are large, and how to write async code that compiles to efficient state machines.
