Parts 2 through 4 covered prompt caching at the model layer: prefix matching inside Claude, GPT-5.4, and Gemini that reduces token processing costs when your prompt starts with the same bytes. Semantic caching operates at a different layer. It sits in front of the LLM and asks a different question: has someone already asked something close enough to this that we can return the cached answer without calling the model at all?

The difference matters because semantic caching does not require exact matches. It uses vector embeddings to measure how similar two queries are, and if the similarity exceeds a configured threshold, it returns the stored response. For applications with repetitive query patterns, like customer support bots, internal knowledge tools, or FAQ systems, hit rates above 80 percent are achievable. At that level, you are eliminating the majority of your LLM calls entirely, not just reducing token costs on the ones you make.

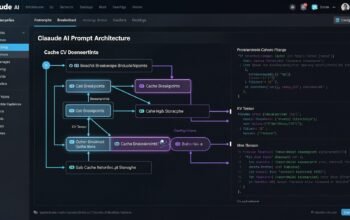

This part covers the full architecture, how to choose and tune similarity thresholds, cache invalidation strategies, and complete production implementations in both Node.js and Python using Redis 8.6 as the vector store.

How Semantic Caching Works

The core mechanism has four steps. When a query arrives, you generate a vector embedding for it using an embedding model. You then search your Redis vector index for the most similar embedding you have stored from previous queries. If the similarity score exceeds your threshold, you return the stored response without calling the LLM. If it does not, you call the LLM, store the response alongside the query embedding, and return the result.

The embedding model is the key component. It converts your query text into a fixed-length vector that captures semantic meaning, not just keywords. Two queries like “How do I reset my password?” and “I forgot my password, what do I do?” will produce embeddings that are very close in vector space even though they share almost no words. A keyword-based cache would miss this entirely. A semantic cache catches it.
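The four steps can be sketched in a few lines of Python. This is a minimal illustration, not the production implementation shown later: a linear scan stands in for the Redis vector index, and `InMemorySemanticCache`, `answer`, `embed`, and `call_llm` are hypothetical names introduced here, with the embedding function and LLM call injected by the caller.

```python
import math
from typing import Callable, Optional

Vector = list[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class InMemorySemanticCache:
    """Toy cache: a linear scan stands in for the Redis vector index."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.92):
        self.embed = embed
        self.threshold = threshold
        self.entries: list[tuple[Vector, str]] = []

    def lookup(self, query: str) -> Optional[str]:
        # Steps 1-3: embed the query, find the nearest stored entry,
        # and return its response only if it clears the threshold.
        vec = self.embed(query)
        best_score, best_response = -1.0, None
        for stored_vec, response in self.entries:
            score = cosine_similarity(vec, stored_vec)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def store(self, query: str, response: str) -> None:
        # Step 4 (miss path): keep the query embedding next to the response.
        self.entries.append((self.embed(query), response))


def answer(cache: InMemorySemanticCache, query: str, call_llm: Callable[[str], str]):
    """Return (response, source), calling the LLM only on a cache miss."""
    cached = cache.lookup(query)
    if cached is not None:
        return cached, "cache"
    response = call_llm(query)
    cache.store(query, response)
    return response, "llm"
```

The injected `embed` callable keeps the sketch independent of any particular embedding provider, which also makes the hit and miss paths easy to unit test with hand-written vectors.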

    flowchart TD
        A[User Query] --> B[Generate Embedding\nvia Embedding Model]
        B --> C{Vector Similarity Search\nin Redis}
        C -->|Similarity above threshold\ne.g. 0.92| D[Return Cached Response\nNo LLM call]
        C -->|Similarity below threshold\nNo close match| E[Call LLM API]
        E --> F[Store query embedding\n+ response in Redis]
        F --> G[Return Response to User]
        D --> G
        style D fill:#22c55e,color:#fff
        style E fill:#ef4444,color:#fff
        style F fill:#3b82f6,color:#fff

Why Redis 8.6 for Semantic Caching

Redis 8.6 introduces optimised memory usage for hash and sorted set data types, reducing memory overhead by up to 40 percent compared to earlier versions. For a semantic cache storing thousands of embeddings alongside their associated responses, this translates directly to lower infrastructure cost and higher throughput on the same hardware.

Redis also ships with RediSearch, which provides native vector similarity search using both HNSW (Hierarchical Navigable Small World) and flat index types. HNSW is the right choice for production: it provides approximate nearest-neighbour search in sub-millisecond time even across millions of stored vectors. The approximate nature means a tiny fraction of very close matches might be missed, but for semantic caching the trade-off is entirely acceptable. You are matching queries for cache hits, not running precise scientific calculations.

Redis also has native TTL support at the key level, RBAC for access control, clustering for horizontal scale, and a mature ecosystem of client libraries across every major language. It is the most operationally straightforward choice for teams already running Redis for session storage or general caching.

Choosing the Right Similarity Threshold

The similarity threshold is the most important tuning parameter in your semantic cache. Set it too high and you get very few cache hits because only near-identical queries match. Set it too low and you return cached responses for queries that are semantically different enough that the answer would actually differ.

Cosine similarity ranges from -1 (opposite direction) to 1 (identical direction), with 0 meaning unrelated; for text embeddings, scores are rarely negative in practice. For English-language LLM queries using modern embedding models, the useful range for semantic caching sits between 0.88 and 0.97.

| Threshold | Behaviour | Best For |
|---|---|---|
| 0.97 – 1.00 | Near-identical queries only | Exact or near-exact FAQ matching |
| 0.92 – 0.96 | Same question, different phrasing | Customer support, knowledge bases |
| 0.88 – 0.91 | Same topic, somewhat different intent | Only with careful validation |
| Below 0.88 | Too aggressive, risk of wrong answers | Not recommended for production |

Start at 0.93 and measure your hit rate and false positive rate in staging before adjusting. A false positive is when a cached response is returned for a query that is semantically similar but would actually require a different answer. These are harder to detect than false negatives and more damaging to user experience.
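One way to run that staging measurement is to label a sample of query pairs by hand and sweep candidate thresholds over them. The sketch below assumes you have already collected, for each staging query, the similarity to its nearest cached query and a human judgement of whether the cached answer would have been correct. `LabelledPair` and `evaluate_threshold` are hypothetical names, and the false positive rate is defined here as the share of cache hits that would have returned a wrong answer.

```python
from dataclasses import dataclass


@dataclass
class LabelledPair:
    similarity: float   # cosine similarity between a new query and its nearest cached query
    same_answer: bool   # human label: would the cached answer have been correct?


def evaluate_threshold(pairs: list[LabelledPair], threshold: float) -> tuple[float, float]:
    """Return (hit_rate, false_positive_rate) for one candidate threshold."""
    hits = [p for p in pairs if p.similarity >= threshold]
    false_positives = [p for p in hits if not p.same_answer]
    hit_rate = len(hits) / len(pairs) if pairs else 0.0
    fp_rate = len(false_positives) / len(hits) if hits else 0.0
    return hit_rate, fp_rate


def sweep(pairs: list[LabelledPair], thresholds: list[float]) -> dict[float, tuple[float, float]]:
    """Evaluate several thresholds so the trade-off can be compared side by side."""
    return {t: evaluate_threshold(pairs, t) for t in thresholds}
```

Lowering the threshold raises the hit rate and, usually, the false positive rate with it; the sweep makes that trade-off visible on your own query distribution rather than on the generic ranges in the table.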

Node.js Implementation

Install the required packages:

    npm install redis @anthropic-ai/sdk openai

    // semantic-cache.js

    import { createClient } from 'redis';
    import OpenAI from 'openai';
    import crypto from 'crypto';

    const openai = new OpenAI({ apiKey: process.env.OPENAI_API_KEY });

    const redis = createClient({
      url: process.env.REDIS_URL || 'redis://localhost:6379',
      socket: { reconnectStrategy: (retries) => Math.min(retries * 50, 2000) },
    });

    await redis.connect();

    const CACHE_INDEX = 'semantic_cache_idx';
    const CACHE_PREFIX = 'scache:';
    const EMBEDDING_DIM = 1536; // text-embedding-3-small dimensions
    const DEFAULT_THRESHOLD = 0.93;
    const DEFAULT_TTL_SECONDS = 3600; // 1 hour

    // Create the Redis vector index on startup
    async function ensureIndex() {
      try {
        await redis.ft.info(CACHE_INDEX);
      } catch {
        // Index does not exist, create it
        await redis.ft.create(
          CACHE_INDEX,
          {
            '$.embedding': {
              type: 'VECTOR',
              AS: 'embedding', // alias so the KNN query can reference @embedding
              ALGORITHM: 'HNSW',
              TYPE: 'FLOAT32',
              DIM: EMBEDDING_DIM,
              DISTANCE_METRIC: 'COSINE',
              M: 16,
              EF_CONSTRUCTION: 200,
            },
            '$.query': { type: 'TEXT', AS: 'query' },
            '$.namespace': { type: 'TAG', AS: 'namespace' },
            '$.created_at': { type: 'NUMERIC', AS: 'created_at' },
          },
          {
            ON: 'JSON',
            PREFIX: CACHE_PREFIX,
          }
        );
        console.log('[SemanticCache] Index created');
      }
    }

    await ensureIndex();

    // Metrics tracking
    const metrics = {
      totalRequests: 0,
      cacheHits: 0,
      cacheMisses: 0,
      totalSavedCalls: 0,
      get hitRate() {
        return this.totalRequests > 0
          ? ((this.cacheHits / this.totalRequests) * 100).toFixed(1) + '%'
          : '0%';
      },
    };

    async function getEmbedding(text) {
      const response = await openai.embeddings.create({
        model: 'text-embedding-3-small',
        input: text.trim(),
        encoding_format: 'float',
      });
      return response.data[0].embedding;
    }

    // Pack the embedding as raw FLOAT32 bytes for the KNN query parameter
    function embeddingToBuffer(embedding) {
      return Buffer.from(new Float32Array(embedding).buffer);
    }

    export async function semanticCacheGet({
      query,
      namespace = 'default',
      threshold = DEFAULT_THRESHOLD,
    }) {
      metrics.totalRequests++;

      const embedding = await getEmbedding(query);
      const vectorBuffer = embeddingToBuffer(embedding);

      // KNN search for the most similar cached query
      const results = await redis.ft.search(
        CACHE_INDEX,
        `(@namespace:{${namespace}})=>[KNN 1 @embedding $vec AS score]`,
        {
          PARAMS: { vec: vectorBuffer },
          SORTBY: 'score',
          DIALECT: 2,
          RETURN: ['$.response', '$.query', 'score'],
          LIMIT: { from: 0, size: 1 },
        }
      );

      if (results.total === 0) {
        metrics.cacheMisses++;
        return null;
      }

      const top = results.documents[0];

      // Redis COSINE distance is 1 - cosine similarity (0 = identical, 2 = opposite),
      // so the cosine similarity is recovered as 1 - distance.
      const distance = parseFloat(top.value.score);
      const similarity = 1 - distance;

      if (similarity >= threshold) {
        metrics.cacheHits++;
        metrics.totalSavedCalls++;
        console.log(
          `[SemanticCache] HIT | similarity=${similarity.toFixed(4)} | ` +
            `original="${top.value['$.query']?.substring(0, 60)}"`
        );
        return {
          response: top.value['$.response'],
          similarity,
          cacheHit: true,
        };
      }

      metrics.cacheMisses++;
      return null;
    }

    export async function semanticCacheSet({
      query,
      response,
      namespace = 'default',
      ttlSeconds = DEFAULT_TTL_SECONDS,
    }) {
      const embedding = await getEmbedding(query);
      const cacheKey = `${CACHE_PREFIX}${crypto.randomUUID()}`;

      await redis.json.set(cacheKey, '$', {
        query,
        response,
        namespace,
        embedding: Array.from(embedding),
        created_at: Date.now(),
      });
      await redis.expire(cacheKey, ttlSeconds);
    }

    export function getMetrics() {
      return { ...metrics };
    }

LLM Client with Semantic Cache Layer

    // llm-with-semantic-cache.js
    import Anthropic from '@anthropic-ai/sdk';
    import { semanticCacheGet, semanticCacheSet, getMetrics } from './semantic-cache.js';

    const anthropic = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

    const SYSTEM_PROMPT = `You are a helpful enterprise support assistant.
    Answer questions accurately and concisely.
    If you are unsure, say so rather than guessing.`;

    export async function askWithSemanticCache({
      question,
      namespace = 'default',
      threshold = 0.93,
      ttlSeconds = 3600,
    }) {
      // Check semantic cache first - no LLM call if hit
      const cached = await semanticCacheGet({ query: question, namespace, threshold });

      if (cached) {
        return {
          answer: cached.response,
          source: 'cache',
          similarity: cached.similarity,
          cost: { llmCallMade: false, estimatedSavingsUSD: 0.003 }, // approx saving per call
        };
      }

      // Cache miss - call the LLM
      const response = await anthropic.messages.create({
        model: 'claude-sonnet-4-6',
        max_tokens: 1024,
        system: SYSTEM_PROMPT,
        messages: [{ role: 'user', content: question }],
      });

      const answer = response.content[0].text;

      // Store in semantic cache for future similar queries
      await semanticCacheSet({ query: question, response: answer, namespace, ttlSeconds });

      return {
        answer,
        source: 'llm',
        similarity: null,
        cost: { llmCallMade: true, estimatedSavingsUSD: 0 },
      };
    }

    // Example usage demonstrating cache behaviour across similar queries
    async function demo() {
      const questions = [
        // First query - cache miss, calls LLM
        'How do I reset my API key?',
        // Semantically similar - should hit cache
        'I need to regenerate my API credentials',
        // Another variant - should hit cache
        'Where can I get a new API key?',
        // Different topic - cache miss
        'What are the rate limits for the API?',
        // Similar to rate limits question - should hit
        'How many requests per minute am I allowed to make?',
      ];

      for (const q of questions) {
        const result = await askWithSemanticCache({ question: q, namespace: 'api_support' });
        console.log(`Q: ${q}`);
        console.log(`Source: ${result.source}${result.similarity ? ` (similarity: ${result.similarity.toFixed(4)})` : ''}`);
        console.log(`A: ${result.answer.substring(0, 100)}...\n`);
      }

      console.log('Cache metrics:', getMetrics());
    }

    demo().catch(console.error);

Python Implementation

    # semantic_cache.py
    import os
    import uuid
    import time
    import struct
    from dataclasses import dataclass
    from typing import Optional

    import redis
    from redis.commands.search.field import VectorField, TextField, TagField, NumericField
    from redis.commands.search.indexDefinition import IndexDefinition, IndexType
    from redis.commands.search.query import Query
    import openai

    openai_client = openai.OpenAI(api_key=os.environ["OPENAI_API_KEY"])

    redis_client = redis.Redis(
        host=os.environ.get("REDIS_HOST", "localhost"),
        port=int(os.environ.get("REDIS_PORT", 6379)),
        decode_responses=False,  # Binary for vector storage
    )

    CACHE_INDEX = "semantic_cache_idx"
    CACHE_PREFIX = "scache:"
    EMBEDDING_DIM = 1536
    DEFAULT_THRESHOLD = 0.93
    DEFAULT_TTL = 3600


    @dataclass
    class CacheMetrics:
        total_requests: int = 0
        cache_hits: int = 0
        cache_misses: int = 0

        @property
        def hit_rate_percent(self) -> float:
            if self.total_requests == 0:
                return 0.0
            return round(self.cache_hits / self.total_requests * 100, 1)


    _metrics = CacheMetrics()


    def _ensure_index():
        try:
            redis_client.ft(CACHE_INDEX).info()
        except Exception:
            # Index does not exist yet, create it
            schema = [
                VectorField(
                    "$.embedding",
                    "HNSW",
                    {
                        "TYPE": "FLOAT32",
                        "DIM": EMBEDDING_DIM,
                        "DISTANCE_METRIC": "COSINE",
                        "M": 16,
                        "EF_CONSTRUCTION": 200,
                    },
                    as_name="embedding",
                ),
                TextField("$.query", as_name="query"),
                TagField("$.namespace", as_name="namespace"),
                NumericField("$.created_at", as_name="created_at"),
            ]
            redis_client.ft(CACHE_INDEX).create_index(
                schema,
                definition=IndexDefinition(
                    prefix=[CACHE_PREFIX], index_type=IndexType.JSON
                ),
            )
            print("[SemanticCache] Index created")


    _ensure_index()


    def _get_embedding(text: str) -> list[float]:
        response = openai_client.embeddings.create(
            model="text-embedding-3-small",
            input=text.strip(),
            encoding_format="float",
        )
        return response.data[0].embedding


    def _embedding_to_bytes(embedding: list[float]) -> bytes:
        # Pack as raw FLOAT32 values for the KNN query parameter
        return struct.pack(f"{len(embedding)}f", *embedding)


    def cache_get(
        query: str,
        namespace: str = "default",
        threshold: float = DEFAULT_THRESHOLD,
    ) -> Optional[dict]:
        _metrics.total_requests += 1

        embedding = _get_embedding(query)
        vector_bytes = _embedding_to_bytes(embedding)

        q = (
            Query(f"(@namespace:{{{namespace}}})=>[KNN 1 @embedding $vec AS score]")
            .sort_by("score")
            .return_fields("$.response", "$.query", "score")
            .dialect(2)
            .paging(0, 1)
        )
        results = redis_client.ft(CACHE_INDEX).search(q, query_params={"vec": vector_bytes})

        if not results.docs:
            _metrics.cache_misses += 1
            return None

        top = results.docs[0]
        # Redis COSINE distance is 1 - cosine similarity, so invert it directly
        distance = float(top.score)
        similarity = 1 - distance

        if similarity >= threshold:
            _metrics.cache_hits += 1
            print(
                f"[SemanticCache] HIT | similarity={similarity:.4f} | "
                f"original='{getattr(top, '$.query', '')[:60]}'"
            )
            return {
                "response": getattr(top, "$.response", ""),
                "similarity": similarity,
                "cache_hit": True,
            }

        _metrics.cache_misses += 1
        return None


    def cache_set(
        query: str,
        response: str,
        namespace: str = "default",
        ttl_seconds: int = DEFAULT_TTL,
    ) -> None:
        embedding = _get_embedding(query)
        cache_key = f"{CACHE_PREFIX}{uuid.uuid4()}"
        redis_client.json().set(
            cache_key,
            "$",
            {
                "query": query,
                "response": response,
                "namespace": namespace,
                "embedding": embedding,
                "created_at": int(time.time()),
            },
        )
        redis_client.expire(cache_key, ttl_seconds)


    def get_metrics() -> CacheMetrics:
        return _metrics

Cache Invalidation Strategies

Semantic cache entries go stale when the underlying information they are based on changes. A cached answer to “What are the current API rate limits?” becomes wrong the moment you update your rate limits. You need a deliberate invalidation strategy.

    flowchart LR
        subgraph TTL["TTL-based (Passive)"]
            T1["Set TTL per entry\nbased on data volatility"]
            T2["High volatility data\nTTL: 15 minutes"]
            T3["Medium volatility data\nTTL: 1 hour"]
            T4["Static reference data\nTTL: 24 hours"]
            T1 --> T2 & T3 & T4
        end
        subgraph Namespace["Namespace-based (Active)"]
            N1["Tag entries by\ndata source namespace"]
            N2["On data update\ndelete all keys\nin that namespace"]
            N1 --> N2
        end
        subgraph Version["Version-based (Hybrid)"]
            V1["Include data version\nin namespace tag"]
            V2["On update increment\nversion, old entries\nexpire naturally"]
            V1 --> V2
        end
        style TTL fill:#1e3a5f,color:#fff
        style Namespace fill:#166534,color:#fff
        style Version fill:#713f12,color:#fff
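The TTL tiers in the diagram can be expressed as a small lookup so that callers pick a volatility class instead of hard-coding seconds. This is an illustrative sketch: the tier names and the `ttl_for` helper are hypothetical, and only the three durations come from the diagram above.

```python
# TTL tiers from the diagram, in seconds. The tier names are illustrative.
TTL_BY_VOLATILITY = {
    "high": 15 * 60,         # high volatility data: 15 minutes
    "medium": 60 * 60,       # medium volatility data: 1 hour
    "static": 24 * 60 * 60,  # static reference data: 24 hours
}


def ttl_for(volatility: str) -> int:
    """Return the TTL in seconds for a volatility class, rejecting unknown classes."""
    try:
        return TTL_BY_VOLATILITY[volatility]
    except KeyError:
        known = ", ".join(sorted(TTL_BY_VOLATILITY))
        raise ValueError(f"unknown volatility {volatility!r}; expected one of: {known}") from None
```

With the Python cache module from earlier, a write for fast-changing data would then look like `cache_set(query, response, namespace="pricing", ttl_seconds=ttl_for("high"))`, where the `pricing` namespace is only an example.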

    // cache-invalidation.js
    import { createClient } from 'redis';

    const redis = createClient({ url: process.env.REDIS_URL });
    await redis.connect();

    /**
     * Delete all cache entries belonging to a namespace.
     * Call this when the underlying data for that namespace changes.
     * Note: a single call removes at most 10,000 entries; call it again
     * until it returns 0 if the namespace can be larger than that.
     */
    export async function invalidateNamespace(namespace) {
      // Search for all keys in this namespace
      const results = await redis.ft.search(
        'semantic_cache_idx',
        `@namespace:{${namespace}}`,
        { LIMIT: { from: 0, size: 10000 }, RETURN: [] }
      );

      if (results.total === 0) {
        console.log(`[Invalidation] No entries found for namespace: ${namespace}`);
        return 0;
      }

      const keys = results.documents.map((d) => d.id);
      await redis.del(keys);
      console.log(`[Invalidation] Deleted ${keys.length} entries for namespace: ${namespace}`);
      return keys.length;
    }

    /**
     * Versioned namespace helper.
     * Increment version to effectively invalidate all old entries
     * without an expensive delete sweep.
     */
    export class VersionedNamespace {
      constructor(baseName, redisClient) {
        this.baseName = baseName;
        this.redis = redisClient;
        this.versionKey = `ns_version:${baseName}`;
      }

      async currentNamespace() {
        const version = await this.redis.get(this.versionKey) || '1';
        return `${this.baseName}_v${version}`;
      }

      async invalidate() {
        // Seed the counter at 1 if it does not exist yet. Without this, the
        // first INCR would create the key at 1 and the namespace would stay at v1.
        await this.redis.set(this.versionKey, '1', { NX: true });
        const newVersion = await this.redis.incr(this.versionKey);
        console.log(`[VersionedNamespace] ${this.baseName} bumped to v${newVersion}`);
        return `${this.baseName}_v${newVersion}`;
      }
    }

    // Usage: when product docs update, bump the version
    const docsNamespace = new VersionedNamespace('product_docs', redis);

    // Write cache using current version
    const ns = await docsNamespace.currentNamespace(); // "product_docs_v1"
    // ... cache entries use this namespace ...

    // On docs update - old entries become unreachable, new queries build fresh cache
    await docsNamespace.invalidate(); // "product_docs_v2"

Semantic Cache + Prompt Cache: Layered Architecture

The most cost-efficient production architecture combines semantic caching at the application layer with prompt caching at the model layer. They are not competing approaches; they operate at different levels and solve different problems. Semantic caching eliminates LLM calls entirely for repeated queries. Prompt caching reduces token costs on the calls that do reach the LLM.

    flowchart TD
        A[User Query] --> B{Layer 1:\nSemantic Cache\nRedis Vector Search}
        B -->|Hit - similarity above threshold| C[Return Cached Response\nZero LLM cost]
        B -->|Miss| D{Layer 2:\nPrompt Cache\nProvider-level KV Cache}
        D -->|Cache hit\non system prompt + docs| E[Process only\nnew tokens\nReduced cost]
        D -->|Full miss\nCold request| F[Full LLM Processing\nFull cost]
        E --> G[Generate Response]
        F --> G
        G --> H[Store in Semantic Cache]
        G --> I[Response to User]
        C --> I
        style C fill:#22c55e,color:#fff
        style E fill:#3b82f6,color:#fff
        style F fill:#ef4444,color:#fff

In a well-tuned system with 50,000 daily requests:

- Semantic cache catches 40,000 requests (80 percent hit rate) – zero LLM cost on those

- Of the 10,000 that reach the LLM, prompt caching reduces input token costs by 70 to 90 percent on each

- Only the 10,000 requests pay any token cost at all, and even those are dramatically cheaper
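The arithmetic behind that example can be worked through in code. This is a back-of-the-envelope sketch under stated assumptions: the $0.003 per-call figure is the rough estimate used in the demo code earlier, the 50 percent share of per-call cost attributed to input tokens is a hypothetical value chosen for illustration, and the 80 percent input reduction is simply a point inside the 70 to 90 percent range. Embedding and Redis costs are ignored, and `daily_llm_cost` is a name introduced here.

```python
def daily_llm_cost(
    total_requests: int,
    semantic_hit_rate: float,
    cost_per_call_usd: float,
    input_cost_share: float,
    prompt_cache_input_reduction: float,
) -> dict:
    """Estimate daily LLM spend with and without the two cache layers."""
    # Layer 1: only semantic cache misses reach the LLM at all.
    llm_calls = round(total_requests * (1 - semantic_hit_rate))

    # Layer 2: prompt caching discounts only the input-token part of each call.
    input_part = input_cost_share * (1 - prompt_cache_input_reduction)
    output_part = 1 - input_cost_share
    cost_per_miss = cost_per_call_usd * (input_part + output_part)

    baseline = total_requests * cost_per_call_usd
    layered = llm_calls * cost_per_miss
    return {
        "llm_calls": llm_calls,
        "baseline_usd": baseline,
        "layered_usd": layered,
        "saving_fraction": 1 - layered / baseline,
    }


if __name__ == "__main__":
    estimate = daily_llm_cost(
        total_requests=50_000,
        semantic_hit_rate=0.80,
        cost_per_call_usd=0.003,           # rough per-call figure from the demo code
        input_cost_share=0.5,              # hypothetical split between input and output cost
        prompt_cache_input_reduction=0.80, # within the 70-90 percent range above
    )
    print(estimate)
```

Under these assumptions most of the saving comes from the semantic layer; prompt caching then trims the remaining calls, and its contribution grows with the share of cost that comes from input tokens.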

Redis 8.6 Production Configuration

    # redis.conf optimised for semantic caching workloads (Redis 8.6)

    # Memory optimisation - new in 8.6, reduces hash/sorted set overhead by 40%
    hash-max-listpack-entries 512
    hash-max-listpack-value 64
    zset-max-listpack-entries 128
    zset-max-listpack-value 64

    # Set max memory with LRU eviction to manage cache size
    maxmemory 4gb
    maxmemory-policy allkeys-lru

    # Persistence: RDB snapshots for recovery, AOF disabled for latency
    save 3600 1
    save 300 100
    appendonly no

    # RBAC for enterprise access control (Redis 8.6 ACL)
    # Define in acl file
    aclfile /etc/redis/users.acl

    # Connection tuning
    tcp-keepalive 300
    timeout 0
    tcp-backlog 511

    # Cluster mode for horizontal scale (if needed)
    # cluster-enabled yes
    # cluster-config-file nodes.conf
    # cluster-node-timeout 5000

    # users.acl - RBAC example for semantic cache access control

    # Application user - can read/write cache keys only
    user app_user on >StrongPassword123 ~scache:* ~semantic_cache_idx &* +@read +@write +@sortedset +@hash +ft.search +ft.create +json.set +json.get +expire +del

    # Read-only analytics user
    user analytics_user on >AnalyticsPass456 ~scache:* &* +@read +ft.search +ft.info

    # Admin user
    user admin on >AdminPass789 ~* &* +@all

Measuring Semantic Cache ROI

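The key metrics to track are hit rate, false positive rate, average embedding latency, and estimated cost savings per day. The monitoring helper below computes hit rate, average hit similarity, hit and miss response times, and projected daily savings from recorded events. False positive rate has to come from auditing logged hits, and embedding latency needs to be timed separately.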

    // cache-analytics.js
    export class SemanticCacheAnalytics {
      constructor(redisClient) {
        this.redis = redisClient;
        this.events = [];
      }

      record({ queryId, cacheHit, similarity, responseTimeMs, savedCostUsd = 0 }) {
        this.events.push({
          timestamp: Date.now(),
          queryId,
          cacheHit,
          similarity: similarity ?? null,
          responseTimeMs,
          savedCostUsd,
        });

        // Keep last 10,000 events in memory
        if (this.events.length > 10_000) this.events.shift();
      }

      getSummary(windowMinutes = 60) {
        const cutoff = Date.now() - windowMinutes * 60 * 1000;
        const recent = this.events.filter((e) => e.timestamp > cutoff);

        if (recent.length === 0) return null;

        const hits = recent.filter((e) => e.cacheHit);
        const misses = recent.filter((e) => !e.cacheHit);
        const hitSimilarities = hits.map((e) => e.similarity).filter(Boolean);

        return {
          windowMinutes,
          totalRequests: recent.length,
          cacheHits: hits.length,
          hitRatePercent: ((hits.length / recent.length) * 100).toFixed(1),
          avgHitSimilarity: hitSimilarities.length
            ? (hitSimilarities.reduce((a, b) => a + b, 0) / hitSimilarities.length).toFixed(4)
            : null,
          avgHitResponseMs: hits.length
            ? Math.round(hits.reduce((s, e) => s + e.responseTimeMs, 0) / hits.length)
            : null,
          avgMissResponseMs: misses.length
            ? Math.round(misses.reduce((s, e) => s + e.responseTimeMs, 0) / misses.length)
            : null,
          totalSavedCostUsd: recent.reduce((s, e) => s + (e.savedCostUsd || 0), 0).toFixed(4),
          // Extrapolate savings in the window to a full day
          projectedDailySavingsUsd: (
            (recent.reduce((s, e) => s + (e.savedCostUsd || 0), 0) / windowMinutes) *
            60 * 24
          ).toFixed(2),
        };
      }
    }

Common Pitfalls

Caching responses that depend on user context. If your LLM response varies based on the current user’s permissions, account state, or personalisation, you cannot share cached responses across users. Either namespace caches per user (which reduces hit rates significantly) or only cache responses that are genuinely universal.

Not validating false positive rates before going to production. A threshold that looks good in testing can produce wrong answers in production on query distributions you did not anticipate. Log every cache hit alongside the original query it matched so you can audit the matches during rollout.

Embedding model mismatch after updates. If you update your embedding model, all existing vector embeddings become incompatible. You must flush your entire cache when changing embedding models, not just the entries you think might be affected.
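One way to guard against accidentally matching old vectors while a flush is in progress is to make the embedding model part of the namespace, so entries written under one model are never searched under another. This is a sketch of that idea, not part of the implementation above: `model_scoped_namespace` is a hypothetical helper, and it assumes the old and new models share the same vector dimension. If the dimension changes, the index itself has to be recreated.

```python
import re


def model_scoped_namespace(base: str, embedding_model: str) -> str:
    """Combine a base namespace with a slug of the embedding model name.

    Non-alphanumeric characters are replaced with underscores so the result
    stays a single token when used as a TAG value in the search query.
    """
    slug = re.sub(r"[^A-Za-z0-9]+", "_", embedding_model).strip("_")
    return f"{base}__{slug}"
```

Passing the result as the `namespace` argument to the cache read and write functions means a model change starts from an empty namespace automatically, while the old entries age out through their TTL or are removed by the flush.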

Ignoring embedding latency. Every request, hit or miss, incurs an embedding API call. At high volume this adds latency and cost. Batch embedding requests where possible, and consider self-hosting a smaller embedding model if latency is critical.

What Is Next

Part 6 steps back from individual provider implementations to cover context engineering as a discipline: how to design your entire prompt structure for cache efficiency, static-first architecture patterns, and cache-aware RAG pipeline design. It also covers the emerging pattern of treating prompts as versioned code artifacts rather than ad-hoc strings.
