Context engineering is the discipline of deciding what goes into your LLM context window, in what order, and in what structure, so that you get the best cache efficiency, retrieval quality, and cost control. This part covers static-first architecture, cache-aware RAG design, prompt versioning, and token budget management.
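
To make the "static-first" idea concrete, here is a minimal sketch, not the implementation from the post. It assumes that provider-side prompt caches match on a stable leading prefix, so it places unchanging material first and the per-request query last. The names `build_context` and `shared_prefix_length` are hypothetical helpers introduced only for illustration.

```python
def build_context(system_instructions, reference_docs, user_query):
    """Assemble a prompt with static content first and the dynamic query last.

    Sorting the reference documents is an illustrative choice: it keeps the
    static prefix identical across requests even if the caller passes the
    documents in a different order.
    """
    static_parts = [system_instructions] + sorted(reference_docs)
    return "\n\n".join(static_parts + [user_query])


def shared_prefix_length(a, b):
    """Count how many leading characters two prompts have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


if __name__ == "__main__":
    first = build_context("You are a support bot.", ["Doc B", "Doc A"], "How do I reset my password?")
    second = build_context("You are a support bot.", ["Doc A", "Doc B"], "Where is my invoice?")
    # The two prompts differ only in the trailing query, so the shared
    # prefix covers the whole static section.
    print(shared_prefix_length(first, second))
```

Characters stand in for tokens here purely to keep the sketch dependency-free; a real token budget would be measured with the tokenizer of the model you call.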

Tag: token optimization

Semantic Caching with Redis 8.6: Vector Similarity Matching for LLM Cost Optimization in Production

Semantic caching operates above the model layer, using vector embeddings to match similar queries to previously computed responses. With Redis 8.6, you can reach cache hit rates of 80 percent or higher, and every hit is served without calling the LLM at all. This part covers the full architecture, similarity thresholds, cache invalidation, and production implementations in both Node.js and Python.
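
The matching step described here can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the Redis-based implementation from the post: an in-memory list stands in for the vector index, the embeddings are toy vectors rather than output from a real embedding model, and the `SemanticCache` class and its 0.9 threshold are hypothetical choices made for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Toy semantic cache: a linear scan over stored (embedding, response) pairs."""

    def __init__(self, threshold=0.9):
        # Assumed threshold; a real deployment would tune this per workload.
        self.threshold = threshold
        self.entries = []

    def store(self, embedding, response):
        self.entries.append((embedding, response))

    def lookup(self, embedding):
        """Return the best cached response at or above the threshold, else None."""
        best_score = -1.0
        best_response = None
        for stored, response in self.entries:
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_score, best_response = score, response
        if best_score >= self.threshold:
            return best_response
        return None  # cache miss: the caller would now call the LLM and store the result


if __name__ == "__main__":
    cache = SemanticCache(threshold=0.9)
    cache.store([1.0, 0.0], "cached answer")
    print(cache.lookup([0.99, 0.05]))  # near-duplicate query vector: hit
    print(cache.lookup([0.0, 1.0]))    # unrelated query vector: miss
```

The threshold is the main design lever: set it too low and users receive answers to questions they did not ask, set it too high and the hit rate collapses toward exact-match caching.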

Context Caching with Gemini 3.1 Pro and Flash-Lite: Implicit vs Explicit Caching, Storage Costs, and Python Production Implementation

Google Gemini 3.1 Pro and Flash-Lite offer both implicit and explicit context caching, with a default TTL of one hour, the most generous of any major provider. This part covers how both modes work, how to account for storage costs, and a complete Python production implementation for Vertex AI and the Gemini API.

Prompt Caching with GPT-5.4: Automatic Caching, Tool Search, and C# Production Implementation

GPT-5.4 makes prompt caching automatic with no configuration required. This part covers how OpenAI’s caching works under the hood, how to structure prompts for maximum hit rates, how the new Tool Search feature reduces agent token costs, and a full production C# implementation with cost tracking.

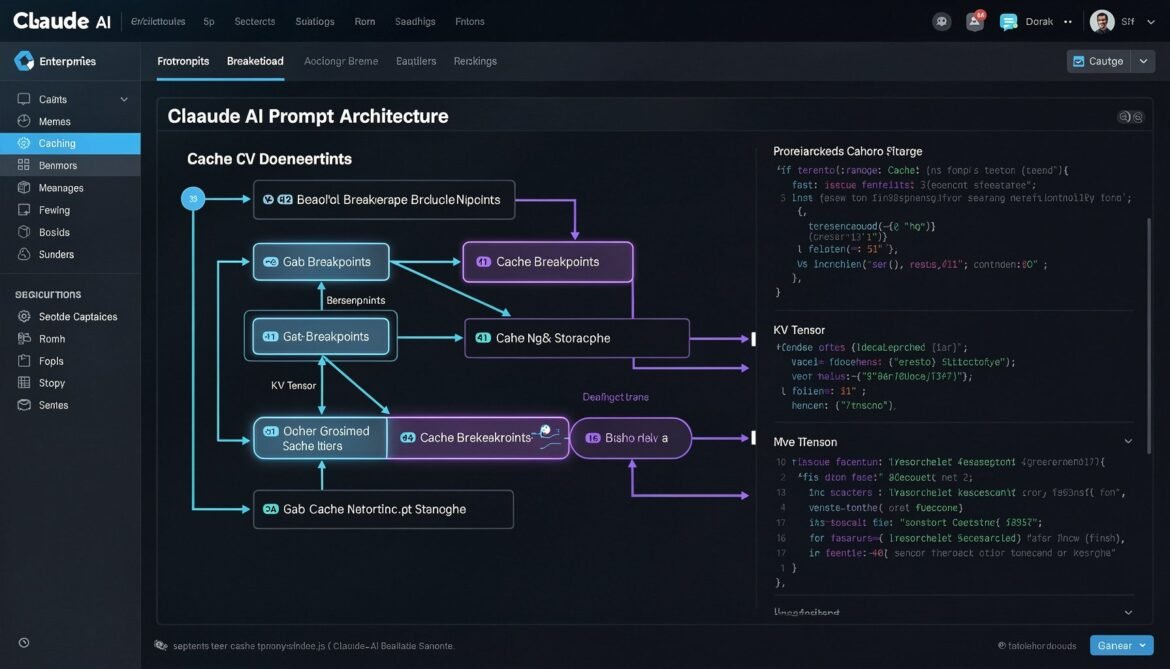

Prompt Caching with Claude Sonnet 4.6: cache_control Breakpoints, TTL Strategies, and Node.js Production Implementation

Claude Sonnet 4.6 gives developers explicit control over prompt caching through cache_control breakpoints. This part covers how to structure your prompts, configure TTL, use multi-breakpoint strategies, and implement a production-ready caching layer in Node.js.

Prompt Caching and Context Engineering in Production: What It Is and Why It Matters in 2026

Prompt caching is one of the most impactful yet underused techniques in enterprise AI today. This first part of the series explains what it is, how it works under the hood, and why it should be a default part of your production AI architecture in 2026.

Real-Time Sentiment Analysis with Azure Event Grid and OpenAI – Part 2: Azure OpenAI Integration and AI Processing

Welcome back to our real-time sentiment analysis series! In Part 1, we established the event-driven architecture foundation. Now, let’s dive deep into the AI processing