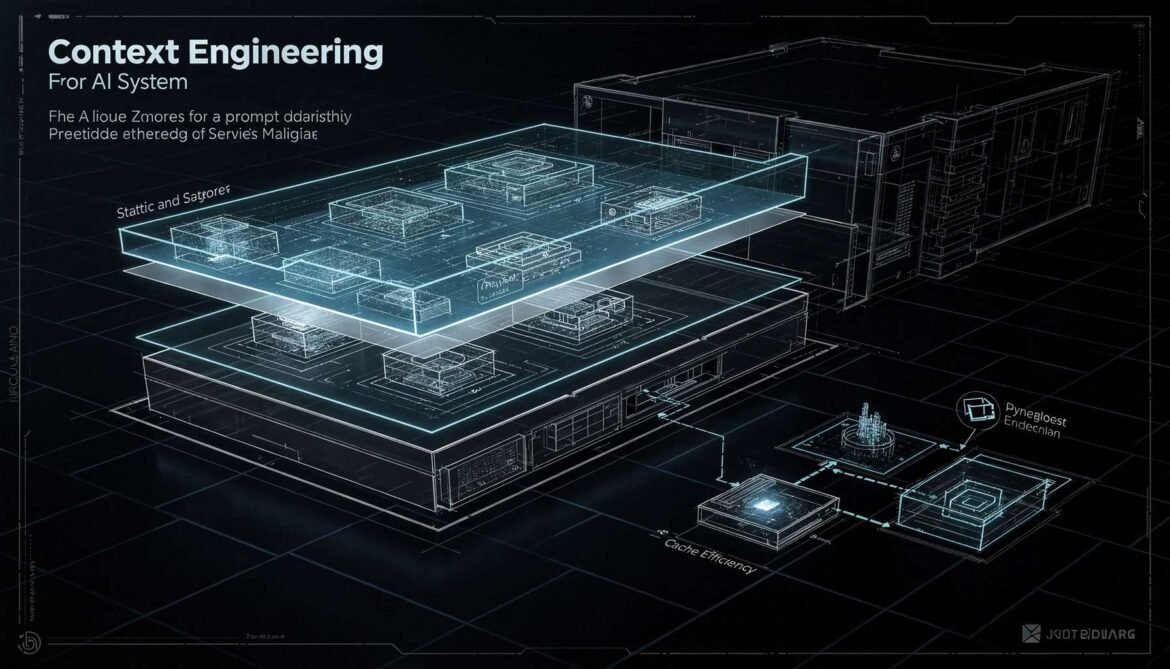

Context engineering is the discipline of deciding what goes into your LLM context window, in what order, and in what structure, so that you get the best cache efficiency and retrieval quality at a controlled cost. This part covers static-first architecture, cache-aware RAG design, prompt versioning, and token budget management.
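As a rough illustration of the static-first idea, the sketch below orders context segments so that content that rarely changes comes before per-request content, which is what lets prefix-based prompt caches reuse the leading part of the prompt. The `Segment` type and `assemble_context` function are hypothetical names made up for this example, not part of any library or of the series' own code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One piece of the context window (hypothetical type for this sketch)."""
    name: str
    text: str
    static: bool  # True if the text is identical across requests


def assemble_context(segments: list[Segment]) -> str:
    """Place static segments first, keeping relative order within each group."""
    # sorted() is stable, so segments with the same `static` flag keep their
    # original order; False sorts before True, hence the `not`.
    ordered = sorted(segments, key=lambda s: not s.static)
    return "\n\n".join(s.text for s in ordered)


segments = [
    Segment("user_query", "Q", static=False),
    Segment("system_prompt", "S", static=True),
    Segment("retrieved_docs", "D", static=False),
    Segment("tool_definitions", "T", static=True),
]
context = assemble_context(segments)
```

Here the system prompt and tool definitions end up at the front of the assembled string and the query and retrieved documents at the end, so the part that varies per request never interrupts the stable prefix.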

Tag: Prompt Versioning

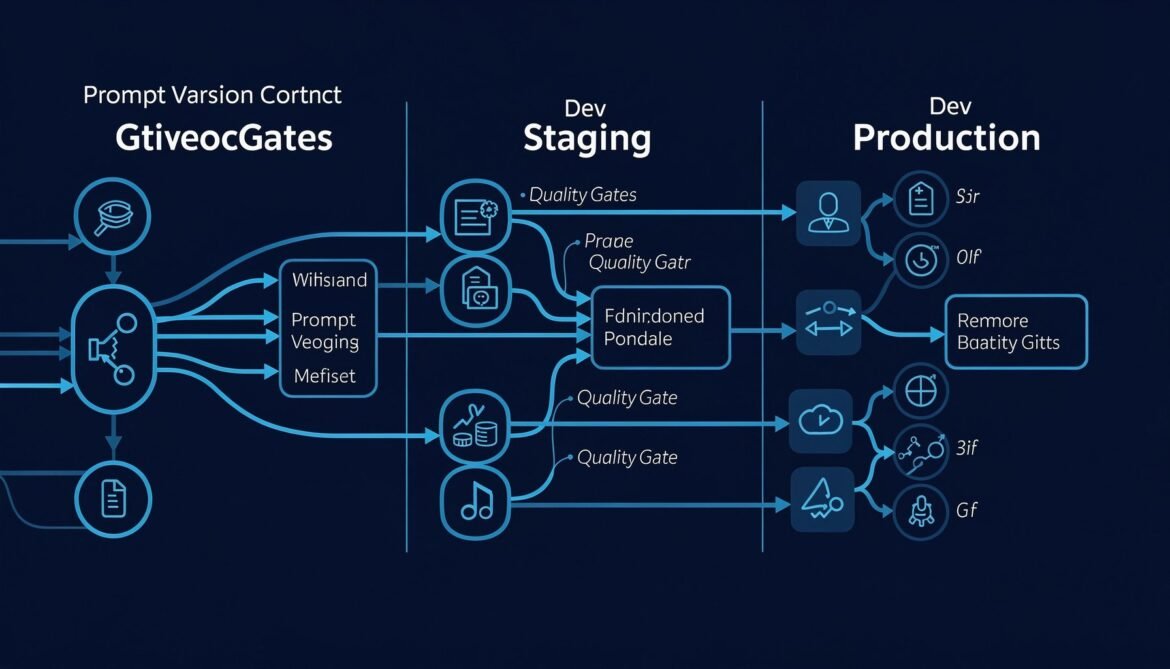

Prompt Management and Versioning: Treating Prompts as Production Code

Prompt changes are production changes. A wording edit at 3 p.m. on a Friday can silently degrade thousands of responses without raising a single error. This post builds a production-grade prompt management system with versioning, A/B testing, quality gates, and rollback, with implementations in Node.js, Python, and C#.
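To make the versioning and rollback ideas concrete, here is a minimal in-memory sketch in Python. It is not the system the post builds: `PromptRegistry`, `PromptVersion`, and their methods are hypothetical names chosen for illustration, and A/B testing, quality gates, and persistent storage are left out.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptVersion:
    """An immutable snapshot of a prompt template (hypothetical type)."""
    version: int
    template: str
    checksum: str  # short content hash, useful for spotting silent edits


class PromptRegistry:
    """In-memory registry: publish new versions, read the active one, roll back."""

    def __init__(self) -> None:
        self._versions: dict[str, list[PromptVersion]] = {}
        self._history: dict[str, list[int]] = {}  # activation history per prompt

    def publish(self, name: str, template: str) -> PromptVersion:
        """Store a new version of `name` and make it the active one."""
        versions = self._versions.setdefault(name, [])
        checksum = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        pv = PromptVersion(len(versions) + 1, template, checksum)
        versions.append(pv)
        self._history.setdefault(name, []).append(pv.version)
        return pv

    def active(self, name: str) -> PromptVersion:
        """Return the currently active version of `name`."""
        current = self._history[name][-1]
        return self._versions[name][current - 1]  # versions are 1-based

    def rollback(self, name: str) -> PromptVersion:
        """Reactivate the previously active version of `name`."""
        history = self._history[name]
        if len(history) < 2:
            raise ValueError(f"no earlier version of {name!r} to roll back to")
        history.pop()
        return self.active(name)


registry = PromptRegistry()
registry.publish("summarize", "Summarize the text below:\n{text}")
registry.publish("summarize", "Summarize the text below in one sentence:\n{text}")
restored = registry.rollback("summarize")
```

After the rollback, `registry.active("summarize")` is version 1 again, while version 2 stays stored for later comparison. Keeping every version immutable and tracking activations separately is one simple way to make a rollback a pointer move instead of a re-edit.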